Andrew Ng's "Machine Learning" on Coursera: ex6

2016-08-26 14:17

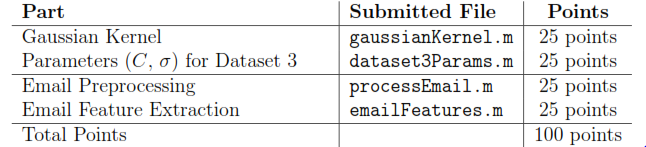

According to the ex6 handout provided with the course, the exercise requires writing the code for the following computations:

I. gaussianKernel.m

The task is to compute the similarity between two vectors, x1 and x2.

```matlab
function sim = gaussianKernel(x1, x2, sigma)
%RBFKERNEL returns a radial basis function kernel between x1 and x2
%   sim = gaussianKernel(x1, x2) returns a gaussian kernel between x1 and x2
%   and returns the value in sim

% Ensure that x1 and x2 are column vectors
x1 = x1(:); x2 = x2(:);

% You need to return the following variables correctly.
sim = 0;

% ====================== YOUR CODE HERE ======================
% Instructions: Fill in this function to return the similarity between x1
%               and x2 computed using a Gaussian kernel with bandwidth
%               sigma
%
%

sim = exp(-sum((x1-x2).^2)/(2*sigma^2));

% =============================================================

end
```

The code follows directly from the formula given in the ex6 handout.
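As a quick sanity check, the kernel expression can be evaluated by hand on a small input. The sketch below inlines the same expression used in `gaussianKernel.m`; the vectors and `sigma = 2` are illustrative values chosen for this example, not taken from the text above.

```matlab
% Illustrative inputs (assumed for this example).
x1 = [1; 2; 1];
x2 = [0; 4; -1];
sigma = 2;

% Squared Euclidean distance: 1^2 + (-2)^2 + 2^2 = 9
sqDist = sum((x1 - x2) .^ 2);

% Gaussian (RBF) kernel: exp(-||x1 - x2||^2 / (2 * sigma^2))
sim = exp(-sqDist / (2 * sigma ^ 2));

fprintf('sim = %f\n', sim);   % exp(-9/8), approximately 0.3247
```

Identical vectors give a similarity of 1, and the value decays toward 0 as the vectors move apart; a larger `sigma` makes that decay slower.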

II. dataset3Params.m

The task is to find the optimal parameters C and sigma.

```matlab
function [C, sigma] = dataset3Params(X, y, Xval, yval)
%EX6PARAMS returns your choice of C and sigma for Part 3 of the exercise
%where you select the optimal (C, sigma) learning parameters to use for SVM
%with RBF kernel
%   [C, sigma] = EX6PARAMS(X, y, Xval, yval) returns your choice of C and
%   sigma. You should complete this function to return the optimal C and
%   sigma based on a cross-validation set.
%

% You need to return the following variables correctly.
C = 1;
sigma = 0.3;

% ====================== YOUR CODE HERE ======================
% Instructions: Fill in this function to return the optimal C and sigma
%               learning parameters found using the cross validation set.
%               You can use svmPredict to predict the labels on the cross
%               validation set. For example,
%                   predictions = svmPredict(model, Xval);
%               will return the predictions on the cross validation set.
%
%  Note: You can compute the prediction error using
%        mean(double(predictions ~= yval))
%

% Candidate values tried for both C and sigma
vec = [0.01 0.03 0.1 0.3 1 3 10 30]';

% Start from an infinite error so the first grid point always becomes
% the initial best
meanMin = Inf;
C_optimal = C;
sigma_optimal = sigma;

for i = 1:length(vec)
    for j = 1:length(vec)
        C = vec(i);
        sigma = vec(j);
        model = svmTrain(X, y, C, @(x1, x2) gaussianKernel(x1, x2, sigma));
        predictions = svmPredict(model, Xval);

        % Validation error for this (C, sigma) pair, computed once
        err = mean(double(predictions ~= yval));
        if err <= meanMin
            meanMin = err;
            C_optimal = C;
            sigma_optimal = sigma;
        end
    end
end

C = C_optimal;
sigma = sigma_optimal;

% =========================================================================

end
```

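A nested loop tries every candidate combination of C and sigma and keeps the pair with the lowest prediction error on the cross-validation set.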
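The same exhaustive search can also be written without explicit loops. The sketch below is only an illustration: `valError` is a hypothetical stand-in for "train with (C, sigma) and return the validation error" (in the exercise it would wrap `svmTrain` and `svmPredict`), and its toy error surface is made up so the snippet runs on its own.

```matlab
% Hypothetical stand-in for "train with (C, sigma), return validation error".
% The toy surface below is invented for illustration only.
valError = @(C, sigma) (log10(C)) .^ 2 + (log10(sigma) + 1) .^ 2;

% Same candidate values as in dataset3Params.m
vec = [0.01 0.03 0.1 0.3 1 3 10 30];

% Build every (C, sigma) combination of the candidate values.
[Cgrid, Sgrid] = ndgrid(vec, vec);

% Evaluate the error once per combination.
errs = arrayfun(valError, Cgrid, Sgrid);

% Pick the combination with the smallest error.
[minErr, k] = min(errs(:));
C = Cgrid(k);
sigma = Sgrid(k);

fprintf('best C = %g, sigma = %g, error = %g\n', C, sigma, minErr);
```

For this toy surface the minimum sits at C = 1 and sigma = 0.1. Note that `min` returns the first minimum it finds, whereas the `<=` comparison in the nested loop keeps the last one, so the two approaches can differ when several pairs tie.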

III. processEmail.m

The task is to find each word of the email and its index in the vocabulary list.

```matlab
function word_indices = processEmail(email_contents)
%PROCESSEMAIL preprocesses the body of an email and
%returns a list of word_indices
%   word_indices = PROCESSEMAIL(email_contents) preprocesses
%   the body of an email and returns a list of indices of the
%   words contained in the email.
%

% Load Vocabulary
vocabList = getVocabList();

% Init return value
word_indices = [];

% ========================== Preprocess Email ===========================

% Find the Headers ( \n\n and remove )
% Uncomment the following lines if you are working with raw emails with the
% full headers

% hdrstart = strfind(email_contents, ([char(10) char(10)]));
% email_contents = email_contents(hdrstart(1):end);

% Lower case
email_contents = lower(email_contents);

% Strip all HTML
% Looks for any expression that starts with < and ends with >, does not
% have any < or > inside the tag, and replaces it with a space
email_contents = regexprep(email_contents, '<[^<>]+>', ' ');

% Handle Numbers
% Look for one or more characters between 0-9
email_contents = regexprep(email_contents, '[0-9]+', 'number');

% Handle URLS
% Look for strings starting with http:// or https://
email_contents = regexprep(email_contents, ...
                           '(http|https)://[^\s]*', 'httpaddr');

% Handle Email Addresses
% Look for strings with @ in the middle
email_contents = regexprep(email_contents, '[^\s]+@[^\s]+', 'emailaddr');

% Handle $ sign
email_contents = regexprep(email_contents, '[$]+', 'dollar');

% ========================== Tokenize Email ===========================

% Output the email to screen as well
fprintf('\n==== Processed Email ====\n\n');

% Process file
l = 0;

while ~isempty(email_contents)

    % Tokenize and also get rid of any punctuation
    [str, email_contents] = ...
       strtok(email_contents, ...
              [' @$/#.-:&*+=[]?!(){},''">_<;%' char(10) char(13)]);

    % Remove any non alphanumeric characters
    str = regexprep(str, '[^a-zA-Z0-9]', '');

    % Stem the word
    % (the porterStemmer sometimes has issues, so we use a try catch block)
    try
        str = porterStemmer(strtrim(str));
    catch
        str = '';
        continue;
    end

    % Skip the word if it is too short
    if length(str) < 1
       continue;
    end

    % Look up the word in the dictionary and add to word_indices if
    % found
    % ====================== YOUR CODE HERE ======================
    % Instructions: Fill in this function to add the index of str to
    %               word_indices if it is in the vocabulary. At this point
    %               of the code, you have a stemmed word from the email in
    %               the variable str. You should look up str in the
    %               vocabulary list (vocabList). If a match exists, you
    %               should add the index of the word to the word_indices
    %               vector. Concretely, if str = 'action', then you should
    %               look up the vocabulary list to find where in vocabList
    %               'action' appears. For example, if vocabList{18} =
    %               'action', then, you should add 18 to the word_indices
    %               vector (e.g., word_indices = [word_indices ; 18]; ).
    %
    % Note: vocabList{idx} returns the word with index idx in the
    %       vocabulary list.
    %
    % Note: You can use strcmp(str1, str2) to compare two strings (str1 and
    %       str2). It will return 1 only if the two strings are equivalent.
    %

    % Scan the whole vocabulary (1899 entries in this exercise) and stop
    % at the first match
    for idx = 1:length(vocabList)
        if strcmp(str, vocabList{idx})
            word_indices = [word_indices ; idx];
            break;
        end
    end

    % =============================================================

    % Print to screen, ensuring that the output lines are not too long
    if (l + length(str) + 1) > 78
        fprintf('\n');
        l = 0;
    end
    fprintf('%s ', str);
    l = l + length(str) + 1;

end

% Print footer
fprintf('\n\n=========================\n');

end
```

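The assignment only asks for the lookup that finds the corresponding index of each word; the preprocessing and tokenizing code is already provided.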
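The lookup step can be tried in isolation. The following is a minimal sketch, assuming a tiny made-up vocabulary and token list (both hypothetical; the real list comes from `getVocabList()`). It relies on `strcmp` accepting a cell array, which compares the token against every entry at once.

```matlab
% Tiny illustrative vocabulary (hypothetical; the real list has 1899 entries).
vocabList = {'about', 'action', 'email', 'number', 'price'};

% Stemmed tokens as they might come out of the tokenizer loop.
tokens = {'email', 'price', 'unknownword', 'action'};

word_indices = [];
for t = 1:numel(tokens)
    % strcmp returns a logical mask over the vocabulary; find turns the
    % mask into the index of the first match (empty if there is none).
    idx = find(strcmp(tokens{t}, vocabList), 1);
    if ~isempty(idx)
        word_indices = [word_indices; idx];
    end
end

disp(word_indices');   % indices 3, 5 and 2; 'unknownword' is skipped
```

Words that are not in the vocabulary contribute nothing, which is the same behaviour as the explicit loop over `vocabList`.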

IV. emailFeatures.m

The task is to match the words extracted from the email against the vocabulary list: an entry is set to 1 if the corresponding vocabulary word appears in the email and to 0 otherwise. The function therefore returns an n-dimensional vector, where n = 1899 is the size of the vocabulary.

```matlab
function x = emailFeatures(word_indices)
%EMAILFEATURES takes in a word_indices vector and produces a feature vector
%from the word indices
%   x = EMAILFEATURES(word_indices) takes in a word_indices vector and
%   produces a feature vector from the word indices.

% Total number of words in the dictionary
n = 1899;

% You need to return the following variables correctly.
x = zeros(n, 1);

% ====================== YOUR CODE HERE ======================
% Instructions: Fill in this function to return a feature vector for the
%               given email (word_indices). To help make it easier to
%               process the emails, we have already pre-processed each
%               email and converted each word in the email into an index in
%               a fixed dictionary (of 1899 words). The variable
%               word_indices contains the list of indices of the words
%               which occur in one email.
%
%               Concretely, if an email has the text:
%
%                  The quick brown fox jumped over the lazy dog.
%
%               Then, the word_indices vector for this text might look
%               like:
%
%                   60  100   33   44   10     53  60  58   5
%
%               where, we have mapped each word onto a number, for example:
%
%                   the   -- 60
%                   quick -- 100
%                   ...
%
%              (note: the above numbers are just an example and are not the
%               actual mappings).
%
%              Your task is take one such word_indices vector and construct
%              a binary feature vector that indicates whether a particular
%              word occurs in the email. That is, x(i) = 1 when word i
%              is present in the email. Concretely, if the word 'the' (say,
%              index 60) appears in the email, then x(60) = 1. The feature
%              vector should look like:
%
%              x = [ 0 0 0 0 1 0 0 0 ... 0 0 0 0 1 ... 0 0 0 1 0 ..];
%
%

% Mark every vocabulary index that occurs in the email
for i = 1:length(word_indices)
    x(word_indices(i)) = 1;
end

% =========================================================================

end
```

For a concrete illustration, see the example given in the code comments.
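The same marking step can be written with a single indexed assignment. The sketch below uses a made-up dictionary size of 10 and hypothetical indices so that the result is easy to inspect.

```matlab
n = 10;                        % small dictionary size, for illustration only
word_indices = [3; 7; 3; 9];   % hypothetical indices; 3 appears twice

x = zeros(n, 1);
x(word_indices) = 1;           % repeated indices just set the same entry again

disp(x');                      % ones at positions 3, 7 and 9
```

Because the feature is binary, a word that occurs several times in the email still yields a single 1 in the vector.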
