Tracking Down and Analyzing a Spark Memory Leak

2018-01-11 10:15

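The line of analysis in this article is very clear, so it is reposted here as a case study for learning how to analyze Spark memory leaks. (See the reference at the end of the article for the original.)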

[Failure symptoms]

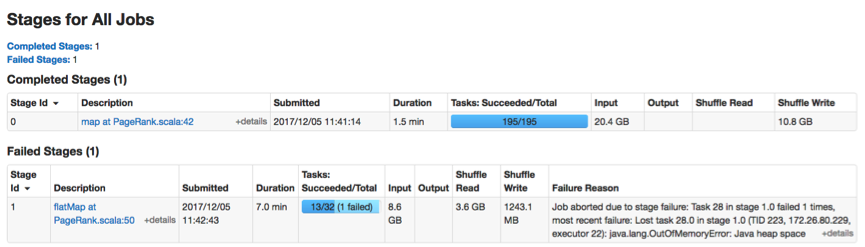

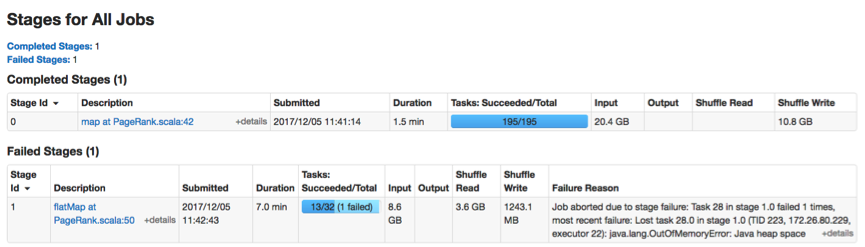

This application has a map stage and many iterative reduce stages. An OOM error occurs in a reduce task (Task-28) as follows.

[OOM root cause identification]

Each executor has 1 CPU core and 6.5GB memory, so it only runs one task at a time. After analyzing the application dataflow, error log, heap dump, and source code, I found the following steps lead to the OOM error.

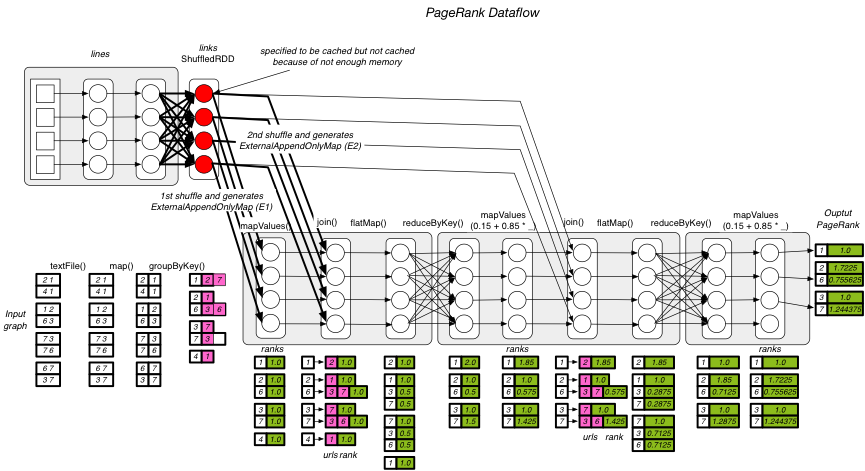

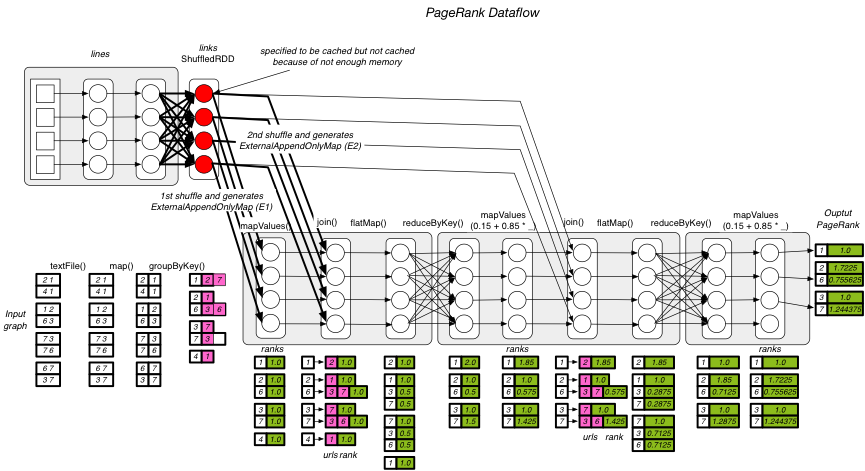

=> The MemoryManager found that there is not enough memory to cache the links:ShuffledRDD (rdd-5-28, red circles in the dataflow figure).

=> The task needs to shuffle twice (1st shuffle and 2nd shuffle in the dataflow figure).

=> The task needs to generate two ExternalAppendOnlyMaps (E1 for the 1st shuffle and E2 for the 2nd shuffle) in sequence.

=> The 1st shuffle begins and ends. E1 aggregates all the shuffled data of the 1st shuffle and reaches 3.3 GB.

=> The 2nd shuffle begins. E2 starts aggregating the shuffled data of the 2nd shuffle and finds that there is not enough memory left. This triggers the memory contention.

=> To handle the memory contention, the TaskMemoryManager releases E1 (spills it onto disk) and assumes that the 3.3GB space is free now.

=> E2 continues to aggregate the shuffled records of the 2nd shuffle. However, E2 encounters an OOM error while shuffling.
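The steps above can be mimicked with a toy bookkeeping model. This is a minimal Python sketch, not Spark code: `Consumer`, `ToyTaskMemoryManager`, and the 4000 MB budget are hypothetical names and values chosen for illustration. Only the sizes of E1 (3.3 GB) and E2 (1.6 GB, from the heap dump) come from the report. The point it tries to show is that the manager only updates a ledger; it has no way to check whether the spilled data has really left the heap.

```python
class Consumer:
    """Hypothetical stand-in for a memory consumer such as ExternalAppendOnlyMap."""

    def __init__(self, name):
        self.name = name
        self.used_mb = 0  # memory the manager has granted to this consumer

    def spill(self):
        """Pretend to write the data to disk and report how much was released."""
        freed, self.used_mb = self.used_mb, 0
        return freed


class ToyTaskMemoryManager:
    """Tracks granted memory only; it cannot see what the heap really holds."""

    def __init__(self, budget_mb):
        self.budget_mb = budget_mb  # assumed value, not taken from the report
        self.consumers = []

    def granted_mb(self):
        return sum(c.used_mb for c in self.consumers)

    def acquire(self, consumer, mb):
        if consumer not in self.consumers:
            self.consumers.append(consumer)
        # Memory contention: ask other consumers to spill until the request fits.
        for other in self.consumers:
            if self.granted_mb() + mb <= self.budget_mb:
                break
            if other is not consumer and other.used_mb > 0:
                other.spill()
        if self.granted_mb() + mb > self.budget_mb:
            return 0  # request cannot be satisfied even after spilling
        consumer.used_mb += mb
        return mb


manager = ToyTaskMemoryManager(budget_mb=4000)
e1 = Consumer("E1")
e2 = Consumer("E2")
manager.acquire(e1, 3300)            # 1st shuffle: E1 grows to 3.3 GB
got = manager.acquire(e2, 1600)      # 2nd shuffle: forces E1 to spill
print(e1.used_mb, e2.used_mb, manager.granted_mb())
```

After the second `acquire`, the ledger charges nothing to E1. If E1's data is still reachable on the heap, the real footprint is the sum of both maps, which is the gap between assumed and actual usage that the steps describe.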

[Guess]

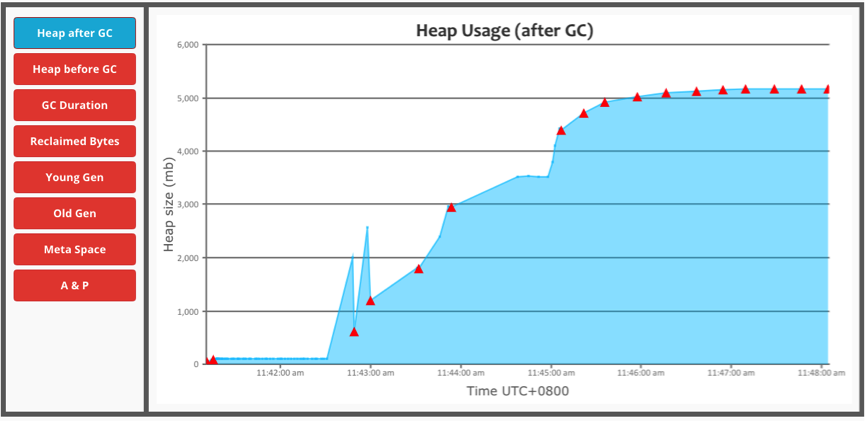

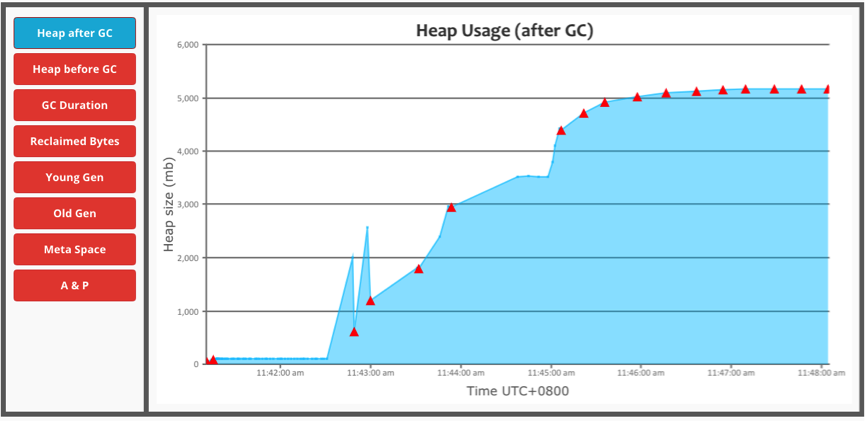

The task memory usage below shows no drop in memory usage after the spill. So, the cause may be that the 3.3GB ExternalAppendOnlyMap (E1) is not actually released by the TaskMemoryManager.

[Root cause]

After analyzing the heap dump, I found the guess is right (the 3.3GB ExternalAppendOnlyMap is actually not released). The 1.6GB object is ExternalAppendOnlyMap (E2).

[Question]

Why is the released ExternalAppendOnlyMap still in memory?

The source code of ExternalAppendOnlyMap shows that the currentMap (AppendOnlyMap) has been set to null when the spill action is finished.

[Root cause in the source code]

I further analyzed the reference chain of the unreleased ExternalAppendOnlyMap. The reference chain shows that the 3.3GB ExternalAppendOnlyMap is still referenced by the upstream/readingIterator and, further up the chain, by the TaskMemoryManager, as follows. So, the root cause in the source code is that the ExternalAppendOnlyMap is still referenced by other iterators (setting the currentMap to null is not enough).
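The effect of a lingering iterator reference can be reproduced in a few lines. The following is a minimal Python sketch under stated assumptions: `AppendOnlyMap`, `MapIterator`, and `ExternalMap` are simplified stand-ins, not the Spark classes, and `force_spill` only imitates the behavior described above (clearing the current map). A weak reference serves as a probe to check whether the map can be garbage collected.

```python
import gc
import weakref


class AppendOnlyMap:
    """Stand-in for the large in-memory map (3.3 GB in the report)."""

    def __init__(self, records):
        self.records = records


class MapIterator:
    """Stand-in for the upstream iterator; it keeps a strong reference to the map."""

    def __init__(self, backing_map):
        self.backing_map = backing_map


class ExternalMap:
    """Simplified model of a spillable map that hands out an iterator."""

    def __init__(self, records):
        self.current_map = AppendOnlyMap(records)
        self.reading_iterator = None

    def iterator(self):
        self.reading_iterator = MapIterator(self.current_map)
        return self.reading_iterator

    def force_spill(self):
        # Imitates the described behavior: only current_map is cleared.
        self.current_map = None


e1 = ExternalMap(list(range(1000)))
e1.iterator()
probe = weakref.ref(e1.current_map)  # does not keep the map alive by itself
e1.force_spill()
gc.collect()

leaked = probe() is not None
print("map still reachable after spill:", leaked)
```

The probe still resolves after the spill because `reading_iterator.backing_map` holds the map, which matches the shape of the reference chain seen in the heap dump.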

[Potential solution]

Set the upstream/readingIterator to null after the forceSpill() action. I will try this solution in the next few days.
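The proposed fix can be illustrated in the same spirit. This is a hedged Python sketch, not the actual Spark patch: `InMemoryMap` and `SpillableIterator` are hypothetical, and the "disk" is just a tuple copy of the records. It shows that once the iterator drops its `upstream` reference during the spill, nothing keeps the in-memory map alive.

```python
import gc
import weakref


class InMemoryMap:
    """Stand-in for the in-memory map that should be freed after a spill."""

    def __init__(self, records):
        self.records = records


class SpillableIterator:
    """Hypothetical iterator that reads from memory until it is spilled."""

    def __init__(self, in_memory_map):
        self.upstream = in_memory_map  # strong reference to the in-memory map
        self.on_disk = None

    def spill(self):
        # Copy the records out (a tuple stands in for a spill file on disk) ...
        self.on_disk = tuple(self.upstream.records)
        # ... then drop the reference so the map becomes collectable.
        self.upstream = None


it = SpillableIterator(InMemoryMap([1, 2, 3]))
probe = weakref.ref(it.upstream)  # probe only; does not keep the map alive
it.spill()
gc.collect()

released = probe() is None
print("map released after spill:", released)
```

The records remain available through `on_disk`, so dropping `upstream` loses no data in this model; whether the same holds for every code path in Spark is something the real fix would need to verify.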

[References]

[1] PageRank source code. https://github.com/JerryLead/SparkGC/blob/master/src/main/scala/applications/graph/PageRank.scala

[2] Task execution log. https://github.com/JerryLead/Misc/blob/master/OOM-TasksMemoryManager/log/TaskExecutionLog.txt

Reposted from SPARK-22713, by JerryLead (Xu Lijie).

[Abstract]

I recently encountered an OOM error in a PageRank application (org.apache.spark.examples.SparkPageRank). After profiling the application, I found that the OOM error is related to memory contention in the shuffle spill phase. Here, memory contention means that a task tries to release some old memory consumers from memory to make room for new memory consumers. After analyzing the OOM heap dump, I found that the root cause is a memory leak in TaskMemoryManager. Since memory contention is common in the shuffle phase, this is a critical bug/defect. In the following sections, I will use the application dataflow, execution log, heap dump, and source code to identify the root cause.

[Application]

This is a PageRank application from Spark’s example library. The following figure shows the application dataflow. The source code is available at [1].
