Repost: Classification performance evaluation (accuracy, recall, F1, ROC, regression, distance)

2017-12-22 17:01


I. Accuracy, recall, F1, confusion matrix, and classification report

Reposted from: http://blog.csdn.net/sinat_26917383/article/details/75199996?locationNum=3&fps=1

1. Accuracy

Method 1: accuracy_score

    # Accuracy
    import numpy as np
    from sklearn.metrics import accuracy_score

    y_pred = [0, 2, 1, 3, 9, 9, 8, 5, 8]
    y_true = [0, 1, 2, 3, 2, 6, 3, 5, 9]

    accuracy_score(y_true, y_pred)
    Out[127]: 0.33333333333333331

    # normalize=False returns the number of correctly classified samples instead of the fraction
    accuracy_score(y_true, y_pred, normalize=False)
    Out[128]: 3

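Method 2: the metrics module

Macro-averaging is often considered more reasonable than micro-averaging, but micro-averaging is not useless; which one to use depends on how the samples are distributed across classes. Macro-averaging weights every class equally, so rare classes count as much as frequent ones, while micro-averaging weights every sample equally, so frequent classes dominate.

Macro-averaging first computes the metric for each class and then takes the arithmetic mean over all classes.

Micro-averaging pools every instance in the dataset, regardless of class, into one global confusion matrix and then computes the metric from it. (Source: the article "谈谈评价指标中的宏平均和微平均", a discussion of macro- and micro-averaging in evaluation metrics.)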

    from sklearn import metrics

    metrics.precision_score(y_true, y_pred, average='micro')  # micro-averaged precision
    Out[130]: 0.33333333333333331

    metrics.precision_score(y_true, y_pred, average='macro')  # macro-averaged precision
    Out[131]: 0.375

    # macro-averaged precision restricted to the given labels
    metrics.precision_score(y_true, y_pred, labels=[0, 1, 2, 3], average='macro')
    Out[133]: 0.5

Besides the default 'binary', the average parameter accepts five values: None, 'micro', 'macro', 'weighted', 'samples'.
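To make the difference between the two averages concrete, here is a minimal sketch that recomputes both precisions by hand with NumPy, using the same y_true and y_pred as above. The helper names (tp, predicted, per_class) are illustrative only. The results should agree with Out[130] and Out[131].

```python
import numpy as np

y_pred = np.array([0, 2, 1, 3, 9, 9, 8, 5, 8])
y_true = np.array([0, 1, 2, 3, 2, 6, 3, 5, 9])

# All labels that occur in either array.
labels = np.unique(np.concatenate([y_true, y_pred]))

# Per-class true positives and number of times each class was predicted.
tp = np.array([np.sum((y_pred == c) & (y_true == c)) for c in labels])
predicted = np.array([np.sum(y_pred == c) for c in labels])

# Macro: compute precision per class, then average the classes.
# A class that is never predicted is counted as precision 0.
per_class = np.divide(tp, predicted, out=np.zeros(len(labels)), where=predicted > 0)
macro_precision = per_class.mean()

# Micro: pool the counts over all classes first, then divide once.
micro_precision = tp.sum() / predicted.sum()

print(macro_precision, micro_precision)
```

For single-label multiclass data, the micro-averaged precision is the same number as the accuracy, because every prediction is counted exactly once.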

2. Recall

    metrics.recall_score(y_true, y_pred, average='micro')
    Out[134]: 0.33333333333333331

    metrics.recall_score(y_true, y_pred, average='macro')
    Out[135]: 0.3125

3. F1

    metrics.f1_score(y_true, y_pred, average='weighted')
    Out[136]: 0.37037037037037035
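The 'weighted' average can be reproduced by hand: compute F1 for each class, then average with each class weighted by its support (the number of true samples of that class). The sketch below assumes the same y_true and y_pred as in the accuracy example; the variable names are illustrative.

```python
import numpy as np

y_pred = np.array([0, 2, 1, 3, 9, 9, 8, 5, 8])
y_true = np.array([0, 1, 2, 3, 2, 6, 3, 5, 9])
labels = np.unique(np.concatenate([y_true, y_pred]))

f1_per_class = []
support = []
for c in labels:
    tp = np.sum((y_pred == c) & (y_true == c))
    fp = np.sum((y_pred == c) & (y_true != c))
    fn = np.sum((y_pred != c) & (y_true == c))
    # F1 = 2*TP / (2*TP + FP + FN), taken as 0 when the denominator is 0.
    denom = 2 * tp + fp + fn
    f1_per_class.append(2 * tp / denom if denom > 0 else 0.0)
    support.append(np.sum(y_true == c))

# Weighted average: each class contributes in proportion to its support.
weighted_f1 = np.average(f1_per_class, weights=support)
print(weighted_f1)
```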

4. Confusion matrix

    # Confusion matrix
    from sklearn.metrics import confusion_matrix

    confusion_matrix(y_true, y_pred)
    Out[137]:
    array([[1, 0, 0, ..., 0, 0, 0],
           [0, 0, 1, ..., 0, 0, 0],
           [0, 1, 0, ..., 0, 0, 1],
           ...,
           [0, 0, 0, ..., 0, 0, 1],
           [0, 0, 0, ..., 0, 0, 0],
           [0, 0, 0, ..., 0, 1, 0]])

Rows correspond to true labels and columns to predicted labels.
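A small asymmetric example makes the orientation easy to check. The data below is made up for illustration: three samples of class 0, two of which are misclassified as class 1.

```python
from sklearn.metrics import confusion_matrix

y_true = [0, 0, 0, 1]
y_pred = [0, 1, 1, 1]

# cm[i, j] = number of samples whose true label is i and predicted label is j
cm = confusion_matrix(y_true, y_pred, labels=[0, 1])
print(cm)

# Row sums give the number of true samples per class,
# column sums give the number of predictions per class.
true_counts = cm.sum(axis=1)
predicted_counts = cm.sum(axis=0)
```

The two misclassified samples land in row 0, column 1, which confirms that the row index is the true label.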

5. Classification report

    # Classification report: precision / recall / f1-score / averages / support
    from sklearn.metrics import classification_report

    y_true = [0, 1, 2, 2, 0]
    y_pred = [0, 0, 2, 2, 0]
    target_names = ['class 0', 'class 1', 'class 2']
    print(classification_report(y_true, y_pred, target_names=target_names))

The output:

                 precision    recall  f1-score   support

        class 0       0.67      1.00      0.80         2
        class 1       0.00      0.00      0.00         1
        class 2       1.00      1.00      1.00         2

    avg / total       0.67      0.80      0.72         5

The report includes precision, recall, F1-score, their averages, and the support (the number of true samples in each class).
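The "avg / total" row is a support-weighted average of the per-class rows. The sketch below checks this with precision_recall_fscore_support, using the same y_true and y_pred as the report. Class 1 is never predicted, so scikit-learn reports its precision as 0 and may emit a warning.

```python
import numpy as np
from sklearn.metrics import precision_recall_fscore_support

y_true = [0, 1, 2, 2, 0]
y_pred = [0, 0, 2, 2, 0]

# One value per class, in sorted label order.
precision, recall, f1, support = precision_recall_fscore_support(y_true, y_pred)

# Weight each class by its support to obtain the summary row.
avg_precision = np.average(precision, weights=support)
avg_recall = np.average(recall, weights=support)
avg_f1 = np.average(f1, weights=support)
total_support = support.sum()

print(round(avg_precision, 2), round(avg_recall, 2), round(avg_f1, 2), total_support)
```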

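6. Kappa score

The kappa score is a number between -1 and 1. A score above 0.8 is generally considered good agreement; a score of 0 or lower means no agreement beyond chance (in effect, random labels).

    from sklearn.metrics import cohen_kappa_score

    y_true = [2, 0, 2, 2, 0, 1]
    y_pred = [0, 0, 2, 2, 0, 2]
    cohen_kappa_score(y_true, y_pred)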
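Cohen's kappa compares the observed agreement p_o with the agreement p_e expected by chance: kappa = (p_o - p_e) / (1 - p_e). As a sketch, both quantities can be read off the confusion matrix. The variable names are illustrative and the data is the same as in the kappa example.

```python
import numpy as np
from sklearn.metrics import cohen_kappa_score, confusion_matrix

y_true = [2, 0, 2, 2, 0, 1]
y_pred = [0, 0, 2, 2, 0, 2]

cm = confusion_matrix(y_true, y_pred)
n = cm.sum()

# Observed agreement: fraction of samples on the diagonal.
p_observed = np.trace(cm) / n

# Chance agreement: for each class, (share of true labels) * (share of predictions).
p_expected = np.sum(cm.sum(axis=1) * cm.sum(axis=0)) / n ** 2

kappa = (p_observed - p_expected) / (1 - p_expected)
print(kappa, cohen_kappa_score(y_true, y_pred))
```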

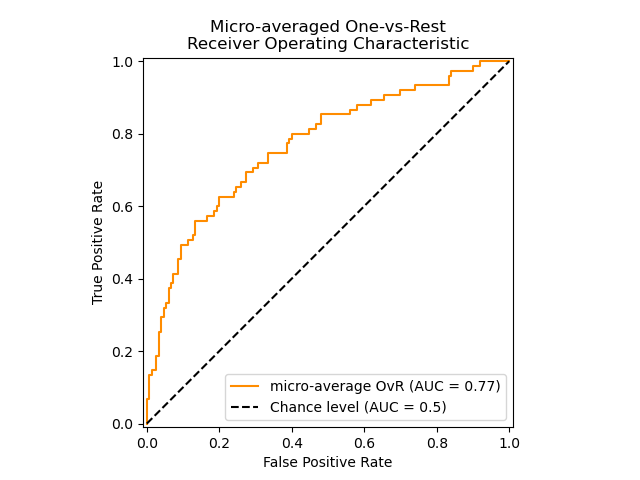

II. ROC

1. Computing the area under the ROC curve (AUC)

    import numpy as np
    from sklearn.metrics import roc_auc_score

    y_true = np.array([0, 0, 1, 1])
    y_scores = np.array([0.1, 0.4, 0.35, 0.8])
    roc_auc_score(y_true, y_scores)
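One way to read the AUC is as the probability that a randomly chosen positive sample receives a higher score than a randomly chosen negative one. The sketch below counts those pairs directly for the same y_true and y_scores; the names pos, neg and pairwise_auc are illustrative.

```python
import numpy as np
from sklearn.metrics import roc_auc_score

y_true = np.array([0, 0, 1, 1])
y_scores = np.array([0.1, 0.4, 0.35, 0.8])

pos = y_scores[y_true == 1]
neg = y_scores[y_true == 0]

# Compare every positive score with every negative score.
# A pair counts as 1 if the positive is ranked higher, and 0.5 on a tie.
diff = pos[:, None] - neg[None, :]
pairwise_auc = (np.sum(diff > 0) + 0.5 * np.sum(diff == 0)) / diff.size

print(pairwise_auc, roc_auc_score(y_true, y_scores))
```

Three of the four positive/negative pairs are ordered correctly here; only the pair (0.35, 0.4) is not.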

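2. ROC curve

    import numpy as np
    from sklearn.metrics import roc_curve

    y = np.array([1, 1, 2, 2])
    scores = np.array([0.1, 0.4, 0.35, 0.8])
    # pos_label=2 marks class 2 as the positive class
    fpr, tpr, thresholds = roc_curve(y, scores, pos_label=2)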
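Each point on the ROC curve comes from one decision threshold. As a rough sketch of what happens under the hood (not scikit-learn's actual implementation), the loop below sweeps the distinct scores from high to low and records the false and true positive rates at each step.

```python
import numpy as np
from sklearn.metrics import auc

y = np.array([1, 1, 2, 2])
scores = np.array([0.1, 0.4, 0.35, 0.8])
positive = (y == 2)  # class 2 is treated as the positive class

# Start at (0, 0): a threshold above every score predicts nothing as positive.
fpr_points, tpr_points = [0.0], [0.0]
for t in sorted(set(scores), reverse=True):
    predicted_positive = scores >= t
    tpr_points.append(np.sum(predicted_positive & positive) / np.sum(positive))
    fpr_points.append(np.sum(predicted_positive & ~positive) / np.sum(~positive))

# Area under the piecewise-linear curve (trapezoidal rule).
area = auc(fpr_points, tpr_points)
print(fpr_points, tpr_points, area)
```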

Here is an example from the official scikit-learn documentation. Only part of the code is shown; for the full code see: Receiver Operating Characteristic (ROC)

    import numpy as np
    import matplotlib.pyplot as plt
    from itertools import cycle

    from sklearn import svm, datasets
    from sklearn.metrics import roc_curve, auc
    from sklearn.model_selection import train_test_split
    from sklearn.preprocessing import label_binarize
    from sklearn.multiclass import OneVsRestClassifier

    # Import some data to play with
    iris = datasets.load_iris()
    X = iris.data
    y = iris.target

    # (Not shown in this excerpt: the part of the official example that trains the
    # classifier and defines n_classes, lw, and the fpr, tpr and roc_auc dicts.)

    # Plotting
    all_fpr = np.unique(np.concatenate([fpr[i] for i in range(n_classes)]))

    # Then interpolate all ROC curves at these points
    mean_tpr = np.zeros_like(all_fpr)
    for i in range(n_classes):
        # np.interp is used in place of the deprecated scipy.interp alias
        mean_tpr += np.interp(all_fpr, fpr[i], tpr[i])

    # Finally average it and compute AUC
    mean_tpr /= n_classes

    fpr["macro"] = all_fpr
    tpr["macro"] = mean_tpr
    roc_auc["macro"] = auc(fpr["macro"], tpr["macro"])

    # Plot all ROC curves
    plt.figure()
    plt.plot(fpr["micro"], tpr["micro"],
             label='micro-average ROC curve (area = {0:0.2f})'
                   ''.format(roc_auc["micro"]),
             color='deeppink', linestyle=':', linewidth=4)

    plt.plot(fpr["macro"], tpr["macro"],
             label='macro-average ROC curve (area = {0:0.2f})'
                   ''.format(roc_auc["macro"]),
             color='navy', linestyle=':', linewidth=4)

    colors = cycle(['aqua', 'darkorange', 'cornflowerblue'])
    for i, color in zip(range(n_classes), colors):
        plt.plot(fpr[i], tpr[i], color=color, lw=lw,
                 label='ROC curve of class {0} (area = {1:0.2f})'
                       ''.format(i, roc_auc[i]))

    plt.plot([0, 1], [0, 1], 'k--', lw=lw)
    plt.xlim([0.0, 1.0])
    plt.ylim([0.0, 1.05])
    plt.xlabel('False Positive Rate')
    plt.ylabel('True Positive Rate')
    plt.title('Some extension of Receiver operating characteristic to multi-class')
    plt.legend(loc="lower right")
    plt.show()
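The plotting code above uses n_classes, lw, fpr, tpr and roc_auc without defining them. The block below is a simplified sketch of a setup that would produce them, assuming a one-vs-rest linear SVM on the iris data. It is not the official example verbatim, and the choices test_size=0.5 and random_state=0 are assumptions made here. It would need to run before the plotting code.

```python
import numpy as np
from sklearn import datasets, svm
from sklearn.metrics import auc, roc_curve
from sklearn.model_selection import train_test_split
from sklearn.multiclass import OneVsRestClassifier
from sklearn.preprocessing import label_binarize

iris = datasets.load_iris()
X = iris.data
# One indicator column per class, so that each column is a binary problem.
y = label_binarize(iris.target, classes=[0, 1, 2])
n_classes = y.shape[1]

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.5, random_state=0)

# Train one binary classifier per class and get a score per (sample, class).
classifier = OneVsRestClassifier(svm.SVC(kernel="linear", random_state=0))
y_score = classifier.fit(X_train, y_train).decision_function(X_test)

# ROC curve and AUC for each class separately.
fpr, tpr, roc_auc = {}, {}, {}
for i in range(n_classes):
    fpr[i], tpr[i], _ = roc_curve(y_test[:, i], y_score[:, i])
    roc_auc[i] = auc(fpr[i], tpr[i])

# Micro-average: pool all (sample, class) decisions into one binary problem.
fpr["micro"], tpr["micro"], _ = roc_curve(y_test.ravel(), y_score.ravel())
roc_auc["micro"] = auc(fpr["micro"], tpr["micro"])

lw = 2  # line width used by the plotting calls
```

The micro-average pools decisions before computing one curve, whereas the macro-average in the plotting code averages the per-class curves, mirroring the micro/macro distinction used for precision and recall.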


III. Distance

1. Hamming distance

    from sklearn.metrics import hamming_loss

    y_pred = [1, 2, 3, 4]
    y_true = [2, 2, 3, 4]
    hamming_loss(y_true, y_pred)
    0.25
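For single-label data such as this, the Hamming loss is simply the fraction of positions where the two label lists disagree. A minimal NumPy check, with an illustrative variable name:

```python
import numpy as np

y_pred = np.array([1, 2, 3, 4])
y_true = np.array([2, 2, 3, 4])

# Fraction of positions where prediction and truth differ (1 of 4 here).
manual_hamming = np.mean(y_true != y_pred)
print(manual_hamming)
```

In this single-label setting the value equals 1 minus the accuracy.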

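2. Jaccard similarity score

    import numpy as np
    # Note: jaccard_similarity_score was deprecated in scikit-learn 0.21 and removed in 0.23
    from sklearn.metrics import jaccard_similarity_score

    y_pred = [0, 2, 1, 3, 4]
    y_true = [0, 1, 2, 3, 4]

    # For multiclass input this score equals the accuracy: 3 of 5 labels match
    jaccard_similarity_score(y_true, y_pred)
    0.6
    jaccard_similarity_score(y_true, y_pred, normalize=False)
    3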
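Newer scikit-learn releases no longer ship jaccard_similarity_score; jaccard_score is its replacement and computes TP / (TP + FP + FN) per class. The sketch below assumes scikit-learn 0.21 or later and reuses the labels from the example above. Note that its values are not the same quantity as the accuracy-like score of the old function.

```python
from sklearn.metrics import jaccard_score

y_pred = [0, 2, 1, 3, 4]
y_true = [0, 1, 2, 3, 4]

# Jaccard index of each class: TP / (TP + FP + FN)
per_class = jaccard_score(y_true, y_pred, average=None)

# Macro: mean of the per-class values. Micro: pool TP, FP, FN over all classes.
macro = jaccard_score(y_true, y_pred, average="macro")
micro = jaccard_score(y_true, y_pred, average="micro")

print(per_class, macro, micro)
```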

IV. Regression

1. Explained variance score

    from sklearn.metrics import explained_variance_score

    y_true = [3, -0.5, 2, 7]
    y_pred = [2.5, 0.0, 2, 8]
    explained_variance_score(y_true, y_pred)

2. Mean absolute error

    from sklearn.metrics import mean_absolute_error

    y_true = [3, -0.5, 2, 7]
    y_pred = [2.5, 0.0, 2, 8]
    mean_absolute_error(y_true, y_pred)

3. Mean squared error

    from sklearn.metrics import mean_squared_error

    y_true = [3, -0.5, 2, 7]
    y_pred = [2.5, 0.0, 2, 8]
    mean_squared_error(y_true, y_pred)

4. Median absolute error

    from sklearn.metrics import median_absolute_error

    y_true = [3, -0.5, 2, 7]
    y_pred = [2.5, 0.0, 2, 8]
    median_absolute_error(y_true, y_pred)

5. R² score (coefficient of determination)

    from sklearn.metrics import r2_score

    y_true = [3, -0.5, 2, 7]
    y_pred = [2.5, 0.0, 2, 8]
    r2_score(y_true, y_pred)
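All five regression metrics are simple functions of the residuals y_true - y_pred. The NumPy sketch below writes out the standard formulas for the same data; the variable names are illustrative.

```python
import numpy as np

y_true = np.array([3, -0.5, 2, 7])
y_pred = np.array([2.5, 0.0, 2, 8])
residual = y_true - y_pred

# Mean and median of the absolute residuals.
mae = np.mean(np.abs(residual))
median_ae = np.median(np.abs(residual))

# Mean of the squared residuals.
mse = np.mean(residual ** 2)

# R^2: 1 - (residual sum of squares) / (total sum of squares around the mean).
r2 = 1 - np.sum(residual ** 2) / np.sum((y_true - y_true.mean()) ** 2)

# Explained variance: like R^2, but uses the variance of the residuals,
# so a constant offset in the predictions is not penalized.
explained_variance = 1 - np.var(residual) / np.var(y_true)

print(mae, median_ae, mse, r2, explained_variance)
```

The explained variance and R² differ only when the residuals have a nonzero mean, as they do here (mean residual -0.25).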

References:

Model evaluation in sklearn (sklearn中的模型评估)
