Code implementation of the article 【Android Video Encoding: H263, MP4V, H264】

2017-05-19 16:26


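Recently many readers have asked me where the SPS and PPS are set. In fact, this article is just a simple implementation of my previous one.

For the details, please see the previous article:

http://blog.csdn.net/zblue78/archive/2010/12/15/6078040.aspx

I only wrote the H.264 version, and only tested it on an HTC G7. Sorry about that!

CSDN's resource downloads are slow, so I'll just paste the code.

Download: http://download.csdn.net/source/2918751

You are welcome to visit my blog often: http://blog.csdn.net/zblue78/

Let's discuss it together. Without further ado, here is the code.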
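The question is about SPS and PPS, so it helps to see what the hard-coded h264sps bytes in VideoCameraActivity actually encode. The code below is a minimal sketch. SpsHeader is a hypothetical helper and is not part of the author's project. It reads only the fixed-position fields at the front of an SPS: the NAL header byte, profile_idc and level_idc. Everything after level_idc is Exp-Golomb coded and is not parsed here.

```java
// Sketch (hypothetical helper): decode the fixed-position fields at the start of an H.264 SPS NAL unit.
final class SpsHeader {
    // nal_unit_type is the low 5 bits of the NAL header byte (7 = SPS, 8 = PPS, 5 = IDR slice)
    static int nalUnitType(byte[] nal) {
        return nal[0] & 0x1F;
    }

    // nal_ref_idc is the 2 bits above nal_unit_type
    static int nalRefIdc(byte[] nal) {
        return (nal[0] >> 5) & 0x03;
    }

    // In an SPS, the byte right after the NAL header is profile_idc (66 = Baseline)
    static int profileIdc(byte[] sps) {
        return sps[1] & 0xFF;
    }

    // level_idc follows the constraint-flags byte; it is ten times the level number
    static int levelIdc(byte[] sps) {
        return sps[3] & 0xFF;
    }

    public static void main(String[] args) {
        // The SPS bytes hard-coded in VideoCameraActivity
        byte[] sps = {0x67, 0x42, 0x00, 0x0C, (byte) 0x96, 0x54, 0x0B, 0x04, (byte) 0xA2};
        System.out.println("type=" + nalUnitType(sps) + " ref_idc=" + nalRefIdc(sps)
                + " profile=" + profileIdc(sps) + " level=" + levelIdc(sps));
    }
}
```

For the author's bytes this gives:

- nal_unit_type 7 (SPS)
- profile_idc 66 (Baseline)
- level_idc 12 (level 1.2)

The hard-coded PPS starts with 0x68, which is nal_unit_type 8.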

AndroidManifest.xml

```xml
<?xml version="1.0" encoding="utf-8"?>
<!-- The namespace prefix must be lowercase "android"; XML prefixes are case-sensitive -->
<manifest xmlns:android="http://schemas.android.com/apk/res/android"
    package="com.zjzhang"
    android:versionCode="1"
    android:versionName="1.0">

    <application android:icon="@drawable/icon" android:label="@string/app_name" android:debuggable="true">
        <activity android:name=".VideoCameraActivity"
            android:screenOrientation="landscape"
            android:label="@string/app_name">
            <intent-filter>
                <action android:name="android.intent.action.MAIN" />
                <category android:name="android.intent.category.LAUNCHER" />
            </intent-filter>
        </activity>
    </application>

    <uses-sdk android:minSdkVersion="3" />

    <uses-permission android:name="android.permission.INTERNET"/>
    <uses-permission android:name="android.permission.CAMERA"/>
    <!-- RECORD_VIDEO is not defined in android.Manifest.permission, so this entry has no effect -->
    <uses-permission android:name="android.permission.RECORD_VIDEO"/>
    <uses-permission android:name="android.permission.RECORD_AUDIO"/>
    <uses-permission android:name="android.permission.WRITE_EXTERNAL_STORAGE"/>
</manifest>
```

main.xml

```xml
<?xml version="1.0" encoding="utf-8"?>
<LinearLayout xmlns:android="http://schemas.android.com/apk/res/android"
    android:orientation="vertical"
    android:layout_width="fill_parent"
    android:layout_height="fill_parent">

    <!-- layout_alignParentRight/Top are RelativeLayout attributes; a LinearLayout ignores them -->
    <SurfaceView
        android:id="@+id/surface_camera"
        android:layout_width="176px"
        android:layout_height="144px"
        android:layout_alignParentRight="true"
        android:layout_alignParentTop="true" />
</LinearLayout>
```

VideoCameraActivity.java

```java
package com.zjzhang;

import java.io.DataInputStream;
import java.io.EOFException;
import java.io.File;
import java.io.IOException;
import java.io.InputStream;
import java.io.RandomAccessFile;

import android.app.Activity;
import android.content.Context;
import android.graphics.PixelFormat;
import android.media.MediaRecorder;
import android.net.LocalServerSocket;
import android.net.LocalSocket;
import android.net.LocalSocketAddress;
import android.os.Bundle;
import android.util.Log;
import android.view.SurfaceHolder;
import android.view.SurfaceView;
import android.view.View;
import android.view.Window;
import android.view.WindowManager;

public class VideoCameraActivity extends Activity implements
        SurfaceHolder.Callback, MediaRecorder.OnErrorListener,
        MediaRecorder.OnInfoListener {

    private static final int mVideoEncoder = MediaRecorder.VideoEncoder.H264;
    private static final String TAG = "VideoCamera";

    // MediaRecorder writes into 'sender'; the worker thread reads the same bytes from 'receiver'
    LocalSocket receiver, sender;
    LocalServerSocket lss;
    private MediaRecorder mMediaRecorder = null;
    boolean mMediaRecorderRecording = false;
    private SurfaceView mSurfaceView = null;
    private SurfaceHolder mSurfaceHolder = null;
    Thread t;
    Context mContext = this;
    RandomAccessFile raf = null;

    @Override
    public void onCreate(Bundle savedInstanceState) {
        super.onCreate(savedInstanceState);
        getWindow().setFormat(PixelFormat.TRANSLUCENT);
        requestWindowFeature(Window.FEATURE_NO_TITLE);
        getWindow().setFlags(WindowManager.LayoutParams.FLAG_FULLSCREEN,
                WindowManager.LayoutParams.FLAG_FULLSCREEN);
        setContentView(R.layout.main);

        mSurfaceView = (SurfaceView) this.findViewById(R.id.surface_camera);
        SurfaceHolder holder = mSurfaceView.getHolder();
        holder.addCallback(this);
        holder.setType(SurfaceHolder.SURFACE_TYPE_PUSH_BUFFERS);
        mSurfaceView.setVisibility(View.VISIBLE);

        // Build a connected local socket pair so the encoder output can be read back in-process
        receiver = new LocalSocket();
        try {
            lss = new LocalServerSocket("VideoCamera");
            receiver.connect(new LocalSocketAddress("VideoCamera"));
            receiver.setReceiveBufferSize(500000);
            receiver.setSendBufferSize(500000);
            sender = lss.accept();
            sender.setReceiveBufferSize(500000);
            sender.setSendBufferSize(500000);
        } catch (IOException e) {
            finish();
            return;
        }
    }

    @Override
    public void onStart() {
        super.onStart();
    }

    @Override
    public void onResume() {
        super.onResume();
    }

    @Override
    public void onPause() {
        super.onPause();
        if (mMediaRecorderRecording) {
            stopVideoRecording();
            try {
                lss.close();
                receiver.close();
                sender.close();
            } catch (IOException e) {
                e.printStackTrace();
            }
        }
        finish();
    }

    private void stopVideoRecording() {
        Log.d(TAG, "stopVideoRecording");
        if (mMediaRecorderRecording || mMediaRecorder != null) {
            if (t != null)
                t.interrupt();
            try {
                if (raf != null) // the output file may never have been opened
                    raf.close();
            } catch (IOException e) {
                e.printStackTrace();
            }
            releaseMediaRecorder();
        }
    }

    private void startVideoRecording() {
        Log.d(TAG, "startVideoRecording");
        (t = new Thread() {
            public void run() {
                int frame_size = 1024;
                byte[] buffer = new byte[1024 * 64];
                int num, number = 0;
                InputStream fis = null;
                try {
                    fis = receiver.getInputStream();
                } catch (IOException e1) {
                    return;
                }
                // Let the first (throw-away) recording session produce some output
                try {
                    Thread.sleep(500);
                } catch (InterruptedException e1) {
                    e1.printStackTrace();
                }
                number = 0;
                releaseMediaRecorder();
                // For H264 or MPEG_4_SP, the stream carrying the configuration parameters has to be found here:
                //   avcC box: H.264 configuration parameters
                //   esds box: MPEG_4_SP configuration parameters
                // As long as the resolution and the other settings stay the same, these parameters
                // do not change, so they only need to be determined once, on the first run.
                while (true) {
                    try {
                        // Never read past the end of the 64 KB buffer
                        int toRead = Math.min(frame_size, buffer.length - number);
                        if (toRead <= 0)
                            break;
                        num = fis.read(buffer, number, toRead);
                        if (num <= 0) // -1 means the stream has ended
                            break;
                        number += num;
                        if (num < frame_size) {
                            break;
                        }
                    } catch (IOException e) {
                        break;
                    }
                }
                // Restart capture to obtain the actual video stream
                initializeVideo();
                number = 0;
                DataInputStream dis = new DataInputStream(fis);
                // Skip the leading 32-byte header (the size observed on the author's HTC G7);
                // readFully guarantees all 32 bytes are consumed, unlike read()
                try {
                    dis.readFully(buffer, 0, 32);
                } catch (IOException e1) {
                    e1.printStackTrace();
                    return;
                }
                try {
                    File file = new File("/sdcard/stream.h264");
                    if (file.exists())
                        file.delete();
                    raf = new RandomAccessFile(file, "rw");
                } catch (Exception ex) {
                    Log.e(TAG, ex.toString());
                    return; // without an output file there is nothing to write to
                }
                // These parameters match my current video settings; if the settings change they
                // must be determined again. I don't know whether they are the same on other
                // devices; I only had an HTC G7 to test with.
                byte[] h264sps = {0x67, 0x42, 0x00, 0x0C, (byte) 0x96, 0x54, 0x0B, 0x04, (byte) 0xA2};
                byte[] h264pps = {0x68, (byte) 0xCE, 0x38, (byte) 0x80};
                byte[] h264head = {0, 0, 0, 1}; // Annex B start code
                try {
                    raf.write(h264head);
                    raf.write(h264sps);
                    raf.write(h264head);
                    raf.write(h264pps);
                } catch (IOException e1) {
                    e1.printStackTrace();
                }
                while (true) {
                    try {
                        // Read the 4-byte big-endian length of the next NAL unit
                        int h264length = dis.readInt();
                        // Added guard: a length outside this range means the reader has lost sync
                        // with the length prefixes (1 MB is an arbitrary bound)
                        if (h264length <= 0 || h264length > 1024 * 1024) {
                            Log.e(TAG, "Unexpected NAL length " + h264length);
                            break;
                        }
                        number = 0;
                        raf.write(h264head);
                        while (number < h264length) {
                            int lost = h264length - number;
                            num = fis.read(buffer, 0, frame_size < lost ? frame_size : lost);
                            if (num < 0)
                                throw new EOFException("stream ended inside a NAL unit");
                            Log.d(TAG, String.format("H264 %d,%d,%d", h264length, number, num));
                            number += num;
                            raf.write(buffer, 0, num);
                        }
                    } catch (IOException e) {
                        break;
                    }
                }
            }
        }).start();
    }

    private boolean initializeVideo() {
        if (mSurfaceHolder == null)
            return false;
        mMediaRecorderRecording = true;
        if (mMediaRecorder == null)
            mMediaRecorder = new MediaRecorder();
        else
            mMediaRecorder.reset();
        mMediaRecorder.setVideoSource(MediaRecorder.VideoSource.CAMERA);
        mMediaRecorder.setOutputFormat(MediaRecorder.OutputFormat.THREE_GPP);
        mMediaRecorder.setVideoFrameRate(20);
        mMediaRecorder.setVideoSize(352, 288);
        mMediaRecorder.setVideoEncoder(mVideoEncoder);
        mMediaRecorder.setPreviewDisplay(mSurfaceHolder.getSurface());
        mMediaRecorder.setMaxDuration(0);
        mMediaRecorder.setMaxFileSize(0);
        // Write the encoder output into the local socket instead of a file
        mMediaRecorder.setOutputFile(sender.getFileDescriptor());
        try {
            mMediaRecorder.setOnInfoListener(this);
            mMediaRecorder.setOnErrorListener(this);
            mMediaRecorder.prepare();
            mMediaRecorder.start();
        } catch (IOException exception) {
            releaseMediaRecorder();
            finish();
            return false;
        }
        return true;
    }

    private void releaseMediaRecorder() {
        Log.v(TAG, "Releasing media recorder.");
        if (mMediaRecorder != null) {
            if (mMediaRecorderRecording) {
                try {
                    mMediaRecorder.setOnErrorListener(null);
                    mMediaRecorder.setOnInfoListener(null);
                    mMediaRecorder.stop();
                } catch (RuntimeException e) {
                    Log.e(TAG, "stop fail: " + e.getMessage());
                }
                mMediaRecorderRecording = false;
            }
            mMediaRecorder.reset();
            mMediaRecorder.release();
            mMediaRecorder = null;
        }
    }

    @Override
    public void surfaceChanged(SurfaceHolder holder, int format, int w, int h) {
        Log.d(TAG, "surfaceChanged");
        mSurfaceHolder = holder;
        if (!mMediaRecorderRecording) {
            initializeVideo();
            startVideoRecording();
        }
    }

    @Override
    public void surfaceCreated(SurfaceHolder holder) {
        Log.d(TAG, "surfaceCreated");
        mSurfaceHolder = holder;
    }

    @Override
    public void surfaceDestroyed(SurfaceHolder holder) {
        Log.d(TAG, "surfaceDestroyed");
        mSurfaceHolder = null;
    }

    @Override
    public void onInfo(MediaRecorder mr, int what, int extra) {
        switch (what) {
        case MediaRecorder.MEDIA_RECORDER_INFO_UNKNOWN:
            Log.d(TAG, "MEDIA_RECORDER_INFO_UNKNOWN");
            break;
        case MediaRecorder.MEDIA_RECORDER_INFO_MAX_DURATION_REACHED:
            Log.d(TAG, "MEDIA_RECORDER_INFO_MAX_DURATION_REACHED");
            break;
        case MediaRecorder.MEDIA_RECORDER_INFO_MAX_FILESIZE_REACHED:
            Log.d(TAG, "MEDIA_RECORDER_INFO_MAX_FILESIZE_REACHED");
            break;
        }
    }

    @Override
    public void onError(MediaRecorder mr, int what, int extra) {
        if (what == MediaRecorder.MEDIA_RECORDER_ERROR_UNKNOWN) {
            Log.d(TAG, "MEDIA_RECORDER_ERROR_UNKNOWN");
            finish();
        }
    }
}
```
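The core of the recording thread is a format conversion. As the readInt() loop assumes, MediaRecorder stores each H.264 NAL unit behind a 4-byte big-endian length. A raw .h264 file instead expects each NAL unit to follow a 00 00 00 01 start code.

The code below is a stand-alone sketch of that loop, separated from MediaRecorder and the sockets so it can be tried on plain byte arrays. Note the following assumptions:

- AnnexBWriter and MAX_NAL_LENGTH are hypothetical names.
- The 4 MiB cap is an arbitrary sanity bound, not something taken from the original code.

```java
import java.io.DataInputStream;
import java.io.EOFException;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;

// Sketch (hypothetical helper): rewrite 4-byte length-prefixed NAL units as an Annex B stream,
// mirroring what the recording thread does with dis.readInt().
final class AnnexBWriter {
    private static final byte[] START_CODE = {0, 0, 0, 1};
    // Arbitrary sanity bound (an assumption): larger "lengths" are treated as corruption
    static final int MAX_NAL_LENGTH = 4 * 1024 * 1024;

    static void convert(InputStream in, OutputStream out, byte[] sps, byte[] pps)
            throws IOException {
        DataInputStream dis = new DataInputStream(in);
        // Parameter sets go first so a decoder can start at the beginning of the file
        out.write(START_CODE);
        out.write(sps);
        out.write(START_CODE);
        out.write(pps);
        byte[] nal = new byte[64 * 1024];
        while (true) {
            int length;
            try {
                length = dis.readInt(); // big-endian length of the next NAL unit
            } catch (EOFException endOfStream) {
                return; // no further complete length prefix: stop
            }
            if (length <= 0 || length > MAX_NAL_LENGTH) {
                throw new IOException("implausible NAL length " + length);
            }
            if (length > nal.length) {
                nal = new byte[length];
            }
            dis.readFully(nal, 0, length); // throws EOFException if the NAL unit is truncated
            out.write(START_CODE);
            out.write(nal, 0, length);
        }
    }
}
```

readFully() either copies each NAL unit completely or throws, so a truncated stream cannot silently produce a short NAL unit. A hand-written read loop has to check for -1 itself to get the same guarantee.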

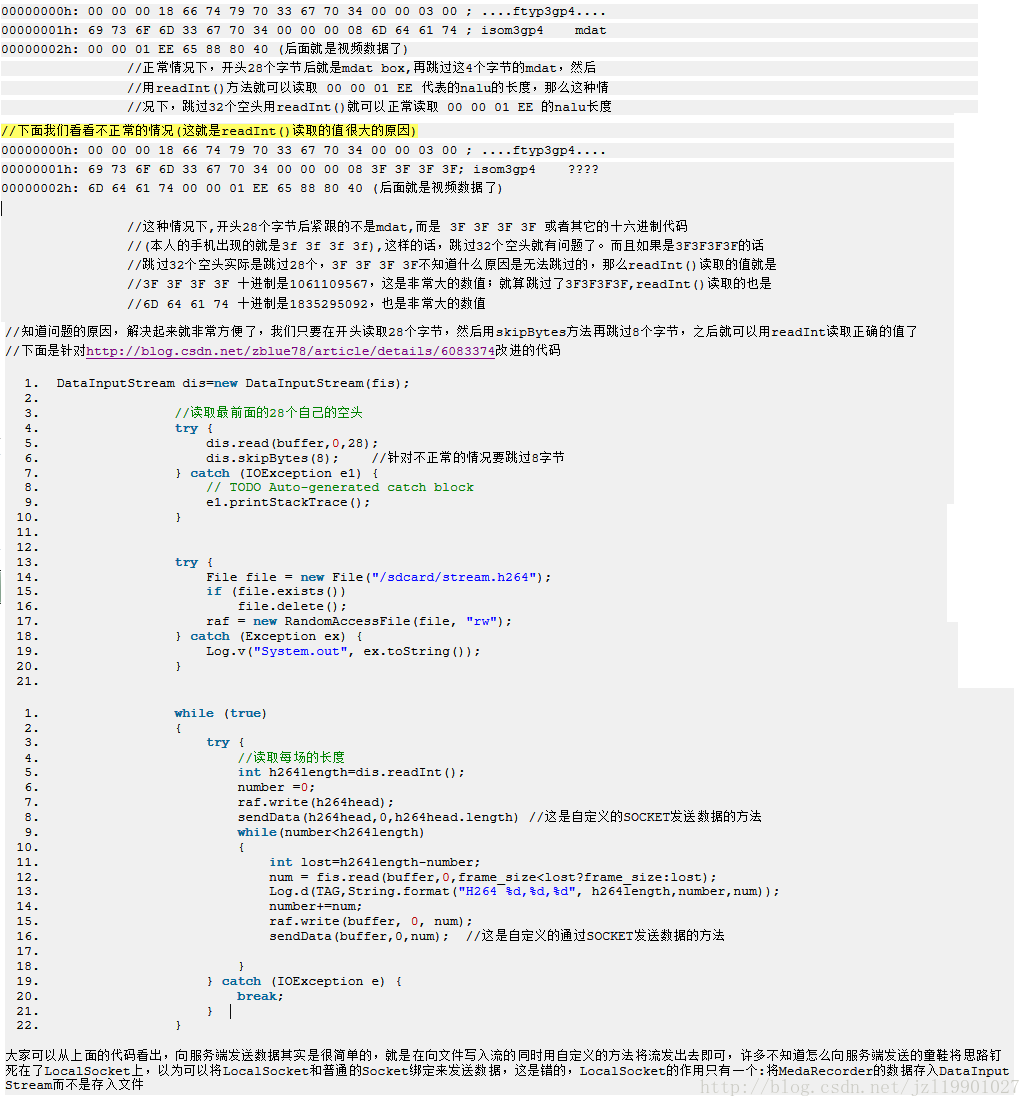

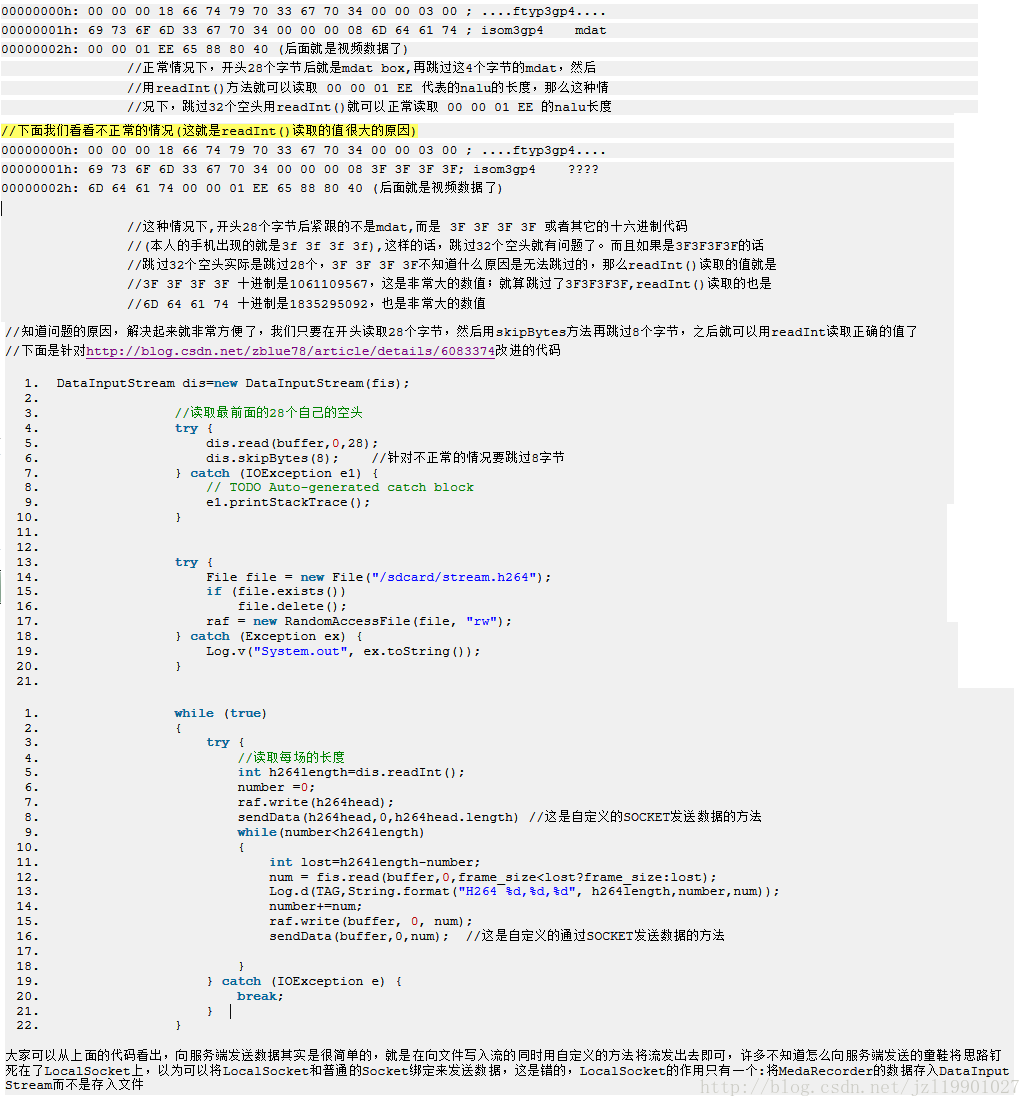
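startVideoRecording() skips a fixed 32 bytes before the first NAL length, a value the author observed on one HTC G7. Suppose, as an unverified assumption, that those bytes are top-level MP4/3GP boxes ending with the 8-byte header of the mdat box. In that case the offset could be found by walking the box headers instead of hard-coding it.

MdatSeeker is a hypothetical helper. This sketch handles only 32-bit box sizes.

```java
import java.io.DataInputStream;
import java.io.EOFException;
import java.io.IOException;
import java.io.InputStream;
import java.nio.charset.StandardCharsets;

// Sketch (hypothetical helper): skip top-level boxes until the 'mdat' header has been consumed.
final class MdatSeeker {
    // Returns the number of bytes consumed; the stream is left on the first byte of mdat's payload.
    static long skipToMdatPayload(InputStream in) throws IOException {
        DataInputStream dis = new DataInputStream(in); // unbuffered, so 'in' stays in step
        long consumed = 0;
        byte[] type = new byte[4];
        while (true) {
            long size = dis.readInt() & 0xFFFFFFFFL; // box size, including its 8-byte header
            dis.readFully(type);
            consumed += 8;
            String name = new String(type, StandardCharsets.US_ASCII);
            if (name.equals("mdat")) {
                return consumed; // mdat's own size is ignored; a live stream may not know it yet
            }
            if (size < 8) {
                // size 0 (box runs to end of file) and 1 (64-bit size) are not handled here
                throw new IOException("unsupported size " + size + " for box " + name);
            }
            long toSkip = size - 8;
            while (toSkip > 0) {
                int n = dis.skipBytes((int) Math.min(toSkip, Integer.MAX_VALUE));
                if (n <= 0) {
                    throw new EOFException("truncated box " + name);
                }
                toSkip -= n;
                consumed += n;
            }
        }
    }
}
```

In the test data, a 24-byte ftyp box followed by the mdat header adds up to exactly 32 bytes. Real ftyp sizes depend on the device and the output format.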

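This builds on http://blog.csdn.net/zblue78/article/details/6083374. Many people found that videos recorded with that blogger's method would not play. The main problem is that the value returned by DataInputStream's readInt() is too large (more on this later). readInt() reads four bytes and returns the signed 32-bit integer they represent in big-endian order.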
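To make the readInt() point concrete: it is a plain big-endian decode, so it returns whatever the next four bytes spell. The author's own explanation of the oversized values is not part of this excerpt.

One plausible cause, offered only as an assumption, is that the reader is not positioned on a length prefix. That could happen, for example, if the header skipped before the loop has the wrong size. readInt() then swallows NAL payload bytes and reports them as a length.

ReadIntDemo is a hypothetical helper, and the bytes are made up: a length prefix of 4000 followed by payload starting with 0x65 (an IDR slice NAL header).

```java
import java.io.ByteArrayInputStream;
import java.io.DataInputStream;
import java.io.IOException;

// Sketch (hypothetical helper): what readInt() returns when aligned and when misaligned.
final class ReadIntDemo {
    // Same computation as DataInputStream.readInt(), written out by hand
    static int bigEndian(byte[] b, int off) {
        return ((b[off] & 0xFF) << 24) | ((b[off + 1] & 0xFF) << 16)
                | ((b[off + 2] & 0xFF) << 8) | (b[off + 3] & 0xFF);
    }

    // readInt() starting at an arbitrary offset, to mimic a reader that has lost alignment
    static int readIntAt(byte[] data, int offset) throws IOException {
        DataInputStream dis = new DataInputStream(
                new ByteArrayInputStream(data, offset, data.length - offset));
        return dis.readInt();
    }
}
```

Read at the correct offset, the prefix gives 4000. Two bytes off, it gives 262169992. Landing on the NAL header gives 1703445504, far larger than any plausible CIF frame.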

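Let's first look at how MediaRecorder actually behaves when encoding H.264, because that is where the problem lies.

PS: CSDN's code formatting really keeps getting worse.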

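```java
// Get the SPS and PPS from the avcC box of an H.264 MP4/3GP stream
import android.os.Bundle;
import android.os.Message;

public class GetSPSAndPPS {

    private byte[] SPS;
    private byte[] PPS;

    // Public so that callers can pass them to getParameter()
    public static final int PARA_SPS = 0;
    public static final int PARA_PPS = 1;

    public GetSPSAndPPS(byte[] in) {
        int dataLength = in.length;
        // 'a'=0x61, 'v'=0x76, 'c'=0x63, 'C'=0x43
        byte[] avcC = new byte[] { 0x61, 0x76, 0x63, 0x43 };
        // Start position of the avcC payload
        int avcRecord = 0;
        // Stop 3 bytes early so that in[ix + 3] never runs past the end of the array
        for (int ix = 0; ix + 3 < dataLength; ++ix) {
            if (in[ix] == avcC[0] && in[ix + 1] == avcC[1]
                    && in[ix + 2] == avcC[2]
                    && in[ix + 3] == avcC[3]) {
                // Found avcC: record where its payload starts, then leave the loop
                avcRecord = ix + 4;
                break;
            }
        }
        if (0 == avcRecord) {
            handleMainThread("Cannot find avcC");
            return;
        }
        // Skip configurationVersion, AVCProfileIndication, profile_compatibility,
        // AVCLevelIndication, lengthSizeMinusOne and numOfSequenceParameterSets (1 byte each).
        // Like the original, this assumes exactly one SPS and one PPS.
        int spsStartPos = avcRecord + 6;
        byte[] spsbt = new byte[] { in[spsStartPos], in[spsStartPos + 1] };
        int spsLength = bytes2Int(spsbt);
        SPS = new byte[spsLength];
        spsStartPos += 2;
        System.arraycopy(in, spsStartPos, SPS, 0, spsLength);

        // Skip the SPS itself and the 1-byte numOfPictureParameterSets
        int ppsStartPos = spsStartPos + spsLength + 1;
        byte[] ppsbt = new byte[] { in[ppsStartPos], in[ppsStartPos + 1] };
        int ppsLength = bytes2Int(ppsbt);
        PPS = new byte[ppsLength];
        ppsStartPos += 2;
        System.arraycopy(in, ppsStartPos, PPS, 0, ppsLength);
        handleMainThread("Find SPS and PPS!");
    }

    // Unsigned big-endian 16-bit length; mask each byte so values >= 0x80 are not sign-extended
    private int bytes2Int(byte[] bt) {
        return ((bt[0] & 0xFF) << 8) | (bt[1] & 0xFF);
    }

    public byte[] getParameter(int type) {
        switch (type) {
        case PARA_SPS:
            return SPS;
        case PARA_PPS:
            return PPS;
        default:
            return null;
        }
    }

    // Post a status string to the UI thread. RAS_MAIN.myhandle is a Handler defined
    // elsewhere in the author's project (not shown in this article).
    private void handleMainThread(String s) {
        Message msg = Message.obtain();
        Bundle b = new Bundle();
        b.putString("String", s);
        msg.setData(b);
        try {
            RAS_MAIN.myhandle.sendMessage(msg);
        } catch (Exception e) {
            System.out.print(e.getMessage());
        }
    }
}
```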
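The code below is an Android-free sketch of the same avcC walk, so it can be run on a plain byte array. AvcCParser is a hypothetical name. Like GetSPSAndPPS, it assumes one SPS and one PPS, but it also checks bounds and reads numOfSequenceParameterSets instead of skipping it.

A two-byte length must be assembled from bytes masked with 0xFF. Widening a Java byte to int sign-extends it. A length of 0x0180 (384) built as `ret = bt[0]; ret <<= 8; ret |= bt[1];` therefore comes out as -128, and the following `new byte[length]` throws.

```java
import java.util.Arrays;

// Sketch (hypothetical helper): extract the first SPS and PPS from an AVCDecoderConfigurationRecord.
final class AvcCParser {
    // Unsigned big-endian 16-bit value at in[pos]
    static int u16(byte[] in, int pos) {
        return ((in[pos] & 0xFF) << 8) | (in[pos + 1] & 0xFF);
    }

    // Returns {sps, pps}, or null if no complete avcC record is found
    static byte[][] parse(byte[] in) {
        int record = -1;
        for (int i = 0; i + 3 < in.length; i++) {
            if (in[i] == 'a' && in[i + 1] == 'v' && in[i + 2] == 'c' && in[i + 3] == 'C') {
                record = i + 4; // payload starts right after the box type
                break;
            }
        }
        if (record < 0) return null;
        // Skip version, profile, compatibility, level and lengthSizeMinusOne (1 byte each)
        int pos = record + 5;
        if (pos + 3 > in.length) return null;
        int numSps = in[pos++] & 0x1F; // low 5 bits hold the SPS count
        int spsLen = u16(in, pos);
        pos += 2;
        if (numSps < 1 || pos + spsLen + 3 > in.length) return null;
        byte[] sps = Arrays.copyOfRange(in, pos, pos + spsLen);
        pos += spsLen;
        int numPps = in[pos++] & 0xFF;
        int ppsLen = u16(in, pos);
        pos += 2;
        if (numPps < 1 || pos + ppsLen > in.length) return null;
        byte[] pps = Arrays.copyOfRange(in, pos, pos + ppsLen);
        return new byte[][] { sps, pps };
    }
}
```

Feeding it an avcC box built from the SPS and PPS bytes hard-coded in VideoCameraActivity returns exactly those bytes. That is one way to check hard-coded parameters against what a device actually emits.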
