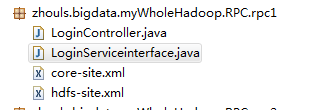

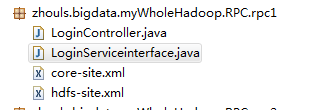

Hadoop HDFS Programming API Primer Series: RPC Version 1 (Part 8)

2016-12-14 00:05

Without further ado, here is the code.

[b]Code[/b]

[b]代码[/b]

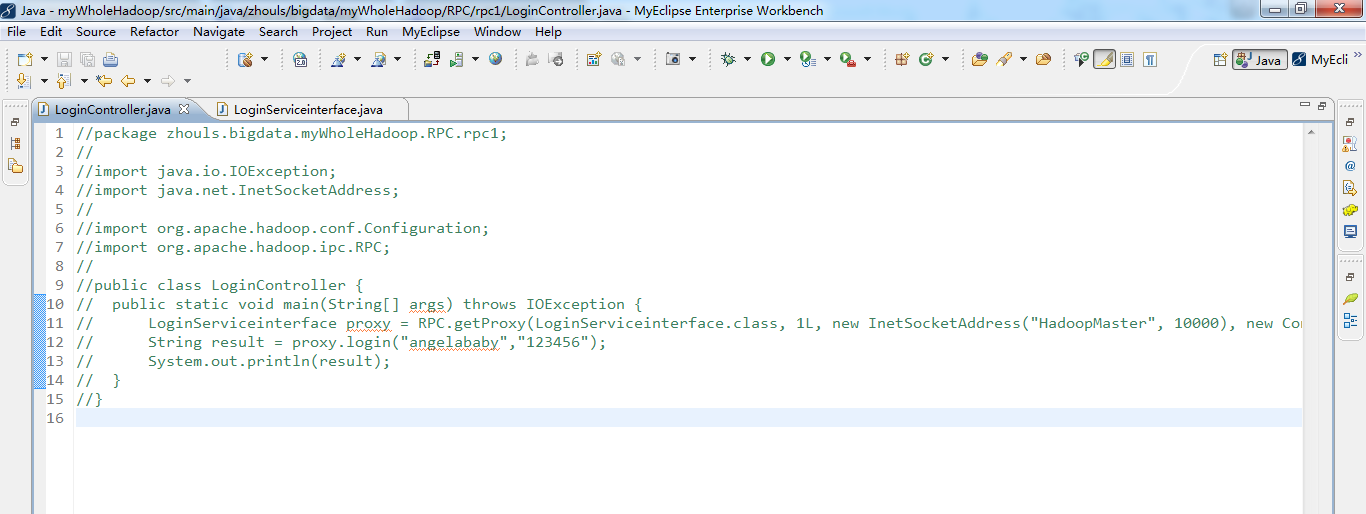

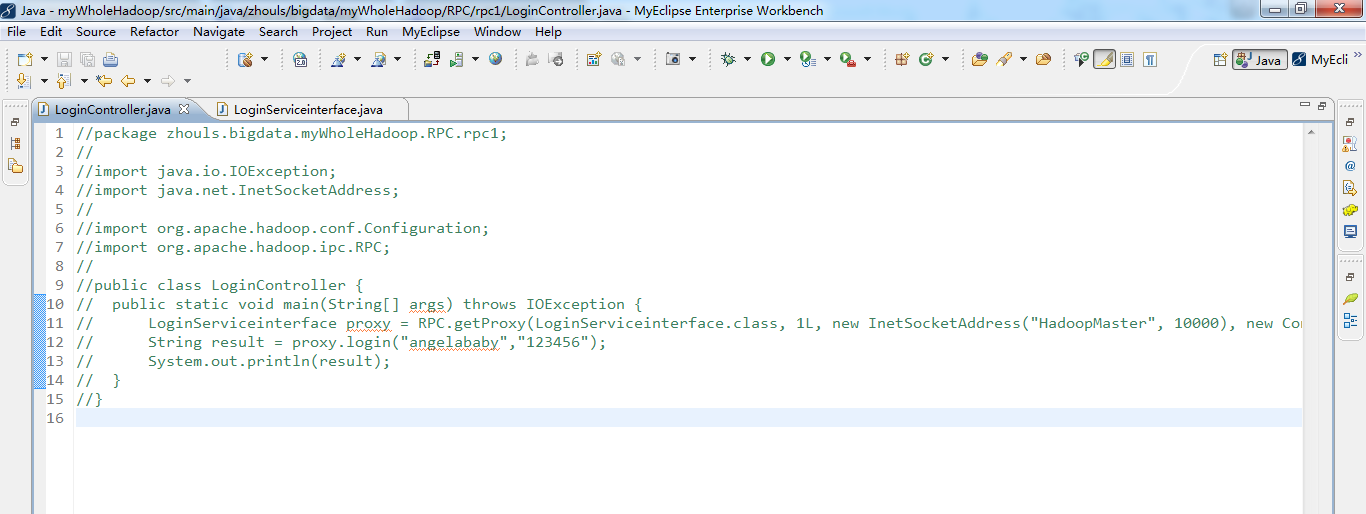

package zhouls.bigdata.myWholeHadoop.RPC.rpc1;

import java.io.IOException;

import java.net.InetSocketAddress;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.ipc.RPC;

public class LoginController {

public static void main(String[] args) throws IOException {

LoginServiceinterface proxy = RPC.getProxy(LoginServiceinterface.class, 1L, new InetSocketAddress("HadoopMaster", 10000), new Configuration());

String result = proxy.login("angelababy","123456");

System.out.println(result);

}

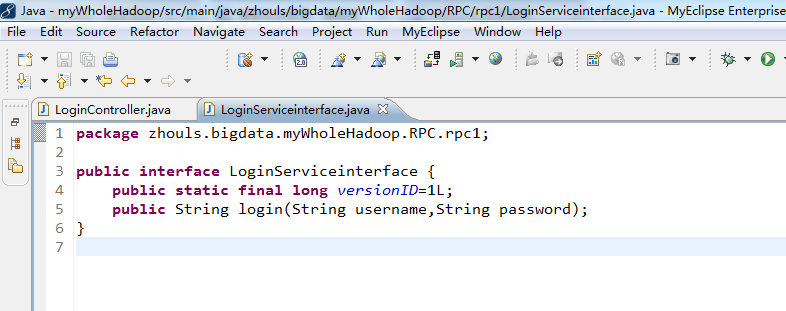

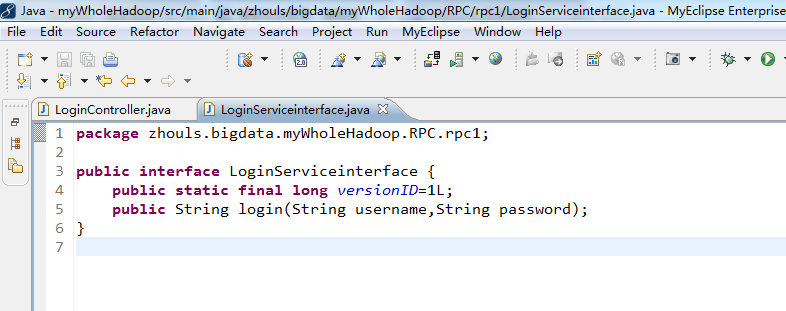

}package zhouls.bigdata.myWholeHadoop.RPC.rpc1;

    // Protocol interface shared by the RPC client and the RPC server.
    public interface LoginServiceinterface {

        // Protocol version; the client passes the same value to RPC.getProxy.
        public static final long versionID = 1L;

        public String login(String username, String password);
    }

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at

        http://www.apache.org/licenses/LICENSE-2.0

      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->

    <!-- core-site.xml -->
    <!-- Put site-specific property overrides in this file. -->

    <configuration>
      <property>
        <name>fs.default.name</name>
        <value>hdfs://HadoopMaster:9000</value>
        <description>The name of the default file system, using 9000 port.</description>
      </property>
      <property>
        <name>hadoop.tmp.dir</name>
        <value>/tmp</value>
        <description>A base for other temporary directories.</description>
      </property>
    </configuration>

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at

        http://www.apache.org/licenses/LICENSE-2.0

      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->

    <!-- hdfs-site.xml -->
    <!-- Put site-specific property overrides in this file. -->

    <configuration>
      <property>
        <name>dfs.namenode.rpc-address</name>
        <value>HadoopMaster:9000</value>
      </property>
      <property>
        <name>dfs.replication</name>
        <value>2</value>
        <description>Set to 1 for pseudo-distributed mode, Set to 2 for distributed mode, Set to 3 for distributed mode.</description>
      </property>
      <property>
        <name>dfs.namenode.http-address</name>
        <value>HadoopMaster:50070</value>
      </property>
    </configuration>
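The client code in this post gets its `proxy` object from `RPC.getProxy` and then calls `login` on it as if it were a local object. To make that idea concrete, here is a minimal sketch of the proxy pattern using only the JDK's `java.lang.reflect.Proxy`. It is not Hadoop's implementation: everything runs in one process with no network, and the class `RpcProxySketch`, the `LoginServiceImpl` class, the extra `serverVersion` parameter, and the reply text are all assumptions made up for illustration.

```java
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.InvocationTargetException;
import java.lang.reflect.Proxy;

public class RpcProxySketch {

    /** Mirrors the protocol interface from this post: a version constant plus one method. */
    public interface LoginServiceinterface {
        long versionID = 1L;

        String login(String username, String password);
    }

    /** Hypothetical server-side implementation; the reply text is invented for this sketch. */
    public static class LoginServiceImpl implements LoginServiceinterface {
        @Override
        public String login(String username, String password) {
            return username + " logged in successfully";
        }
    }

    /**
     * Simplified stand-in for a getProxy call: checks that client and server agree
     * on the protocol version, then returns a dynamic proxy that forwards every
     * interface call to the target object. A real RPC engine would serialize the
     * call and send it over a socket at this point instead of invoking locally.
     */
    public static <T> T getProxy(Class<T> protocol, long clientVersion, long serverVersion, T target) {
        if (clientVersion != serverVersion) {
            throw new IllegalStateException(
                    "Protocol version mismatch: client=" + clientVersion + ", server=" + serverVersion);
        }
        InvocationHandler handler = (proxy, method, methodArgs) -> {
            try {
                return method.invoke(target, methodArgs);
            } catch (InvocationTargetException e) {
                // Rethrow the exception raised by the target rather than the reflection wrapper.
                throw e.getCause();
            }
        };
        Object instance = Proxy.newProxyInstance(
                protocol.getClassLoader(), new Class<?>[] {protocol}, handler);
        return protocol.cast(instance);
    }

    public static void main(String[] args) {
        LoginServiceinterface proxy = getProxy(
                LoginServiceinterface.class,
                LoginServiceinterface.versionID,
                LoginServiceinterface.versionID,
                new LoginServiceImpl());
        // Same call as in LoginController, but dispatched in-process.
        System.out.println(proxy.login("angelababy", "123456"));
    }
}
```

Running `main` prints `angelababy logged in successfully`. Passing a different client version, for example `2L`, makes `getProxy` throw before any call is dispatched. This sketch uses that check to show why the interface declares a `versionID` at all.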
