Compiling the Hadoop-3.0.0-alpha1 native libraries with snappy

2016-12-14 00:00


1. Hadoop-3.0.0-alpha1

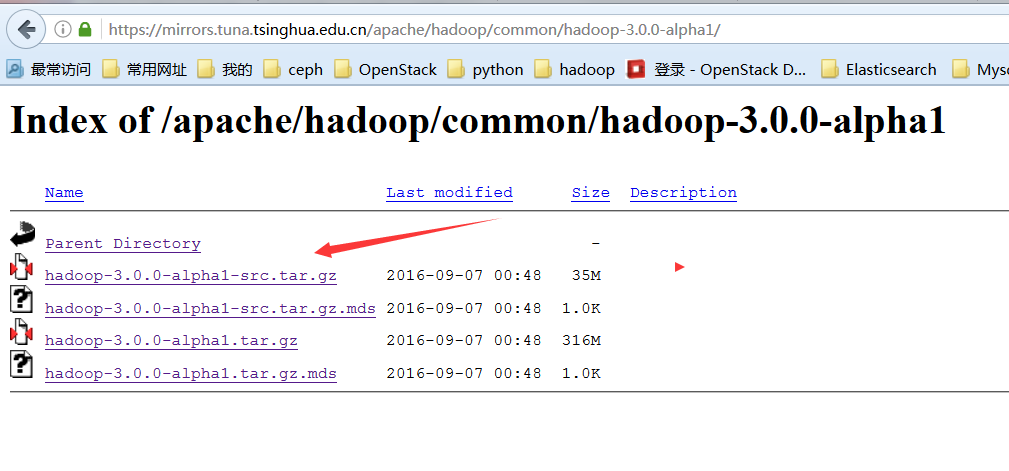

1.1、Download the source code

https://mirrors.tuna.tsinghua.edu.cn/apache/hadoop/common/hadoop-3.0.0-alpha1/

1.2、Extract

    tar -zxvf hadoop-3.0.0-alpha1-src.tar.gz
    mv hadoop-3.0.0-alpha1-src /opt/hadoop

1.3、Check the build requirements

Open BUILDING.txt to see the required software:

* Unix System
* JDK 1.8+
* Maven 3.0 or later
* Findbugs 1.3.9 (if running findbugs)
* ProtocolBuffer 2.5.0
* CMake 2.6 or newer (if compiling native code), must be 3.0 or newer on Mac
* Zlib devel (if compiling native code)
* openssl devel (if compiling native hadoop-pipes and to get the best HDFS encryption performance)
* Linux FUSE (Filesystem in Userspace) version 2.6 or above (if compiling fuse_dfs)
* Internet connection for first build (to fetch all Maven and Hadoop dependencies)
* python (for releasedocs)
* bats (for shell code testing)
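Before installing anything, it can help to see which of these tools are already on the PATH. The following is a minimal sketch, not part of BUILDING.txt: the function names `check_tool` and `check_required_tools` are hypothetical, and the list of command names (`java`, `mvn`, `protoc`, `cmake`) is an assumption derived from the requirements above.

```shell
#!/bin/sh
# Report whether a command is available on PATH.
# Prints "<name>: found" or "<name>: missing"; returns 0 or 1 accordingly.
check_tool() {
    if command -v "$1" >/dev/null 2>&1; then
        echo "$1: found"
    else
        echo "$1: missing"
        return 1
    fi
}

# Check a few of the build tools named in BUILDING.txt.
# The command names below are assumptions; adjust them for your system.
check_required_tools() {
    missing=0
    for tool in java mvn protoc cmake; do
        check_tool "$tool" || missing=$((missing + 1))
    done
    echo "missing tools: $missing"
}
```

Calling `check_required_tools` prints one line per tool followed by a count of the missing ones. It only checks presence, not versions.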

2. Install JDK 1.8+

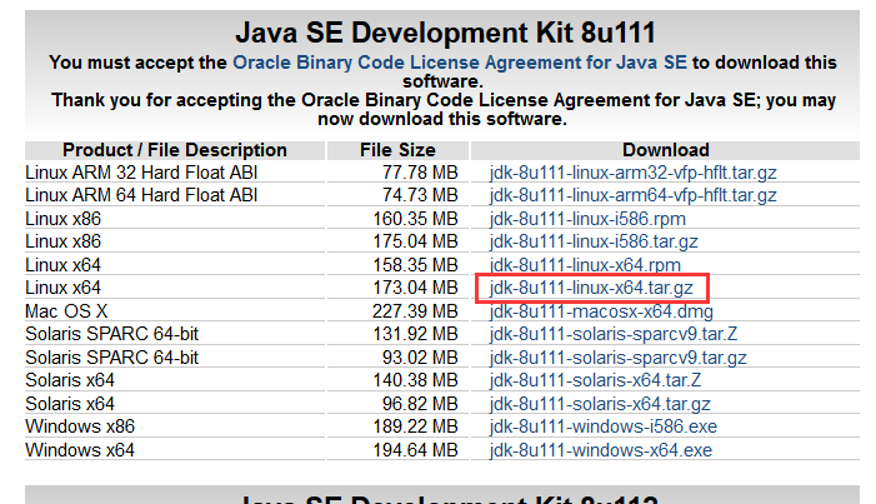

2.1、Download

Download the JDK 8 tar package from the Oracle website: www.oracle.com/technetwork/java/javase/downloads/jdk8-downloads-2133151.html

2.2、Extract

    mkdir -p /opt/dlw/core
    cd /opt/dlw/core
    tar -zxvf jdk-8u111-linux-x64.tar.gz
    mv jdk1.8.0_111 jdk

2.3、Configure environment variables

Add the following settings to /etc/profile:

    export JAVA_HOME=/opt/dlw/core/jdk
    export CLASSPATH=.:$JAVA_HOME/lib:$JAVA_HOME/jre/lib:$CLASSPATH
    export PATH=$JAVA_HOME/bin:$JAVA_HOME/jre/bin:$PATH

2.4、Check the Java version

    source /etc/profile
    java -version
    java version "1.8.0_111"
    Java(TM) SE Runtime Environment (build 1.8.0_111-b14)
    Java HotSpot(TM) 64-Bit Server VM (build 25.111-b14, mixed mode)
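Since the build needs JDK 1.8 or later, the version check can be scripted instead of read by eye. This is a rough sketch under the assumption that the first line of the `java -version` output has the form shown above; the helper names `java_version_string` and `java_major` are hypothetical.

```shell
#!/bin/sh
# Pull the quoted version out of the first line of `java -version` output
# (read from stdin), e.g. 'java version "1.8.0_111"' -> 1.8.0_111.
java_version_string() {
    sed -n '1s/.*version "\([^"]*\)".*/\1/p'
}

# Extract the major Java version from a version string:
# legacy "1.x" strings map to x, newer strings use the first field.
java_major() {
    case "$1" in
        1.*) echo "$1" | cut -d. -f2 ;;
        *)   echo "$1" | cut -d. -f1 ;;
    esac
}
```

Because `java -version` writes to standard error, a typical call would be `java_major "$(java -version 2>&1 | java_version_string)"`, and the result can then be compared against 8.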

3. Install Maven

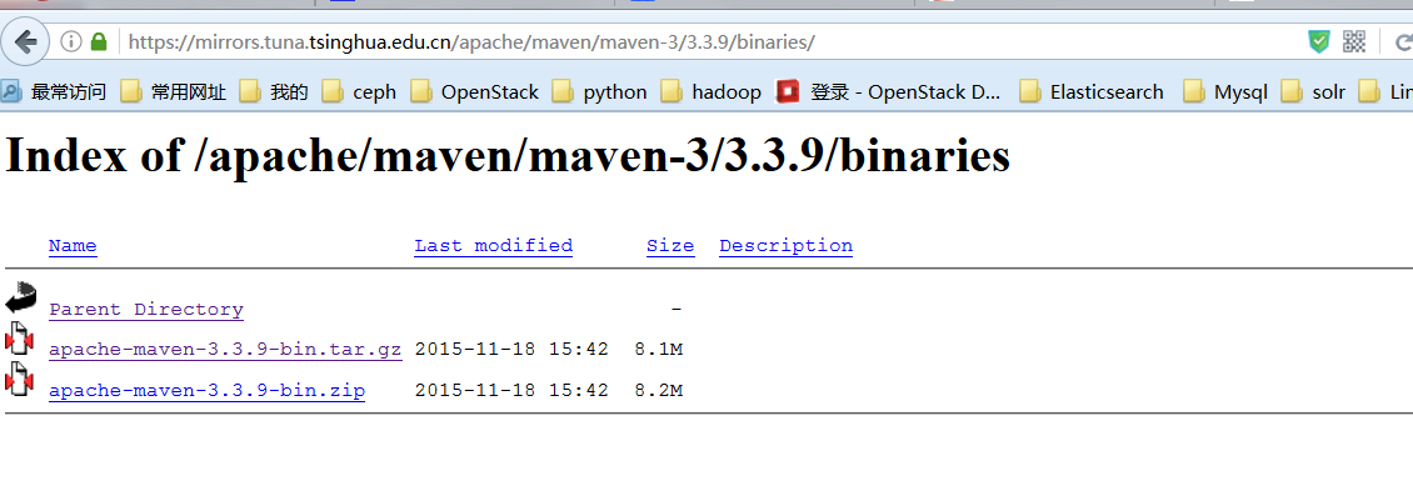

3.1、Download

https://mirrors.tuna.tsinghua.edu.cn/apache/maven/maven-3/3.3.9/binaries/

3.2、Extract and create a symbolic link

    tar -zxvf apache-maven-3.3.9-bin.tar.gz
    mv apache-maven-3.3.9 /opt/dlw/core/mvn
    cd /usr/bin
    ln -s /opt/dlw/core/mvn/bin/mvn mvn

3.3、Check the version

    mvn --version
    Apache Maven 3.3.9 (bb52d8502b132ec0a5a3f4c09453c07478323dc5; 2015-11-11T00:41:47+08:00)
    Maven home: /opt/beh/core/mvn
    Java version: 1.8.0_111, vendor: Oracle Corporation
    Java home: /opt/beh/core/jdk/jre
    Default locale: en_US, platform encoding: UTF-8
    OS name: "linux", version: "3.10.0-327.el7.x86_64", arch: "amd64", family: "unix"

4. Install protobuf

Download protobuf version 2.5.0.

4.1、Extract and install

    tar -zxvf protobuf-2.5.0.tar.gz
    mv protobuf-2.5.0 /opt/protobuf
    cd /opt/protobuf/
    yum install gcc gcc-c++ -y
    ./configure
    make
    make install

4.2、Check

    protoc --version
    libprotoc 2.5.0

5. Install FindBugs

5.1、Download

https://sourceforge.net/projects/findbugs/files/findbugs/1.3.9/findbugs-1.3.9.tar.gz/download

5.2、Extract

    tar -zxf findbugs-1.3.9.tar.gz
    mv findbugs-1.3.9 /opt/findbugs
    cd /opt/findbugs

5.3、Update the environment variables

    vi /etc/profile

Add the following lines:

    export FINDBUGS_HOME=/opt/findbugs
    export PATH=$FINDBUGS_HOME/bin:$JAVA_HOME/bin:$JAVA_HOME/jre/bin:$PATH

5.4、Check

    findbugs -version
    1.3.9

6. Install required dependencies and other packages

6.1 Install dependency packages

    yum -y install lzo-devel zlib-devel autoconf automake libtool openssl-devel svn ncurses-devel

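6.2 Install CMake

If there is no particular CMake version requirement, install it directly with yum; the default version is 2.8.12.2-2.el7:

    yum install cmake

If a CMake version higher than 3.4 is required, download the binary package instead.

CMake download

    tar -zxvf cmake-3.10.1-Linux-x86_64.tar.gz
    mv cmake-3.10.1-Linux-x86_64 /opt/cmake
    ln -s /opt/cmake/bin/cmake /usr/bin/cmake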
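Whether the yum package is new enough comes down to a version comparison, which is easy to get wrong with plain string or numeric tests (2.8.12.2 versus 3.4, for example). A small sketch follows; the helper name `version_ge` is hypothetical, and it assumes GNU coreutils `sort -V` is available, as it is on CentOS 7.

```shell
#!/bin/sh
# Return 0 if version $1 is greater than or equal to version $2.
# Uses `sort -V` (version sort): if the required version sorts first
# (or the two are equal), the installed version is new enough.
version_ge() {
    [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}
```

For example, `version_ge 2.8.12.2 3.4` fails while `version_ge 3.10.1 3.4` succeeds. The installed version can be taken from the first line of `cmake --version`, whose third field is the version number.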

7. Install snappy

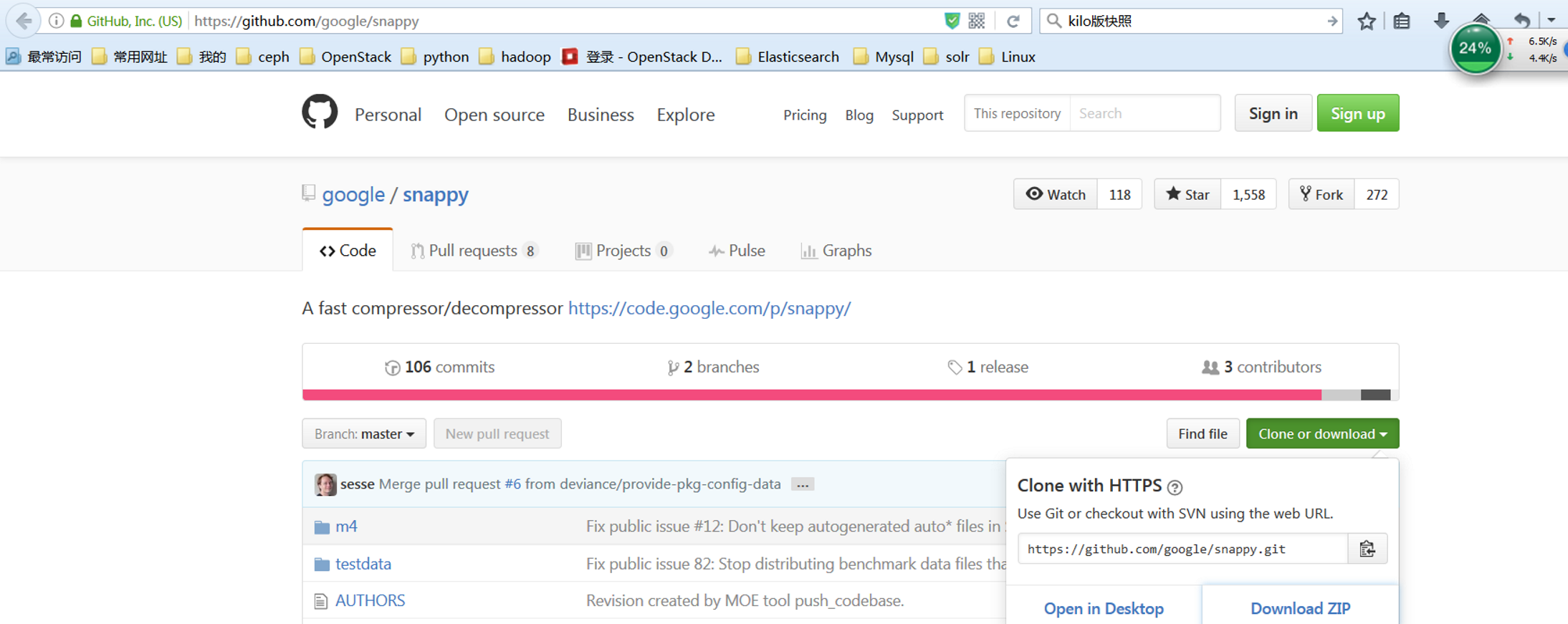

7.1、Download

snappy download link

7.2、Extract and install

For version 1.1.6 and earlier:

    unzip snappy-master.zip
    cd snappy-master
    ./autogen.sh
    ./configure
    make
    make install

For version 1.1.7:

    unzip snappy-master.zip
    cd snappy-master
    mkdir build
    cd build
    cmake ..
    make && make install
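The Hadoop native build looks for a shared libsnappy, so it is worth confirming that one was actually installed before starting the long Maven build. The sketch below is only an illustration: `find_snappy_lib` is a hypothetical helper, and the directories to search are assumptions that depend on the install prefix.

```shell
#!/bin/sh
# Print the first libsnappy.so* found in the given directories.
# Returns 1 if no shared snappy library is found in any of them.
find_snappy_lib() {
    for dir in "$@"; do
        for f in "$dir"/libsnappy.so*; do
            if [ -e "$f" ]; then
                echo "$f"
                return 0
            fi
        done
    done
    return 1
}
```

A call such as `find_snappy_lib /usr/local/lib /usr/local/lib64 /usr/lib64 /lib64` covers the usual locations. If only a static `libsnappy.a` turns up after the CMake build, re-running CMake with the standard `-DBUILD_SHARED_LIBS=ON` option is probably what is needed.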

8. Compile

8.1、Compile Hadoop

    cd /opt/hadoop
    mvn package -Pdist,native -DskipTests -Dtar
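With the command above, a missing libsnappy does not necessarily stop the build; the resulting libhadoop may simply lack snappy support. BUILDING.txt documents a `-Drequire.snappy` option that fails the build when libsnappy.so is not found. The sketch below assembles the command with or without that flag; the function name `hadoop_build_cmd` and its `strict` argument are hypothetical.

```shell
#!/bin/sh
# Print the Maven command for the native build.
# Pass "strict" as the first argument to add -Drequire.snappy, which
# BUILDING.txt describes as failing the build if libsnappy.so is not found.
hadoop_build_cmd() {
    cmd="mvn package -Pdist,native -DskipTests -Dtar"
    if [ "${1:-}" = "strict" ]; then
        cmd="$cmd -Drequire.snappy"
    fi
    echo "$cmd"
}
```

One way to use it is `cd /opt/hadoop && $(hadoop_build_cmd strict) 2>&1 | tee build.log`, which also keeps a copy of the build output for later inspection.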

Keep the network connection available during the build. A successful build ends with output like the following:

    $ tar cf hadoop-3.0.0-alpha1.tar hadoop-3.0.0-alpha1
    $ gzip -f hadoop-3.0.0-alpha1.tar
    Hadoop dist tar available at: /opt/hadoop/hadoop-dist/target/hadoop-3.0.0-alpha1.tar.gz
    [INFO] ------------------------------------------------------------------------
    [INFO] Reactor Summary:
    [INFO]
    [INFO] Apache Hadoop Main ................................. SUCCESS [ 0.923 s]
    [INFO] Apache Hadoop Build Tools .......................... SUCCESS [ 0.637 s]
    [INFO] Apache Hadoop Project POM .......................... SUCCESS [ 1.198 s]
    [INFO] Apache Hadoop Annotations .......................... SUCCESS [ 2.637 s]
    [INFO] Apache Hadoop Assemblies ........................... SUCCESS [ 0.166 s]
    [INFO] Apache Hadoop Project Dist POM ..................... SUCCESS [ 1.411 s]
    [INFO] Apache Hadoop Maven Plugins ........................ SUCCESS [ 3.910 s]
    [INFO] Apache Hadoop MiniKDC .............................. SUCCESS [ 2.033 s]
    [INFO] Apache Hadoop Auth ................................. SUCCESS [ 4.308 s]
    [INFO] Apache Hadoop Auth Examples ........................ SUCCESS [ 3.137 s]
    [INFO] Apache Hadoop Common ............................... SUCCESS [01:31 min]
    [INFO] Apache Hadoop NFS .................................. SUCCESS [ 4.958 s]
    [INFO] Apache Hadoop KMS .................................. SUCCESS [ 13.450 s]
    [INFO] Apache Hadoop Common Project ....................... SUCCESS [ 0.054 s]
    [INFO] Apache Hadoop HDFS Client .......................... SUCCESS [ 29.811 s]
    [INFO] Apache Hadoop HDFS ................................. SUCCESS [01:41 min]
    [INFO] Apache Hadoop HDFS Native Client ................... SUCCESS [ 5.130 s]
    [INFO] Apache Hadoop HttpFS ............................... SUCCESS [ 35.180 s]
    [INFO] Apache Hadoop HDFS BookKeeper Journal .............. SUCCESS [ 4.794 s]
    [INFO] Apache Hadoop HDFS-NFS ............................. SUCCESS [ 3.529 s]
    [INFO] Apache Hadoop HDFS Project ......................... SUCCESS [ 0.053 s]
    [INFO] Apache Hadoop YARN ................................. SUCCESS [ 0.038 s]
    [INFO] Apache Hadoop YARN API ............................. SUCCESS [ 16.161 s]
    [INFO] Apache Hadoop YARN Common .......................... SUCCESS [ 33.397 s]
    [INFO] Apache Hadoop YARN Server .......................... SUCCESS [ 0.051 s]
    [INFO] Apache Hadoop YARN Server Common ................... SUCCESS [ 7.454 s]
    [INFO] Apache Hadoop YARN NodeManager ..................... SUCCESS [ 16.125 s]
    [INFO] Apache Hadoop YARN Web Proxy ....................... SUCCESS [ 3.310 s]
    [INFO] Apache Hadoop YARN ApplicationHistoryService ....... SUCCESS [ 6.031 s]
    [INFO] Apache Hadoop YARN Timeline Service ................ SUCCESS [ 8.618 s]
    [INFO] Apache Hadoop YARN ResourceManager ................. SUCCESS [ 23.076 s]
    [INFO] Apache Hadoop YARN Server Tests .................... SUCCESS [ 1.818 s]
    [INFO] Apache Hadoop YARN Client .......................... SUCCESS [ 5.562 s]
    [INFO] Apache Hadoop YARN SharedCacheManager .............. SUCCESS [ 3.104 s]
    [INFO] Apache Hadoop YARN Timeline Plugin Storage ......... SUCCESS [ 3.118 s]
    [INFO] Apache Hadoop YARN Timeline Service HBase tests .... SUCCESS [ 4.144 s]
    [INFO] Apache Hadoop YARN Applications .................... SUCCESS [ 0.030 s]
    [INFO] Apache Hadoop YARN DistributedShell ................ SUCCESS [ 2.862 s]
    [INFO] Apache Hadoop YARN Unmanaged Am Launcher ........... SUCCESS [ 1.957 s]
    [INFO] Apache Hadoop YARN Site ............................ SUCCESS [ 0.030 s]
    [INFO] Apache Hadoop YARN Registry ........................ SUCCESS [ 4.267 s]
    [INFO] Apache Hadoop YARN Project ......................... SUCCESS [ 9.494 s]
    [INFO] Apache Hadoop MapReduce Client ..................... SUCCESS [ 0.126 s]
    [INFO] Apache Hadoop MapReduce Core ....................... SUCCESS [ 21.362 s]
    [INFO] Apache Hadoop MapReduce Common ..................... SUCCESS [ 16.393 s]
    [INFO] Apache Hadoop MapReduce Shuffle .................... SUCCESS [ 3.718 s]
    [INFO] Apache Hadoop MapReduce App ........................ SUCCESS [ 9.266 s]
    [INFO] Apache Hadoop MapReduce HistoryServer .............. SUCCESS [ 5.469 s]
    [INFO] Apache Hadoop MapReduce JobClient .................. SUCCESS [ 7.002 s]
    [INFO] Apache Hadoop MapReduce HistoryServer Plugins ...... SUCCESS [ 1.997 s]
    [INFO] Apache Hadoop MapReduce NativeTask ................. SUCCESS [ 37.612 s]
    [INFO] Apache Hadoop MapReduce Examples ................... SUCCESS [ 5.021 s]
    [INFO] Apache Hadoop MapReduce ............................ SUCCESS [ 5.284 s]
    [INFO] Apache Hadoop MapReduce Streaming .................. SUCCESS [ 4.639 s]
    [INFO] Apache Hadoop Distributed Copy ..................... SUCCESS [ 9.254 s]
    [INFO] Apache Hadoop Archives ............................. SUCCESS [ 2.161 s]
    [INFO] Apache Hadoop Archive Logs ......................... SUCCESS [ 2.401 s]
    [INFO] Apache Hadoop Rumen ................................ SUCCESS [ 4.663 s]
    [INFO] Apache Hadoop Gridmix .............................. SUCCESS [ 4.135 s]
    [INFO] Apache Hadoop Data Join ............................ SUCCESS [ 2.230 s]
    [INFO] Apache Hadoop Extras ............................... SUCCESS [ 2.528 s]
    [INFO] Apache Hadoop Pipes ................................ SUCCESS [ 5.361 s]
    [INFO] Apache Hadoop OpenStack support .................... SUCCESS [ 4.569 s]
    [INFO] Apache Hadoop Amazon Web Services support .......... SUCCESS [ 4.738 s]
    [INFO] Apache Hadoop Azure support ........................ SUCCESS [ 4.228 s]
    [INFO] Apache Hadoop Client ............................... SUCCESS [ 7.863 s]
    [INFO] Apache Hadoop Mini-Cluster ......................... SUCCESS [ 2.282 s]
    [INFO] Apache Hadoop Scheduler Load Simulator ............. SUCCESS [ 5.680 s]
    [INFO] Apache Hadoop Azure Data Lake support .............. SUCCESS [ 4.789 s]
    [INFO] Apache Hadoop Tools Dist ........................... SUCCESS [ 10.628 s]
    [INFO] Apache Hadoop Kafka Library support ................ SUCCESS [ 2.151 s]
    [INFO] Apache Hadoop Tools ................................ SUCCESS [ 0.030 s]
    [INFO] Apache Hadoop Distribution ......................... SUCCESS [ 36.886 s]
    [INFO] ------------------------------------------------------------------------
    [INFO] BUILD SUCCESS
    [INFO] ------------------------------------------------------------------------
    [INFO] Total time: 11:37 min
    [INFO] Finished at: 2016-12-14T10:36:49+08:00
    [INFO] Final Memory: 295M/964M
    [INFO] ------------------------------------------------------------------------
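With more than seventy modules in the reactor summary, scanning the log by eye is tedious. If the Maven output was saved to a file, a couple of small helpers can answer the two questions that matter: did the build succeed, and if not, which modules did not. This is a sketch that assumes the log line format shown above; the function names are hypothetical.

```shell
#!/bin/sh
# Return 0 if a saved Maven log contains the final "BUILD SUCCESS" line.
build_succeeded() {
    grep -q '^\[INFO\] BUILD SUCCESS' "$1"
}

# Print the names of reactor modules reported as FAILURE or SKIPPED.
# Each summary line looks like: "[INFO] <module name> ..... <STATUS> [time]".
failed_modules() {
    grep -E '^\[INFO\] .* \.+ (FAILURE|SKIPPED)' "$1" |
        sed -E 's/^\[INFO\] (.*[^ .]) \.+ .*/\1/'
}
```

For a log saved as `build.log`, `build_succeeded build.log || failed_modules build.log` prints the problem modules only when the build did not succeed.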

8.2、Check the native libraries after the build

The build output is located under /opt/hadoop/hadoop-dist/target:

    # cd /opt/hadoop/hadoop-dist/target/hadoop-3.0.0-alpha1/
    # ./bin/hadoop checknative -a
    2016-12-14 11:12:04,874 WARN bzip2.Bzip2Factory: Failed to load/initialize native-bzip2 library system-native, will use pure-Java version
    2016-12-14 11:12:04,880 INFO zlib.ZlibFactory: Successfully loaded & initialized native-zlib library
    Native library checking:
    hadoop: true /opt/hadoop/hadoop-dist/target/hadoop-3.0.0-alpha1/lib/native/libhadoop.so.1.0.0
    zlib: true /lib64/libz.so.1
    snappy: true /lib64/libsnappy.so.1
    lz4: true revision:10301
    bzip2: false
    openssl: true /lib64/libcrypto.so
    ISA-L: false libhadoop was built without ISA-L support
    2016-12-14 11:12:05,001 INFO util.ExitUtil: Exiting with status 1

The exit status is 1 because the -a flag requires every library to be available, and bzip2 and ISA-L are not. The libraries this build targets (hadoop, zlib, snappy, lz4, and openssl) all loaded successfully.
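To pick out the unavailable libraries without reading the whole report, the `checknative` output can be filtered on its second column. A minimal sketch, assuming the `name: true|false ...` line format shown above; the function name `missing_native_libs` is hypothetical.

```shell
#!/bin/sh
# Read `hadoop checknative -a` output on stdin and print the names of
# libraries reported as "false", one per line. Log lines (WARN/INFO) are
# ignored because their second field is a timestamp, not "false".
missing_native_libs() {
    awk '$2 == "false" { sub(/:$/, "", $1); print $1 }'
}
```

Run as `./bin/hadoop checknative -a 2>&1 | missing_native_libs`. For the output shown in this section it would list bzip2 and ISA-L.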
