Spark 1.x: installing and configuring standalone mode and Spark on YARN

2016-08-28 14:06


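Install JDK 1.7 or later. Hadoop 2.7.0 does not support JDK 1.6, and Spark dropped JDK 1.6 support starting with 1.5.0.

Install Scala 2.10.4.

Install Hadoop 2.x; at a minimum, HDFS is required.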
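As a quick sanity check of the JDK prerequisite, the sketch below compares a Java version string against the 1.7 minimum. The function name `java_version_ok` is made up for this example, and the parsing assumes the usual `java -version` output format.

```shell
#!/bin/sh
# Succeeds if a Java version string such as "1.7.0_79" is 1.7 or newer.
# java_version_ok is a hypothetical helper, not part of Spark or the JDK.
java_version_ok() {
    major=$(printf '%s' "$1" | cut -d. -f1)
    minor=$(printf '%s' "$1" | cut -d. -f2)
    # Reject anything whose leading field is not a plain number.
    case "$major" in ''|*[!0-9]*) return 1 ;; esac
    # Versions 9 and later report themselves as "9", "11.0.2", and so on.
    [ "$major" -gt 1 ] && return 0
    case "$minor" in ''|*[!0-9]*) return 1 ;; esac
    [ "$major" -eq 1 ] && [ "$minor" -ge 7 ]
}

if command -v java >/dev/null 2>&1; then
    # java -version writes to stderr, e.g.: java version "1.7.0_79"
    found=$(java -version 2>&1 | awk -F'"' '/version/ { print $2; exit }')
    if java_version_ok "$found"; then
        echo "Java $found satisfies the JDK 1.7+ requirement"
    else
        echo "Java $found is too old or could not be parsed"
    fi
else
    echo "java not found on PATH"
fi
```

The same check should be run on every node, since the master and each worker start their own JVM.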

spark-env.sh

export JAVA_HOME=    # set to the JDK installation directory

export SCALA_HOME=    # set to the Scala 2.10.4 installation directory

export HADOOP_CONF_DIR=/opt/modules/hadoop-2.2.0/etc/hadoop    # required when running on YARN

export SPARK_MASTER_IP=server1

export SPARK_MASTER_PORT=8888    # spark:// master URLs must use this port (Spark's default is 7077)

export SPARK_MASTER_WEBUI_PORT=8080

export SPARK_WORKER_CORES=    # set to the number of cores each worker may use

export SPARK_WORKER_INSTANCES=1

export SPARK_WORKER_MEMORY=26g

export SPARK_WORKER_PORT=7078

export SPARK_WORKER_WEBUI_PORT=8081

export SPARK_JAVA_OPTS="-verbose:gc -XX:-PrintGCDetails -XX:+PrintGCTimeStamps"
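Several values in this file are left blank (`JAVA_HOME`, `SCALA_HOME`, `SPARK_WORKER_CORES`) and have to be filled in for each installation. One way to catch blanks before starting the cluster is sketched below; `check_spark_env` is a hypothetical helper, not something Spark provides.

```shell
#!/bin/sh
# Print the names of variables that are unset or empty after sourcing an
# env file. check_spark_env is a hypothetical helper, not a Spark script.
check_spark_env() {
    env_file=$1
    shift
    (
        # Subshell: the sourced settings do not leak into the caller.
        . "$env_file" || exit 1
        for name in "$@"; do
            eval "value=\${$name:-}"
            [ -n "$value" ] || echo "$name"
        done
    )
}
```

For example, `check_spark_env "$SPARK_HOME/conf/spark-env.sh" JAVA_HOME SCALA_HOME SPARK_WORKER_CORES` would list whichever of the three names is still empty, assuming `SPARK_HOME` points at the Spark installation. Pass a path containing a slash so the file is not looked up on `PATH`.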

slaves (lists the worker nodes, one host per line)

xx.xx.xx.2

xx.xx.xx.3

xx.xx.xx.4

xx.xx.xx.5
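The standalone launch scripts log in to each host in `conf/slaves` over SSH, so it helps to confirm that the list parses as expected and that every host accepts a non-interactive login. A minimal sketch; `list_workers` and `check_workers` are hypothetical helper names.

```shell
#!/bin/sh
# Print the hosts in a slaves file, skipping blank lines and # comments.
list_workers() {
    sed -e 's/#.*$//' -e 's/[[:space:]]*$//' -e '/^$/d' "$1"
}

# Try a non-interactive SSH login to each worker and report the result.
check_workers() {
    for host in $(list_workers "$1"); do
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true; then
            echo "$host: ok"
        else
            echo "$host: unreachable"
        fi
    done
}
```

Running `check_workers conf/slaves` from the master node prints one line per worker. A host reported as unreachable usually means key-based SSH login has not been set up for it yet.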

When spark-submit runs, it reads its default properties from the spark-defaults.conf file.

spark-defaults.conf

spark.master=spark://hadoop-spark.dargon.org:7077
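The port in `spark.master` has to be the one the master actually listens on. The spark-env.sh in this article sets `SPARK_MASTER_PORT=8888`, while this URL uses 7077 (Spark's default) and a different host name, so the two need to be reconciled for a real cluster. The sketch below shows one way to compare the ports; `master_url_port` is a hypothetical helper and the values are copied from this article.

```shell
#!/bin/sh
# Print the port of a standalone master URL such as spark://host:7077.
# master_url_port is a hypothetical helper, not part of Spark.
master_url_port() {
    case "$1" in
        spark://*:*) echo "${1##*:}" ;;
        *) return 1 ;;
    esac
}

# Values copied from the spark-env.sh and spark-defaults.conf in this article.
SPARK_MASTER_PORT=8888
master_url=spark://hadoop-spark.dargon.org:7077

url_port=$(master_url_port "$master_url")
if [ "$url_port" = "$SPARK_MASTER_PORT" ]; then
    echo "spark.master matches SPARK_MASTER_PORT"
else
    echo "mismatch: spark.master uses $url_port, master listens on $SPARK_MASTER_PORT"
fi
```

With the values shown, the script reports a mismatch. Either side can be changed, as long as both end up naming the same host and port.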

Start the cluster

The spark-shell command is itself a wrapper that runs spark-submit.
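Spark's standalone distribution ships its launch scripts under `sbin/`; `start-all.sh` starts a master on the machine where it is run and a worker on every host in `conf/slaves`. A minimal wrapper, assuming Spark is unpacked at the directory passed in or at `$SPARK_HOME`; `start_cluster` is a hypothetical name.

```shell
#!/bin/sh
# Start the standalone master plus the workers listed in conf/slaves.
# start_cluster is a hypothetical wrapper around Spark's sbin/start-all.sh.
start_cluster() {
    spark_home=${1:-${SPARK_HOME:-}}
    if [ -z "$spark_home" ] || [ ! -x "$spark_home/sbin/start-all.sh" ]; then
        echo "sbin/start-all.sh not found; pass the Spark install directory" >&2
        return 1
    fi
    "$spark_home/sbin/start-all.sh"
}
```

Run `start_cluster /path/to/spark` on the master node (the path is a placeholder). The matching `sbin/stop-all.sh` script shuts the cluster down again.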

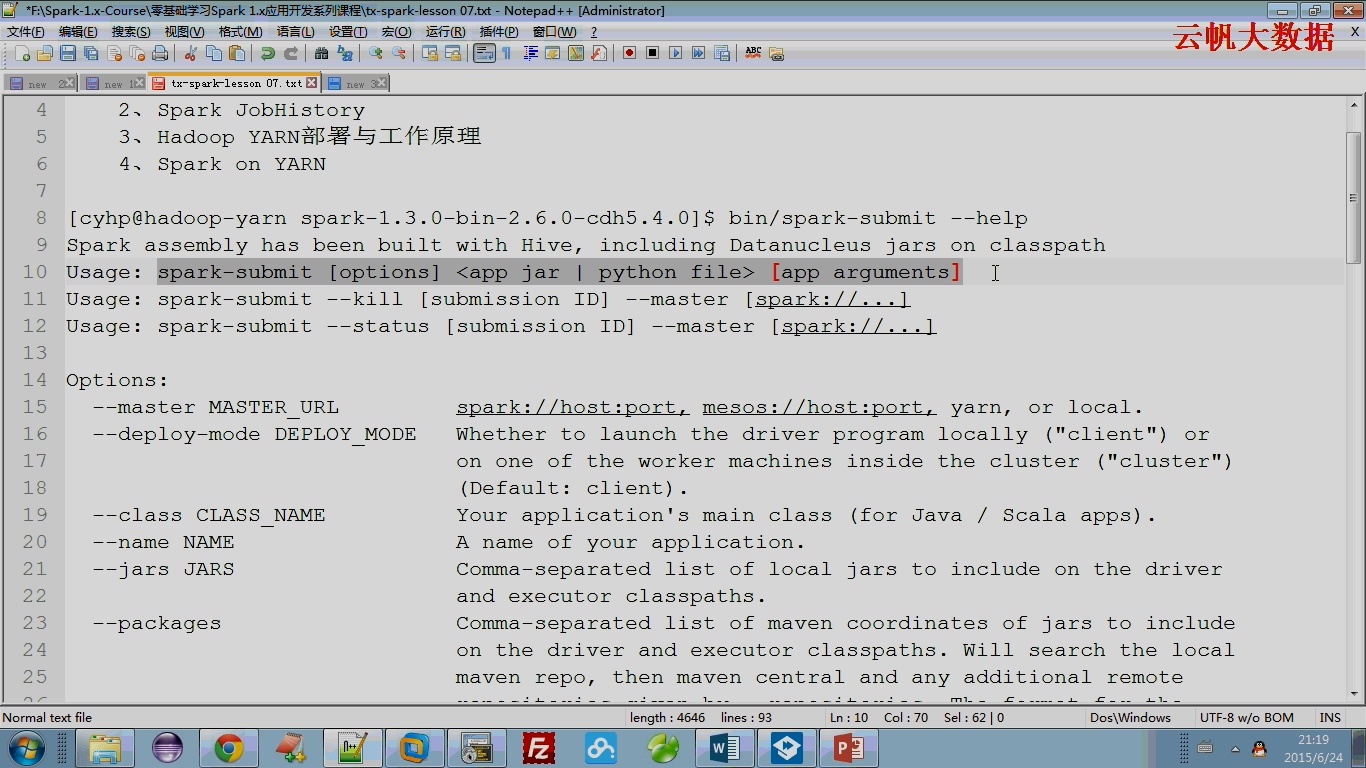

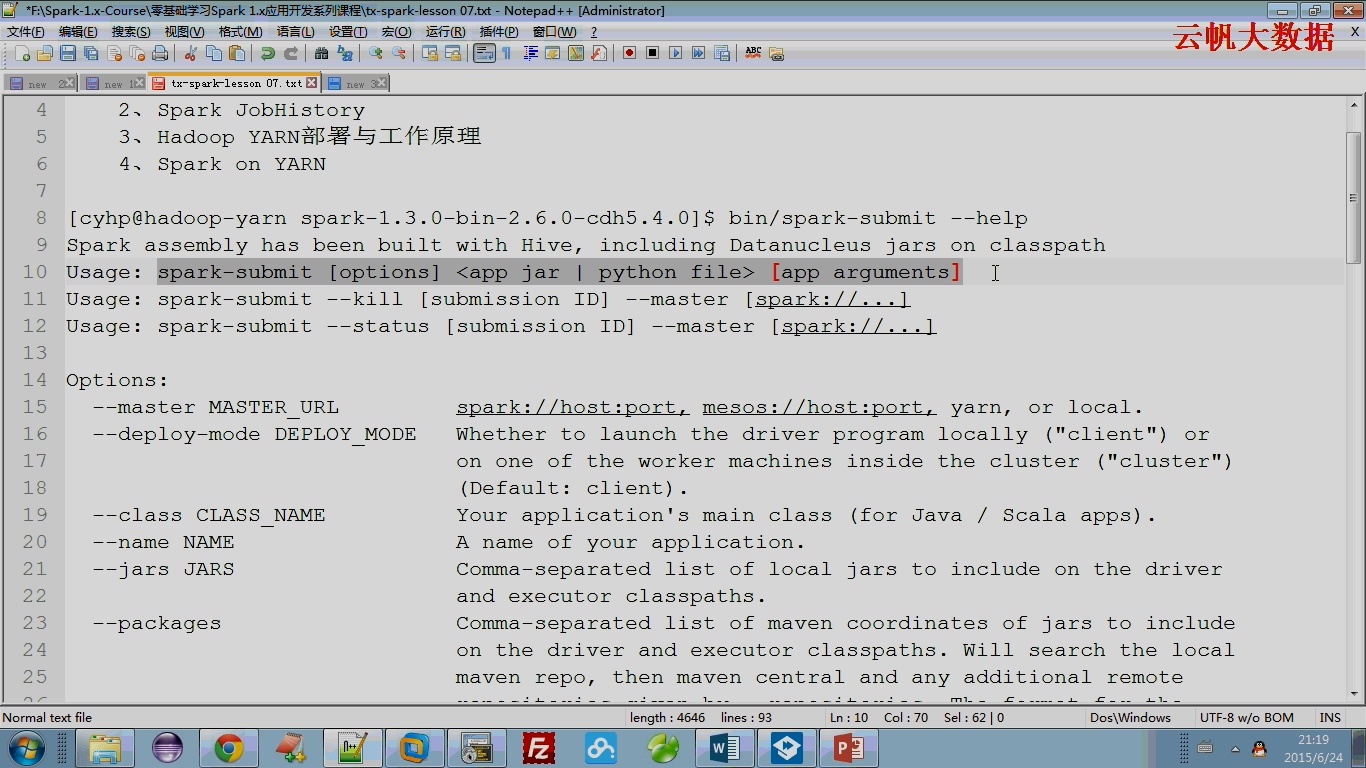

spark-submit --help

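deploy-mode controls where the driver program (SparkContext) runs: client (locally) or cluster (on the cluster).

The default is client, in which the SparkContext runs on the local machine; if it is changed to cluster, the SparkContext runs on the cluster.

For Spark on YARN in cluster deploy mode, the SparkContext runs inside the Application Master.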
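The two deploy modes can be compared side by side with the sketch below. The main class and jar path are placeholders, the master URL reuses the host and port from this article's spark-env.sh, and `run` is a hypothetical helper that only prints each command unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Placeholders for illustration: substitute your own main class and jar.
APP_CLASS=org.apache.spark.examples.SparkPi
APP_JAR=/path/to/spark-examples.jar

# Echo a command; execute it only when DRY_RUN=0.
# run is a hypothetical helper so the sketch is safe to paste anywhere.
DRY_RUN=${DRY_RUN:-1}
run() {
    echo "+ $*"
    if [ "$DRY_RUN" = 0 ]; then "$@"; fi
}

# Client mode (the default): the driver and its SparkContext run locally.
run spark-submit --master spark://server1:8888 --deploy-mode client \
    --class "$APP_CLASS" "$APP_JAR"

# Cluster mode on YARN: the driver runs inside the ApplicationMaster.
# HADOOP_CONF_DIR must point at the Hadoop configuration for this to work.
run spark-submit --master yarn --deploy-mode cluster \
    --class "$APP_CLASS" "$APP_JAR"
```

Spark 1.x also accepts the shorthand master values `yarn-client` and `yarn-cluster`, which select the deploy mode without a separate `--deploy-mode` flag.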

spark-shell quick-start link

http://spark.apache.org/docs/latest/quick-start.html

This article comes from the “点滴积累” blog; please retain this source: http://tianxingzhe.blog.51cto.com/3390077/1711959

