缓存框架Guava Cache部分源码分析 推荐

2016-07-17 15:03

441 查看

在本地缓存中,最常用的就是OSCache和谷歌的Guava Cache。其中OSCache在07年就停止维护了,但它仍然被广泛的使用。谷歌的Guava Cache也是一个非常优秀的本地缓存,使用起来非常灵活,功能也十分强大,可以说是当前本地缓存中最优秀的缓存框架之一。之前我们分析了OSCache的部分源码,本篇就通过Guava Cache的部分源码,来分析一下Guava Cache的实现原理。

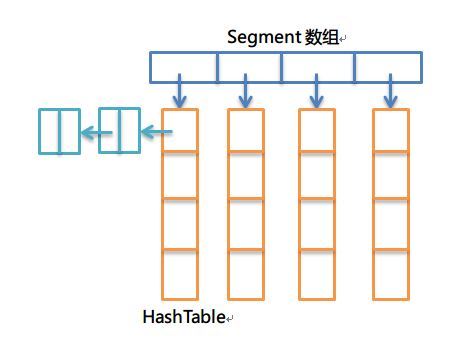

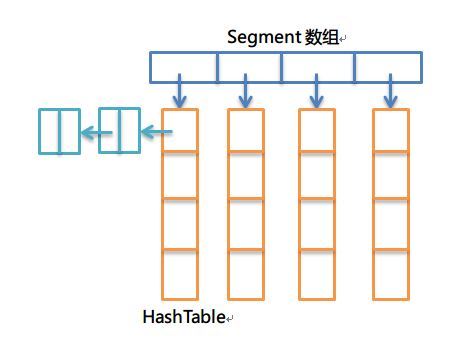

在分析之前,先弄清数据结构的使用。之前的文章提到,OSCache使用了一个扩展的HashTable,作为缓存的数据结构,由于在get操作上,没有使用同步的方式,通过引入一个更新状态数据结构,来控制并发访问的安全。Guava Cache也是使用一个扩展的HashTable作为其缓存数据结构,然而,在实现上,和OSCache是完全不同的。Guava Cache所用的HashTable和ConcurrentHashMap十分相似,通过引入一个Segment数组,对HashTable进行分段,通过分离锁、final以及volatile的配合,实现了并发环境下的线程安全,同时,性能也非常高(每个Segment段的操作互不影响,即使写操作,只要在不同的Segment上,也完全可以并发的执行)。具体的原理,可以参考ConcurrentHashMap的实现,这里就不进行具体的剖析了。

数据结构核心部分可以通过下面的图形表示:

CacheBuilder

CacheBuilder集成了创建缓存所需的各种参数。正如官方文档介绍的:CacheBuilder将创建一个LoadingCache和Cache的实例,该实例可以包含下面任何特性

自动将内容加载到缓存中

LRU淘汰策略

根据上一次访问时间或写入时间决定缓存过期

key关键字可以采用弱引用(WeakReference)

value值可以采用弱引用(WeakReference)以及软引用(SoftReference)

缓存移除或回收进行通知

统计缓存访问性能信息

所有特性都是可选的,创建的缓存可以包含上面所有的特性,也可以都不使用,具有很强的灵活性。

下面是一个简单的使用例子:

接下来,根据CacheBuilder的源码进行简要的分析:

CacheBuilder中一些重要的参数:

说明:上面就是创建缓存涉及的参数,我们可以人工指定,也可以使用默认值。我们可以看看NULL_STATS_COUNTER、NullListener的定义,其对各个方法的实现进行了重写,函数内容直接为空,这也是为了不影响性能的做法。CacheBuilder将创建缓存方法进行了封装,是值得我们借鉴的地方。

Guava Cache对于缓存的key和value提供了多种引用类型,默认情况下,两者都是强引用类型。关于引用类型的枚举定义如下:

LocalCache

这一部分结合文章开头给出的数据结构图解,就很容易理解了。

首先查看LocalCache下的成员变量:

static final int MAXIMUM_CAPACITY = 1 << 30:缓存最大容量,该数值必须是2的幂,同时小于这个最大值2^30

static final int MAX_SEGMENTS = 1 << 16:Segment数组最大容量

static final int CONTAINS_VALUE_RETRIES = 3:containsValue方法的重试次数

static final int DRAIN_THRESHOLD = 0x3F(63):Number of cache access operations that can be buffered per segment before the cache's recency ordering information is updated. This is used to avoid lock contention by recording a memento of reads and delaying a lock acquisition until the threshold is crossed or a mutation occurs.

static final int DRAIN_MAX = 16:一次清理操作中,最大移除的entry数量

final int segmentMask:定位segment

final int segmentShift:定位segment,同时让entry分布均匀,尽量平均分布在每个segment[i]中

final Segment<K, V>[] segments:segment数组,每个元素下都是一个HashTable

final int concurrencyLevel:并发程度,用来计算segment数组的大小。segment数组的大小正决定了并发的程度

final Equivalence<Object> keyEquivalence:key比较方式

final Equivalence<Object> valueEquivalence:value比较方式

final Strength keyStrength:key引用类型

final Strength valueStrength:value引用类型

final long maxWeight:最大权重

final Weigher<K, V> weigher:计算每个entry权重的接口

final long expireAfterAccessNanos:一个entry访问后多久过期

final long expireAfterWriteNanos:一个entry写入后多久过期

final long refreshNanos:一个entry写入多久后进行刷新

final Queue<RemovalNotification<K, V>> removalNotificationQueue:移除监听器使用队列

final RemovalListener<K, V> removalListener:entry过期移除或者gc回收(弱引用和软引用)将会通知的监听器

final Ticker ticker:统计时间

final EntryFactory entryFactory:创建entry的工厂

final StatsCounter globalStatsCounter:全局缓存性能统计器(命中、未命中、put成功、失败次数等)

final CacheLoader<? super K, V> defaultLoader:默认的缓存加载器

LocalCache构造入口如下:

到这里缓存就初始化完成了。

下面我们看一下Segment的定义,实现上跟ConcurrentHashMap的原理很像,因此不作详细介绍。具体可以看看ConcurrentHashMap的实现源码。

各种缓存的核心操作无外乎put/get/remove等。下面我们先抛开统计、LRU等,重点关注Guava Cache的put、get方法的实现。

下面是get方法的源码:

说明:一般缓存的get方法会去查找指定的key对应的value,如果不存在就直接返回null或者抛出异常,如OSCache就是抛出一个缓存需要刷新的异常,让用户进行put操作,Guava Cache这样的处理很有意思,在get获取不到或者过期的话,会通过我们提供的load方法将entry主动加载到缓存中来。

下面是get方法的核心源码,大部分说明都在注释中:

至此,Guava Cache从get以及put的核心部分已经分析完了。关于其余的部分细节以及各个数据结构的具体实现,可以好好研读源码,本文主要理通总体流程,分析其实现原理。

OSCache和Guava Cache在实现上有很大不同,但二者都是非常优秀的本地缓存框架,认真学习它们的实现原理和源码,对开发是大有裨益的。我们对其进行一下简要的对比:

OSCache和Guava Cache底层都是HashTable,但是二者又是不同的。OSCache对原有HashTable进行了扩展,在get方法上是没有加锁的,而是通过其他措施进行并发安全控制,因此读性能大幅度提高;Guava Cache也是对HashTable进行了扩展,原理类似于ConcurrentHashMap,通过分离锁等实现线程安全的同时,读写性能都大大提高,尤其在写上,也是可以并发的。

OSCache在get方法时,如果缓存过期或者不存在,会抛出需要刷新的异常,用户需要通过put方法进行刷新缓存,否则会发生死锁;而Guava Cache在get的时候,会通过用户重载的load方法,自动进行加载,十分方便。

在分析之前,先弄清数据结构的使用。之前的文章提到,OSCache使用了一个扩展的HashTable,作为缓存的数据结构,由于在get操作上,没有使用同步的方式,通过引入一个更新状态数据结构,来控制并发访问的安全。Guava Cache也是使用一个扩展的HashTable作为其缓存数据结构,然而,在实现上,和OSCache是完全不同的。Guava Cache所用的HashTable和ConcurrentHashMap十分相似,通过引入一个Segment数组,对HashTable进行分段,通过分离锁、final以及volatile的配合,实现了并发环境下的线程安全,同时,性能也非常高(每个Segment段的操作互不影响,即使写操作,只要在不同的Segment上,也完全可以并发的执行)。具体的原理,可以参考ConcurrentHashMap的实现,这里就不进行具体的剖析了。

数据结构核心部分可以通过下面的图形表示:

CacheBuilder

CacheBuilder集成了创建缓存所需的各种参数。正如官方文档介绍的:CacheBuilder将创建一个LoadingCache和Cache的实例,该实例可以包含下面任何特性

自动将内容加载到缓存中

LRU淘汰策略

根据上一次访问时间或写入时间决定缓存过期

key关键字可以采用弱引用(WeakReference)

value值可以采用弱引用(WeakReference)以及软引用(SoftReference)

缓存移除或回收进行通知

统计缓存访问性能信息

所有特性都是可选的,创建的缓存可以包含上面所有的特性,也可以都不使用,具有很强的灵活性。

下面是一个简单的使用例子:

LoadingCache<Key, Graph> graphs = CacheBuilder.newBuilder()

.maximumSize(10000)

.expireAfterWrite(10, TimeUnit.MINUTES)

.removalListener(MY_LISTENER)

.build(

new CacheLoader<Key, Graph>() {

public Graph load(Key key) throws AnyException {

return createExpensiveGraph(key);

}

});} 还可以这样写:String spec = "maximumSize=10000,expireAfterWrite=10m";

LoadingCache<Key, Graph> graphs = CacheBuilder.from(spec)

.removalListener(MY_LISTENER)

.build(

new CacheLoader<Key, Graph>() {

public Graph load(Key key) throws AnyException {

return createExpensiveGraph(key);

}

});} 说明:上面的例子指定Cache容量最大为10000,并且写入后经过10分钟自动过期,并指定了一个缓存移除的消息监听器,可以在缓存移除的时候,进行指定的操作。接下来,根据CacheBuilder的源码进行简要的分析:

CacheBuilder中一些重要的参数:

//默认容量

private static final int DEFAULT_INITIAL_CAPACITY = 16;

//默认并发程度(segement大小就是通过这个计算)

private static final int DEFAULT_CONCURRENCY_LEVEL = 4;

//默认失效时间

private static final int DEFAULT_EXPIRATION_NANOS = 0;

//默认刷新时间

private static final int DEFAULT_REFRESH_NANOS = 0;

//默认性能计数器

static final Supplier<? extends StatsCounter> NULL_STATS_COUNTER = Suppliers.ofInstance(

new StatsCounter() {

@Override

public void recordHits(int count) {}

@Override

public void recordMisses(int count) {}

@Override

public void recordLoadSuccess(long loadTime) {}

@Override

public void recordLoadException(long loadTime) {}

@Override

public void recordEviction() {}

@Override

public CacheStats snapshot() {

return EMPTY_STATS;

}

});

static final CacheStats EMPTY_STATS = new CacheStats(0, 0, 0, 0, 0, 0);

static final Supplier<StatsCounter> CACHE_STATS_COUNTER =

new Supplier<StatsCounter>() {

@Override

public StatsCounter get() {

return new SimpleStatsCounter();

}

};

//移除事件监听器(默认为空)

enum NullListener implements RemovalListener<Object, Object> {

INSTANCE;

@Override

public void onRemoval(RemovalNotification<Object, Object> notification) {}

}

enum OneWeigher implements Weigher<Object, Object> {

INSTANCE;

@Override

public int weigh(Object key, Object value) {

return 1;

}

}

static final Ticker NULL_TICKER = new Ticker() {

@Override

public long read() {

return 0;

}

};

static final int UNSET_INT = -1;

boolean strictParsing = true;

//初始容量

int initialCapacity = UNSET_INT;

//并发程度,Segment数组的大小通过这个进行计算,后面会进行介绍

int concurrencyLevel = UNSET_INT;

//缓存最大容量

long maximumSize = UNSET_INT;

//

long maximumWeight = UNSET_INT;

Weigher<? super K, ? super V> weigher;

//引用类型(默认都为强引用)

Strength keyStrength;

Strength valueStrength;

//写入后过期时间

long expireAfterWriteNanos = UNSET_INT;

//读取后过期时间

long expireAfterAccessNanos = UNSET_INT;

//刷新时间

long refreshNanos = UNSET_INT;

//判断是否相同的方法(因为有引用类型可以为弱引用和软引用)

Equivalence<Object> keyEquivalence;

Equivalence<Object> valueEquivalence;

RemovalListener<? super K, ? super V> removalListener;

Ticker ticker;

Supplier<? extends StatsCounter> statsCounterSupplier = NULL_STATS_COUNTER;说明:上面就是创建缓存涉及的参数,我们可以人工指定,也可以使用默认值。我们可以看看NULL_STATS_COUNTER、NullListener的定义,其对各个方法的实现进行了重写,函数内容直接为空,这也是为了不影响性能的做法。CacheBuilder将创建缓存方法进行了封装,是值得我们借鉴的地方。

Guava Cache对于缓存的key和value提供了多种引用类型,默认情况下,两者都是强引用类型。关于引用类型的枚举定义如下:

STRONG {

@Override

<K, V> ValueReference<K, V> referenceValue(

Segment<K, V> segment, ReferenceEntry<K, V> entry, V value, int weight) {

return (weight == 1)

? new StrongValueReference<K, V>(value)

: new WeightedStrongValueReference<K, V>(value, weight);

}

@Override

Equivalence<Object> defaultEquivalence() {

return Equivalence.equals();

}

},

SOFT {

@Override

<K, V> ValueReference<K, V> referenceValue(

Segment<K, V> segment, ReferenceEntry<K, V> entry, V value, int weight) {

return (weight == 1)

? new SoftValueReference<K, V>(segment.valueReferenceQueue, value, entry)

: new WeightedSoftValueReference<K, V>(

segment.valueReferenceQueue, value, entry, weight);

}

@Override

Equivalence<Object> defaultEquivalence() {

return Equivalence.identity();

}

},

WEAK {

@Override

<K, V> ValueReference<K, V> referenceValue(

Segment<K, V> segment, ReferenceEntry<K, V> entry, V value, int weight) {

return (weight == 1)

? new WeakValueReference<K, V>(segment.valueReferenceQueue, value, entry)

: new WeightedWeakValueReference<K, V>(

segment.valueReferenceQueue, value, entry, weight);

}

@Override

Equivalence<Object> defaultEquivalence() {

return Equivalence.identity();

}

}; 值得注意的是,Equivalence<Object> defaultEquivalence()是不同的,这也正对应了上面Equivalence<Object> keyEquivalence;和Equivalence<Object> valueEquivalence;两个参数。对于强引用来说,直接使用equal进行判断对象是否相同,但对于弱引用和软引用,采用的identity方法。关于这里的的细节,会有单独章节进行讨论。本章节以STRONG进行分析。LocalCache

这一部分结合文章开头给出的数据结构图解,就很容易理解了。

首先查看LocalCache下的成员变量:

static final int MAXIMUM_CAPACITY = 1 << 30:缓存最大容量,该数值必须是2的幂,同时小于这个最大值2^30

static final int MAX_SEGMENTS = 1 << 16:Segment数组最大容量

static final int CONTAINS_VALUE_RETRIES = 3:containsValue方法的重试次数

static final int DRAIN_THRESHOLD = 0x3F(63):Number of cache access operations that can be buffered per segment before the cache's recency ordering information is updated. This is used to avoid lock contention by recording a memento of reads and delaying a lock acquisition until the threshold is crossed or a mutation occurs.

static final int DRAIN_MAX = 16:一次清理操作中,最大移除的entry数量

final int segmentMask:定位segment

final int segmentShift:定位segment,同时让entry分布均匀,尽量平均分布在每个segment[i]中

final Segment<K, V>[] segments:segment数组,每个元素下都是一个HashTable

final int concurrencyLevel:并发程度,用来计算segment数组的大小。segment数组的大小正决定了并发的程度

final Equivalence<Object> keyEquivalence:key比较方式

final Equivalence<Object> valueEquivalence:value比较方式

final Strength keyStrength:key引用类型

final Strength valueStrength:value引用类型

final long maxWeight:最大权重

final Weigher<K, V> weigher:计算每个entry权重的接口

final long expireAfterAccessNanos:一个entry访问后多久过期

final long expireAfterWriteNanos:一个entry写入后多久过期

final long refreshNanos:一个entry写入多久后进行刷新

final Queue<RemovalNotification<K, V>> removalNotificationQueue:移除监听器使用队列

final RemovalListener<K, V> removalListener:entry过期移除或者gc回收(弱引用和软引用)将会通知的监听器

final Ticker ticker:统计时间

final EntryFactory entryFactory:创建entry的工厂

final StatsCounter globalStatsCounter:全局缓存性能统计器(命中、未命中、put成功、失败次数等)

final CacheLoader<? super K, V> defaultLoader:默认的缓存加载器

LocalCache构造入口如下:

LocalCache(CacheBuilder<? super K, ? super V> builder, @Nullable CacheLoader<? super K, V> loader)其中,builder就是通过CacheBuilder创建的实例,这个在上面的小节中已经讲解了,下面看一下LocalCache初始化部分的代码:

LocalCache(

CacheBuilder<? super K, ? super V> builder, @Nullable CacheLoader<? super K, V> loader) {

//并发程度,根据我们传的参数和默认最大值中选取小者。

//如果没有指定该参数的情况下,CacheBuilder将其置为UNSET_INT即为-1

//getConcurrencyLevel方法获取时,如果为-1就返回默认值4

//否则返回用户传入的参数

concurrencyLevel = Math.min(builder.getConcurrencyLevel(), MAX_SEGMENTS);

//键值的引用类型,没有指定的话,默认为强引用类型

keyStrength = builder.getKeyStrength();

valueStrength = builder.getValueStrength();

//判断相同的方法,强引用类型就是Equivalence.equals()

keyEquivalence = builder.getKeyEquivalence();

valueEquivalence = builder.getValueEquivalence();

maxWeight = builder.getMaximumWeight();

weigher = builder.getWeigher();

expireAfterAccessNanos = builder.getExpireAfterAccessNanos();

expireAfterWriteNanos = builder.getExpireAfterWriteNanos();

refreshNanos = builder.getRefreshNanos();

//移除消息监听器

removalListener = builder.getRemovalListener();

//如果我们指定了移除消息监听器的话,会创建一个队列,临时保存移除的内容

removalNotificationQueue = (removalListener == NullListener.INSTANCE)

? LocalCache.<RemovalNotification<K, V>>discardingQueue()

: new ConcurrentLinkedQueue<RemovalNotification<K, V>>();

ticker = builder.getTicker(recordsTime());

//创建新的缓存内容(entry)的工厂,会根据引用类型选择对应的工厂

entryFactory = EntryFactory.getFactory(keyStrength, usesAccessEntries(), usesWriteEntries());

globalStatsCounter = builder.getStatsCounterSupplier().get();

defaultLoader = loader;

//初始化缓存容量,默认为16

int initialCapacity = Math.min(builder.getInitialCapacity(), MAXIMUM_CAPACITY);

if (evictsBySize() && !customWeigher()) {

initialCapacity = Math.min(initialCapacity, (int) maxWeight);

}

// Find the lowest power-of-two segmentCount that exceeds concurrencyLevel, unless

// maximumSize/Weight is specified in which case ensure that each segment gets at least 10

// entries. The special casing for size-based eviction is only necessary because that eviction

// happens per segment instead of globally, so too many segments compared to the maximum size

// will result in random eviction behavior.

int segmentShift = 0;

int segmentCount = 1;

//根据并发程度来计算segement数组的大小(大于等于concurrencyLevel的最小的2的幂,这里即为4)

while (segmentCount < concurrencyLevel

&& (!evictsBySize() || segmentCount * 20 <= maxWeight)) {

++segmentShift;

segmentCount <<= 1;

}

//这里的segmentShift和segmentMask用来打散entry,让缓存内容尽量均匀分布在每个segment下

this.segmentShift = 32 - segmentShift;

segmentMask = segmentCount - 1;

//这里进行初始化segment数组,大小即为4

this.segments = newSegmentArray(segmentCount);

//每个segment的容量,总容量/segment的大小,向上取整,这里就是16/4=4

int segmentCapacity = initialCapacity / segmentCount;

if (segmentCapacity * segmentCount < initialCapacity) {

++segmentCapacity;

}

//这里计算每个Segment[i]下的table的大小

int segmentSize = 1;

//SegmentSize为小于segmentCapacity的最大的2的幂,这里为4

while (segmentSize < segmentCapacity) {

segmentSize <<= 1;

}

//初始化每个segment[i]

//注:根据权重的方法使用较少,这里走else分支

if (evictsBySize()) {

// Ensure sum of segment max weights = overall max weights

long maxSegmentWeight = maxWeight / segmentCount + 1;

long remainder = maxWeight % segmentCount;

for (int i = 0; i < this.segments.length; ++i) {

if (i == remainder) {

maxSegmentWeight--;

}

this.segments[i] =

createSegment(segmentSize, maxSegmentWeight, builder.getStatsCounterSupplier().get());

}

} else {

for (int i = 0; i < this.segments.length; ++i) {

this.segments[i] =

createSegment(segmentSize, UNSET_INT, builder.getStatsCounterSupplier().get());

}

}

}到这里缓存就初始化完成了。

下面我们看一下Segment的定义,实现上跟ConcurrentHashMap的原理很像,因此不作详细介绍。具体可以看看ConcurrentHashMap的实现源码。

static class Segment<K, V> extends ReentrantLock {

final LocalCache<K, V> map;

/**

* The number of live elements in this segment's region.

*/

volatile int count;

/**

* The weight of the live elements in this segment's region.

*/

@GuardedBy("this")

long totalWeight;

/**

* Number of updates that alter the size of the table. This is used during bulk-read methods to

* make sure they see a consistent snapshot: If modCounts change during a traversal of segments

* loading size or checking containsValue, then we might have an inconsistent view of state

* so (usually) must retry.

*/

int modCount;

/**

* The table is expanded when its size exceeds this threshold. (The value of this field is

* always {@code (int) (capacity * 0.75)}.)

*/

int threshold;

/**

* The per-segment table.

*/

volatile AtomicReferenceArray<ReferenceEntry<K, V>> table;

/**

* The maximum weight of this segment. UNSET_INT if there is no maximum.

*/

final long maxSegmentWeight;

/**

* The key reference queue contains entries whose keys have been garbage collected, and which

* need to be cleaned up internally.

*/

final ReferenceQueue<K> keyReferenceQueue;

/**

* The value reference queue contains value references whose values have been garbage collected,

* and which need to be cleaned up internally.

*/

final ReferenceQueue<V> valueReferenceQueue;

/**

* The recency queue is used to record which entries were accessed for updating the access

* list's ordering. It is drained as a batch operation when either the DRAIN_THRESHOLD is

* crossed or a write occurs on the segment.

*/

final Queue<ReferenceEntry<K, V>> recencyQueue;

/**

* A counter of the number of reads since the last write, used to drain queues on a small

* fraction of read operations.

*/

final AtomicInteger readCount = new AtomicInteger();

/**

* A queue of elements currently in the map, ordered by write time. Elements are added to the

* tail of the queue on write.

*/

@GuardedBy("this")

final Queue<ReferenceEntry<K, V>> writeQueue;

/**

* A queue of elements currently in the map, ordered by access time. Elements are added to the

* tail of the queue on access (note that writes count as accesses).

*/

@GuardedBy("this")

final Queue<ReferenceEntry<K, V>> accessQueue;

/** Accumulates cache statistics. */

final StatsCounter statsCounter;

} 注意到其中有几个队列,keyReferenceQueue和valueReferenceQueue,在弱引用或软引用情况下gc回收的内容会放入这两个队列,accessQueue,用来进行LRU替换算法,recencyQueue记录哪些entry被访问,用于accessQueue的更新。各种缓存的核心操作无外乎put/get/remove等。下面我们先抛开统计、LRU等,重点关注Guava Cache的put、get方法的实现。

下面是get方法的源码:

@Override

public V get(K key) throws ExecutionException {

return localCache.getOrLoad(key);

}

V getOrLoad(K key) throws ExecutionException {

return get(key, defaultLoader);

}

V get(K key, CacheLoader<? super K, V> loader) throws ExecutionException {

//这里对哈希再哈希(Wang/Jenkins方法,为了进一步降低冲突)的细节暂时不讲,重点关注后面的get方法

int hash = hash(checkNotNull(key));

//根据hash找到对应的那个segment

return segmentFor(hash).get(key, hash, loader);

}

Segment<K, V> segmentFor(int hash) {

return segments[(hash >>> segmentShift) & segmentMask];

}

V get(K key, int hash, CacheLoader<? super K, V> loader) throws ExecutionException {

//key和loader不能为null(空指针异常)

checkNotNull(key);

checkNotNull(loader);

try {

//count保存的是该sengment中缓存的数量,如果为0,就直接去载入

if (count != 0) { // read-volatile

// don't call getLiveEntry, which would ignore loading values

ReferenceEntry<K, V> e = getEntry(key, hash);

//e != null说明缓存中已存在

if (e != null) {

long now = map.ticker.read();

//getLiveValue在entry无效、过期、正在载入都会返回null,如果返回不为空,就是正常命中

V value = getLiveValue(e, now);

if (value != null) {

recordRead(e, now);

//性能统计

statsCounter.recordHits(1);

//根据用户是否设置距离上次访问或者写入一段时间会过期,进行刷新或者直接返回

return scheduleRefresh(e, key, hash, value, now, loader);

}

ValueReference<K, V> valueReference = e.getValueReference();

if (valueReference.isLoading()) {

//如果正在加载中,等待加载完成获取

return waitForLoadingValue(e, key, valueReference);

}

}

}

//如果不存在或者过期,就通过loader方法进行加载

return lockedGetOrLoad(key, hash, loader);

} catch (ExecutionException ee) {

Throwable cause = ee.getCause();

if (cause instanceof Error) {

throw new ExecutionError((Error) cause);

} else if (cause instanceof RuntimeException) {

throw new UncheckedExecutionException(cause);

}

throw ee;

} finally {

//清理。通常情况下,清理操作会伴随写入进行,但是如果很久不写入的话,就需要读线程进行完成

//那么这个“很久”是多久呢?还记得前面我们设置了一个参数DRAIN_THRESHOLD=63吧

//而我们的判断条件就是if ((readCount.incrementAndGet() & DRAIN_THRESHOLD) == 0)

//条件成立,才会执行清理,也就是说,连续读取64次就会执行一次清理操作

//具体是如何清理的,后面再介绍,这里仅关注核心流程

postReadCleanup();

}

}说明:一般缓存的get方法会去查找指定的key对应的value,如果不存在就直接返回null或者抛出异常,如OSCache就是抛出一个缓存需要刷新的异常,让用户进行put操作,Guava Cache这样的处理很有意思,在get获取不到或者过期的话,会通过我们提供的load方法将entry主动加载到缓存中来。

下面是get方法的核心源码,大部分说明都在注释中:

V lockedGetOrLoad(K key, int hash, CacheLoader<? super K, V> loader)

throws ExecutionException {

ReferenceEntry<K, V> e;

ValueReference<K, V> valueReference = null;

LoadingValueReference<K, V> loadingValueReference = null;

boolean createNewEntry = true;

//加锁

lock();

try {

// re-read ticker once inside the lock

long now = map.ticker.read();

preWriteCleanup(now);

int newCount = this.count - 1;

//当前segment下的HashTable

AtomicReferenceArray<ReferenceEntry<K, V>> table = this.table;

//这里也是为什么table的大小要为2的幂(最后index范围刚好在0-table.length()-1)

int index = hash & (table.length() - 1);

ReferenceEntry<K, V> first = table.get(index);

//在链表上查找

for (e = first; e != null; e = e.getNext()) {

K entryKey = e.getKey();

if (e.getHash() == hash && entryKey != null

&& map.keyEquivalence.equivalent(key, entryKey)) {

valueReference = e.getValueReference();

//如果正在载入中,就不需要创建,只需要等待载入完成读取即可

if (valueReference.isLoading()) {

createNewEntry = false;

} else {

V value = valueReference.get();

// 被gc回收(在弱引用和软引用的情况下会发生)

if (value == null) {

enqueueNotification(entryKey, hash, valueReference, RemovalCause.COLLECTED);

} else if (map.isExpired(e, now)) {

// 过期

enqueueNotification(entryKey, hash, valueReference, RemovalCause.EXPIRED);

} else {

//存在并且没有过期,更新访问队列并记录命中信息,返回value

recordLockedRead(e, now);

statsCounter.recordHits(1);

// we were concurrent with loading; don't consider refresh

return value;

}

// 对于被gc回收和过期的情况,从写队列和访问队列中移除

// 因为在后面重新载入后,会再次添加到队列中

writeQueue.remove(e);

accessQueue.remove(e);

this.count = newCount; // write-volatile

}

break;

}

}

if (createNewEntry) {

//先创建一个loadingValueReference,表示正在载入

loadingValueReference = new LoadingValueReference<K, V>();

if (e == null) {

//如果当前链表为空,先创建一个头结点

e = newEntry(key, hash, first);

e.setValueReference(loadingValueReference);

table.set(index, e);

} else {

e.setValueReference(loadingValueReference);

}

}

} finally {

//解锁

unlock();

//执行清理

postWriteCleanup();

}

if (createNewEntry) {

try {

// Synchronizes on the entry to allow failing fast when a recursive load is

// detected. This may be circumvented when an entry is copied, but will fail fast most

// of the time.

synchronized (e) {

//异步加载

return loadSync(key, hash, loadingValueReference, loader);

}

} finally {

//记录未命中

statsCounter.recordMisses(1);

}

} else {

// 等待加载进来然后读取即可

return waitForLoadingValue(e, key, valueReference);

}

}下面是异步载入的代码:V loadSync(K key, int hash, LoadingValueReference<K, V> loadingValueReference,

CacheLoader<? super K, V> loader) throws ExecutionException {

//这里通过我们重写的load方法,根据key,将value载入

ListenableFuture<V> loadingFuture = loadingValueReference.loadFuture(key, loader);

return getAndRecordStats(key, hash, loadingValueReference, loadingFuture);

}

//等待载入,并记录载入成功或失败

V getAndRecordStats(K key, int hash, LoadingValueReference<K, V> loadingValueReference,

ListenableFuture<V> newValue) throws ExecutionException {

V value = null;

try {

value = getUninterruptibly(newValue);

if (value == null) {

throw new InvalidCacheLoadException("CacheLoader returned null for key " + key + ".");

}

//性能统计信息记录载入成功

statsCounter.recordLoadSuccess(loadingValueReference.elapsedNanos());

//这个方法才是真正的将缓存内容加载完成(当前还是loadingValueReference,表示isLoading)

storeLoadedValue(key, hash, loadingValueReference, value);

return value;

} finally {

if (value == null) {

statsCounter.recordLoadException(loadingValueReference.elapsedNanos());

removeLoadingValue(key, hash, loadingValueReference);

}

}

}

boolean storeLoadedValue(K key, int hash, LoadingValueReference<K, V> oldValueReference,

V newValue) {

lock();

try {

long now = map.ticker.read();

preWriteCleanup(now);

int newCount = this.count + 1;

if (newCount > this.threshold) { // ensure capacity

expand();

newCount = this.count + 1;

}

AtomicReferenceArray<ReferenceEntry<K, V>> table = this.table;

int index = hash & (table.length() - 1);

ReferenceEntry<K, V> first = table.get(index);

//找到

for (ReferenceEntry<K, V> e = first; e != null; e = e.getNext()) {

K entryKey = e.getKey();

if (e.getHash() == hash && entryKey != null

&& map.keyEquivalence.equivalent(key, entryKey)) {

ValueReference<K, V> valueReference = e.getValueReference();

V entryValue = valueReference.get();

// replace the old LoadingValueReference if it's live, otherwise

// perform a putIfAbsent

if (oldValueReference == valueReference

|| (entryValue == null && valueReference != UNSET)) {

++modCount;

if (oldValueReference.isActive()) {

RemovalCause cause =

(entryValue == null) ? RemovalCause.COLLECTED : RemovalCause.REPLACED;

enqueueNotification(key, hash, oldValueReference, cause);

newCount--;

}

//LoadingValueReference变成对应引用类型的ValueReference,并进行赋值

setValue(e, key, newValue, now);

//volatile写入

this.count = newCount; // write-volatile

evictEntries();

return true;

}

// the loaded value was already clobbered

valueReference = new WeightedStrongValueReference<K, V>(newValue, 0);

enqueueNotification(key, hash, valueReference, RemovalCause.REPLACED);

return false;

}

}

++modCount;

ReferenceEntry<K, V> newEntry = newEntry(key, hash, first);

setValue(newEntry, key, newValue, now);

table.set(index, newEntry);

this.count = newCount; // write-volatile

evictEntries();

return true;

} finally {

unlock();

postWriteCleanup();

}

}至此,Guava Cache从get以及put的核心部分已经分析完了。关于其余的部分细节以及各个数据结构的具体实现,可以好好研读源码,本文主要理通总体流程,分析其实现原理。

OSCache和Guava Cache在实现上有很大不同,但二者都是非常优秀的本地缓存框架,认真学习它们的实现原理和源码,对开发是大有裨益的。我们对其进行一下简要的对比:

OSCache和Guava Cache底层都是HashTable,但是二者又是不同的。OSCache对原有HashTable进行了扩展,在get方法上是没有加锁的,而是通过其他措施进行并发安全控制,因此读性能大幅度提高;Guava Cache也是对HashTable进行了扩展,原理类似于ConcurrentHashMap,通过分离锁等实现线程安全的同时,读写性能都大大提高,尤其在写上,也是可以并发的。

OSCache在get方法时,如果缓存过期或者不存在,会抛出需要刷新的异常,用户需要通过put方法进行刷新缓存,否则会发生死锁;而Guava Cache在get的时候,会通过用户重载的load方法,自动进行加载,十分方便。

相关文章推荐

- 页面缓存:内存和文件之间的那些事

- 浅析SQL Server中的执行计划缓存(上)

- Enterprise Library for .NET Framework 2.0缓存使用实例

- PowerShell中编程清空IE缓存方法

- PowerShell中使用.NET将程序集加入全局程序集缓存

- C#中缓存的基本用法总结

- Android实现图片异步加载并缓存到本地

- wap开发中如何有效的利用缓存减少消息的传送量

- PHP基于文件存储实现缓存的方法

- PHP strtotime函数用法、实现原理和源码分析

- smarty缓存用法分析

- 在ASP.NET 2.0中操作数据之五十九:使用SQL缓存依赖项SqlCacheDependency

- 在ASP.NET 2.0中操作数据之五十八:在程序启动阶段缓存数据

- 在ASP.NET 2.0中操作数据之五十七:在分层架构中缓存数据

- 引用全局程序集缓存内的程序集的方法

- asp Response.flush 实时显示进度

- C#实现清除IE浏览器缓存的方法

- jQuery 源码分析笔记(3) Deferred机制

- ASP.NET缓存管理的几种方法

- PHP文件缓存类实现代码