The context Object in MapReduce Program Development

2016-07-10 13:37


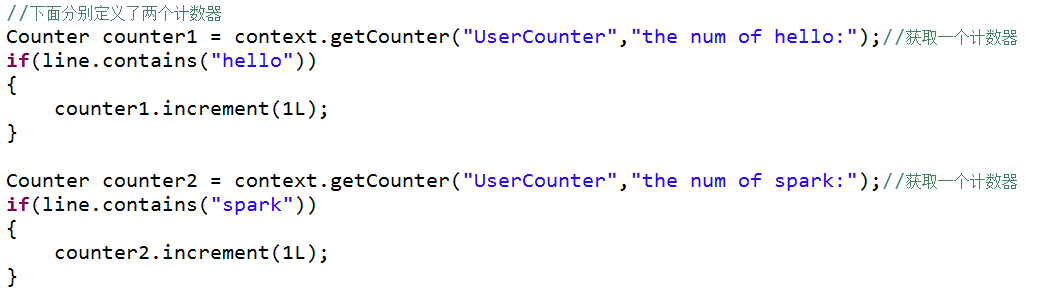

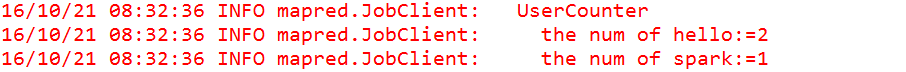

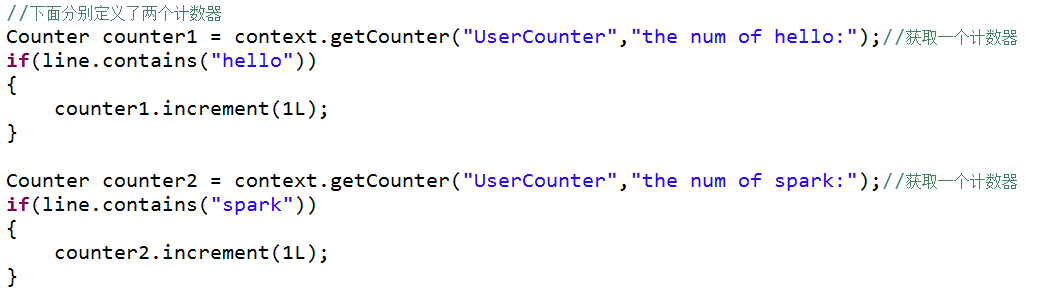

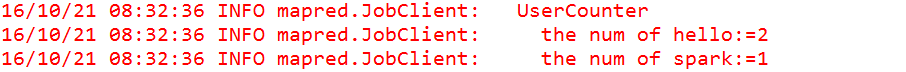


Details:

This post uses the classic WordCount program to show how context is used:

Here is the code:

    package MapReduce;

    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataInputStream;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IOUtils; // Hadoop's own IOUtils, not the ZooKeeper one
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Counter;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.JobID;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.FileSplit;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;
    import org.apache.hadoop.mapreduce.lib.partition.HashPartitioner;

    public class WordCount
    {
        public static String path1 = "file:///C:\\word.txt";
        public static String path2 = "file:///C:\\dirout\\";

        public static void main(String[] args) throws Exception
        {
            Configuration conf = new Configuration();
            // Get a FileSystem instance (the local file system in this local run)
            FileSystem fileSystem = FileSystem.get(conf);
            // Delete the output directory if it already exists, otherwise the job fails
            if (fileSystem.exists(new Path(path2)))
            {
                fileSystem.delete(new Path(path2), true);
            }

            Job job = Job.getInstance(conf, "wordcount");
            // Left commented out for the local run; needed when the job is submitted as a jar
            //job.setJarByClass(WordCount.class);

            FileInputFormat.setInputPaths(job, new Path(path1));
            job.setInputFormatClass(TextInputFormat.class);
            job.setMapperClass(MyMapper.class);
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(LongWritable.class);

            job.setNumReduceTasks(1);
            job.setPartitionerClass(HashPartitioner.class);

            job.setReducerClass(MyReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(LongWritable.class);
            job.setOutputFormatClass(TextOutputFormat.class);
            FileOutputFormat.setOutputPath(job, new Path(path2));

            job.waitForCompletion(true);

            // Print the job output
            FSDataInputStream fr = fileSystem.open(new Path("file:///C:\\dirout\\part-r-00000"));
            IOUtils.copyBytes(fr, System.out, 1024, true);
        }

        public static class MyMapper extends Mapper<LongWritable, Text, Text, LongWritable>
        {
            @Override
            protected void map(LongWritable k1, Text v1, Context context) throws IOException, InterruptedException
            {
                // Use context to read the current record: its key/value pair
                LongWritable key = context.getCurrentKey();
                System.out.println("Start offset of '" + v1.toString() + "': " + key.get());
                Text value = context.getCurrentValue();
                System.out.println("Current line: " + value.toString());

                // Get information about the file the current line comes from
                FileSplit inputSplit = (FileSplit) context.getInputSplit();
                Path path = inputSplit.getPath();
                System.out.println("File path of '" + v1.toString() + "': " + path);
                String filename = path.getName();
                System.out.println("File name of '" + v1.toString() + "': " + filename);
                System.out.println("----------------------------------------------------------");

                // Get a counter from context and count lines containing a sensitive word
                Counter counter = context.getCounter("Sensitive Word", "sensitiveword");
                if (v1.toString().contains("fenlie"))
                {
                    counter.increment(1L); // the line contains the sensitive word "fenlie": add 1 to the custom counter
                }

                // Emit (word, 1) for every tab-separated token on the line
                String[] splited = v1.toString().split("\t");
                for (String string : splited)
                {
                    context.write(new Text(string), new LongWritable(1L));
                }
            }

            @Override
            protected void cleanup(Context context) throws IOException, InterruptedException
            {
                // Job-level information is also available through context
                String jobName = context.getJobName();
                System.out.println("Current job name: " + jobName);
                JobID jobID = context.getJobID();
                System.out.println("Current job ID: " + jobID);
                Configuration conf = context.getConfiguration();
                System.out.println("Configuration resources loaded: " + conf);
                String user = context.getUser();
                System.out.println("Current user: " + user);
            }
        }

        public static class MyReducer extends Reducer<Text, LongWritable, Text, LongWritable>
        {
            @Override
            protected void reduce(Text k2, Iterable<LongWritable> v2s, Context context) throws IOException, InterruptedException
            {
                // Sum the 1s emitted for this word
                long sum = 0L;
                for (LongWritable v2 : v2s)
                {
                    sum += v2.get();
                }
                context.write(k2, new LongWritable(sum));
            }
        }
    }

Output:

    2016-07-10 13:29:56,715 INFO [main] Configuration.deprecation (Configuration.java:warnOnceIfDeprecated(1009)) - session.id is deprecated. Instead, use dfs.metrics.session-id
    2016-07-10 13:29:56,719 INFO [main] jvm.JvmMetrics (JvmMetrics.java:init(76)) - Initializing JVM Metrics with processName=JobTracker, sessionId=
    2016-07-10 13:29:57,138 WARN [main] mapreduce.JobSubmitter (JobSubmitter.java:copyAndConfigureFiles(150)) - Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.
    2016-07-10 13:29:57,142 WARN [main] mapreduce.JobSubmitter (JobSubmitter.java:copyAndConfigureFiles(259)) - No job jar file set. User classes may not be found. See Job or Job#setJar(String).
    2016-07-10 13:29:57,151 INFO [main] input.FileInputFormat (FileInputFormat.java:listStatus(280)) - Total input paths to process : 1
    2016-07-10 13:29:57,202 INFO [main] mapreduce.JobSubmitter (JobSubmitter.java:submitJobInternal(396)) - number of splits:1
    2016-07-10 13:29:57,350 INFO [main] mapreduce.JobSubmitter (JobSubmitter.java:printTokens(479)) - Submitting tokens for job: job_local492959629_0001
    2016-07-10 13:29:57,426 WARN [main] conf.Configuration (Configuration.java:loadProperty(2358)) - file:/tmp/hadoop-Administrator/mapred/staging/Administrator492959629/.staging/job_local492959629_0001/job.xml:an attempt to override final parameter: mapreduce.job.end-notification.max.retry.interval; Ignoring.
    2016-07-10 13:29:57,436 WARN [main] conf.Configuration (Configuration.java:loadProperty(2358)) - file:/tmp/hadoop-Administrator/mapred/staging/Administrator492959629/.staging/job_local492959629_0001/job.xml:an attempt to override final parameter: mapreduce.job.end-notification.max.attempts; Ignoring.
    2016-07-10 13:29:57,648 WARN [main] conf.Configuration (Configuration.java:loadProperty(2358)) - file:/tmp/hadoop-Administrator/mapred/local/localRunner/Administrator/job_local492959629_0001/job_local492959629_0001.xml:an attempt to override final parameter: mapreduce.job.end-notification.max.retry.interval; Ignoring.
    2016-07-10 13:29:57,656 WARN [main] conf.Configuration (Configuration.java:loadProperty(2358)) - file:/tmp/hadoop-Administrator/mapred/local/localRunner/Administrator/job_local492959629_0001/job_local492959629_0001.xml:an attempt to override final parameter: mapreduce.job.end-notification.max.attempts; Ignoring.
    2016-07-10 13:29:57,663 INFO [main] mapreduce.Job (Job.java:submit(1289)) - The url to track the job: http://localhost:8080/
    2016-07-10 13:29:57,664 INFO [main] mapreduce.Job (Job.java:monitorAndPrintJob(1334)) - Running job: job_local492959629_0001
    2016-07-10 13:29:57,667 INFO [Thread-3] mapred.LocalJobRunner (LocalJobRunner.java:createOutputCommitter(471)) - OutputCommitter set in config null
    2016-07-10 13:29:57,673 INFO [Thread-3] mapred.LocalJobRunner (LocalJobRunner.java:createOutputCommitter(489)) - OutputCommitter is org.apache.hadoop.mapreduce.lib.output.FileOutputCommitter
    2016-07-10 13:29:57,753 INFO [Thread-3] mapred.LocalJobRunner (LocalJobRunner.java:runTasks(448)) - Waiting for map tasks
    2016-07-10 13:29:57,754 INFO [LocalJobRunner Map Task Executor #0] mapred.LocalJobRunner (LocalJobRunner.java:run(224)) - Starting task: attempt_local492959629_0001_m_000000_0
    2016-07-10 13:29:57,788 INFO [LocalJobRunner Map Task Executor #0] util.ProcfsBasedProcessTree (ProcfsBasedProcessTree.java:isAvailable(182)) - ProcfsBasedProcessTree currently is supported only on Linux.
    2016-07-10 13:29:58,098 INFO [LocalJobRunner Map Task Executor #0] mapred.Task (Task.java:initialize(581)) - Using ResourceCalculatorProcessTree : org.apache.hadoop.yarn.util.WindowsBasedProcessTree@3030ff48
    2016-07-10 13:29:58,103 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:runNewMapper(733)) - Processing split: file:/C:/word.txt:0+64
    2016-07-10 13:29:58,115 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:createSortingCollector(388)) - Map output collector class = org.apache.hadoop.mapred.MapTask$MapOutputBuffer
    2016-07-10 13:29:58,162 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:setEquator(1182)) - (EQUATOR) 0 kvi 26214396(104857584)
    2016-07-10 13:29:58,162 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:init(975)) - mapreduce.task.io.sort.mb: 100
    2016-07-10 13:29:58,162 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:init(976)) - soft limit at 83886080
    2016-07-10 13:29:58,163 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:init(977)) - bufstart = 0; bufvoid = 104857600
    2016-07-10 13:29:58,163 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:init(978)) - kvstart = 26214396; length = 6553600
    Start offset of 'hello you': 0
    Current line: hello you
    File path of 'hello you': file:/C:/word.txt
    File name of 'hello you': word.txt
    ----------------------------------------------------------
    Start offset of 'hello me': 11
    Current line: hello me
    File path of 'hello me': file:/C:/word.txt
    File name of 'hello me': word.txt
    ----------------------------------------------------------
    Start offset of 'hello she': 21
    Current line: hello she
    File path of 'hello she': file:/C:/word.txt
    File name of 'hello she': word.txt
    ----------------------------------------------------------
    Start offset of 'hello he': 32
    Current line: hello he
    File path of 'hello he': file:/C:/word.txt
    File name of 'hello he': word.txt
    ----------------------------------------------------------
    Start offset of 'hello he': 42
    Current line: hello he
    File path of 'hello he': file:/C:/word.txt
    File name of 'hello he': word.txt
    ----------------------------------------------------------
    Start offset of 'fenlie hello': 52
    Current line: fenlie hello
    File path of 'fenlie hello': file:/C:/word.txt
    File name of 'fenlie hello': word.txt
    ----------------------------------------------------------
    Current job name: wordcount
    Current job ID: job_local492959629_0001
    Configuration resources loaded: Configuration: core-default.xml, core-site.xml, mapred-default.xml, mapred-site.xml, yarn-default.xml, yarn-site.xml, hdfs-default.xml, hdfs-site.xml, file:/tmp/hadoop-Administrator/mapred/local/localRunner/Administrator/job_local492959629_0001/job_local492959629_0001.xml
    Current user: Administrator
    2016-07-10 13:29:58,177 INFO [LocalJobRunner Map Task Executor #0] mapred.LocalJobRunner (LocalJobRunner.java:statusUpdate(591)) -
    2016-07-10 13:29:58,177 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:flush(1437)) - Starting flush of map output
    2016-07-10 13:29:58,178 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:flush(1455)) - Spilling map output
    2016-07-10 13:29:58,178 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:flush(1456)) - bufstart = 0; bufend = 156; bufvoid = 104857600
    2016-07-10 13:29:58,178 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:flush(1458)) - kvstart = 26214396(104857584); kvend = 26214352(104857408); length = 45/6553600
    2016-07-10 13:29:58,202 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:sortAndSpill(1641)) - Finished spill 0
    2016-07-10 13:29:58,212 INFO [LocalJobRunner Map Task Executor #0] mapred.Task (Task.java:done(995)) - Task:attempt_local492959629_0001_m_000000_0 is done. And is in the process of committing
    2016-07-10 13:29:58,224 INFO [LocalJobRunner Map Task Executor #0] mapred.LocalJobRunner (LocalJobRunner.java:statusUpdate(591)) - map
    2016-07-10 13:29:58,224 INFO [LocalJobRunner Map Task Executor #0] mapred.Task (Task.java:sendDone(1115)) - Task 'attempt_local492959629_0001_m_000000_0' done.
    2016-07-10 13:29:58,224 INFO [LocalJobRunner Map Task Executor #0] mapred.LocalJobRunner (LocalJobRunner.java:run(249)) - Finishing task: attempt_local492959629_0001_m_000000_0
    2016-07-10 13:29:58,225 INFO [Thread-3] mapred.LocalJobRunner (LocalJobRunner.java:runTasks(456)) - map task executor complete.
    2016-07-10 13:29:58,229 INFO [Thread-3] mapred.LocalJobRunner (LocalJobRunner.java:runTasks(448)) - Waiting for reduce tasks
    2016-07-10 13:29:58,229 INFO [pool-3-thread-1] mapred.LocalJobRunner (LocalJobRunner.java:run(302)) - Starting task: attempt_local492959629_0001_r_000000_0
    2016-07-10 13:29:58,247 INFO [pool-3-thread-1] util.ProcfsBasedProcessTree (ProcfsBasedProcessTree.java:isAvailable(182)) - ProcfsBasedProcessTree currently is supported only on Linux.
    2016-07-10 13:29:58,334 INFO [pool-3-thread-1] mapred.Task (Task.java:initialize(581)) - Using ResourceCalculatorProcessTree : org.apache.hadoop.yarn.util.WindowsBasedProcessTree@c8a80d8
    2016-07-10 13:29:58,338 INFO [pool-3-thread-1] mapred.ReduceTask (ReduceTask.java:run(362)) - Using ShuffleConsumerPlugin: org.apache.hadoop.mapreduce.task.reduce.Shuffle@50d379e8
    2016-07-10 13:29:58,351 INFO [pool-3-thread-1] reduce.MergeManagerImpl (MergeManagerImpl.java:<init>(193)) - MergerManager: memoryLimit=1331114752, maxSingleShuffleLimit=332778688, mergeThreshold=878535744, ioSortFactor=10, memToMemMergeOutputsThreshold=10
    2016-07-10 13:29:58,354 INFO [EventFetcher for fetching Map Completion Events] reduce.EventFetcher (EventFetcher.java:run(61)) - attempt_local492959629_0001_r_000000_0 Thread started: EventFetcher for fetching Map Completion Events
    2016-07-10 13:29:58,381 INFO [localfetcher#1] reduce.LocalFetcher (LocalFetcher.java:copyMapOutput(140)) - localfetcher#1 about to shuffle output of map attempt_local492959629_0001_m_000000_0 decomp: 182 len: 186 to MEMORY
    2016-07-10 13:29:58,390 INFO [localfetcher#1] reduce.InMemoryMapOutput (InMemoryMapOutput.java:shuffle(100)) - Read 182 bytes from map-output for attempt_local492959629_0001_m_000000_0
    2016-07-10 13:29:58,419 INFO [localfetcher#1] reduce.MergeManagerImpl (MergeManagerImpl.java:closeInMemoryFile(307)) - closeInMemoryFile -> map-output of size: 182, inMemoryMapOutputs.size() -> 1, commitMemory -> 0, usedMemory ->182
    2016-07-10 13:29:58,420 INFO [EventFetcher for fetching Map Completion Events] reduce.EventFetcher (EventFetcher.java:run(76)) - EventFetcher is interrupted.. Returning
    2016-07-10 13:29:58,421 INFO [pool-3-thread-1] mapred.LocalJobRunner (LocalJobRunner.java:statusUpdate(591)) - 1 / 1 copied.
    2016-07-10 13:29:58,422 INFO [pool-3-thread-1] reduce.MergeManagerImpl (MergeManagerImpl.java:finalMerge(667)) - finalMerge called with 1 in-memory map-outputs and 0 on-disk map-outputs
    2016-07-10 13:29:58,445 INFO [pool-3-thread-1] mapred.Merger (Merger.java:merge(591)) - Merging 1 sorted segments
    2016-07-10 13:29:58,445 INFO [pool-3-thread-1] mapred.Merger (Merger.java:merge(690)) - Down to the last merge-pass, with 1 segments left of total size: 173 bytes
    2016-07-10 13:29:58,450 INFO [pool-3-thread-1] reduce.MergeManagerImpl (MergeManagerImpl.java:finalMerge(742)) - Merged 1 segments, 182 bytes to disk to satisfy reduce memory limit
    2016-07-10 13:29:58,451 INFO [pool-3-thread-1] reduce.MergeManagerImpl (MergeManagerImpl.java:finalMerge(772)) - Merging 1 files, 186 bytes from disk
    2016-07-10 13:29:58,452 INFO [pool-3-thread-1] reduce.MergeManagerImpl (MergeManagerImpl.java:finalMerge(787)) - Merging 0 segments, 0 bytes from memory into reduce
    2016-07-10 13:29:58,452 INFO [pool-3-thread-1] mapred.Merger (Merger.java:merge(591)) - Merging 1 sorted segments
    2016-07-10 13:29:58,453 INFO [pool-3-thread-1] mapred.Merger (Merger.java:merge(690)) - Down to the last merge-pass, with 1 segments left of total size: 173 bytes
    2016-07-10 13:29:58,453 INFO [pool-3-thread-1] mapred.LocalJobRunner (LocalJobRunner.java:statusUpdate(591)) - 1 / 1 copied.
    2016-07-10 13:29:58,467 INFO [pool-3-thread-1] Configuration.deprecation (Configuration.java:warnOnceIfDeprecated(1009)) - mapred.skip.on is deprecated. Instead, use mapreduce.job.skiprecords
    2016-07-10 13:29:58,474 INFO [pool-3-thread-1] mapred.Task (Task.java:done(995)) - Task:attempt_local492959629_0001_r_000000_0 is done. And is in the process of committing
    2016-07-10 13:29:58,476 INFO [pool-3-thread-1] mapred.LocalJobRunner (LocalJobRunner.java:statusUpdate(591)) - 1 / 1 copied.
    2016-07-10 13:29:58,476 INFO [pool-3-thread-1] mapred.Task (Task.java:commit(1156)) - Task attempt_local492959629_0001_r_000000_0 is allowed to commit now
    2016-07-10 13:29:58,483 INFO [pool-3-thread-1] output.FileOutputCommitter (FileOutputCommitter.java:commitTask(439)) - Saved output of task 'attempt_local492959629_0001_r_000000_0' to file:/C:/dirout/_temporary/0/task_local492959629_0001_r_000000
    2016-07-10 13:29:58,484 INFO [pool-3-thread-1] mapred.LocalJobRunner (LocalJobRunner.java:statusUpdate(591)) - reduce > reduce
    2016-07-10 13:29:58,484 INFO [pool-3-thread-1] mapred.Task (Task.java:sendDone(1115)) - Task 'attempt_local492959629_0001_r_000000_0' done.
    2016-07-10 13:29:58,484 INFO [pool-3-thread-1] mapred.LocalJobRunner (LocalJobRunner.java:run(325)) - Finishing task: attempt_local492959629_0001_r_000000_0
    2016-07-10 13:29:58,484 INFO [Thread-3] mapred.LocalJobRunner (LocalJobRunner.java:runTasks(456)) - reduce task executor complete.
    2016-07-10 13:29:58,666 INFO [main] mapreduce.Job (Job.java:monitorAndPrintJob(1355)) - Job job_local492959629_0001 running in uber mode : false
    2016-07-10 13:29:58,667 INFO [main] mapreduce.Job (Job.java:monitorAndPrintJob(1362)) - map 100% reduce 100%
    2016-07-10 13:29:58,668 INFO [main] mapreduce.Job (Job.java:monitorAndPrintJob(1373)) - Job job_local492959629_0001 completed successfully
    2016-07-10 13:29:58,679 INFO [main] mapreduce.Job (Job.java:monitorAndPrintJob(1380)) - Counters: 34
        File System Counters
            FILE: Number of bytes read=804
            FILE: Number of bytes written=462717
            FILE: Number of read operations=0
            FILE: Number of large read operations=0
            FILE: Number of write operations=0
        Map-Reduce Framework
            Map input records=6
            Map output records=12
            Map output bytes=156
            Map output materialized bytes=186
            Input split bytes=82
            Combine input records=0
            Combine output records=0
            Reduce input groups=6
            Reduce shuffle bytes=186
            Reduce input records=12
            Reduce output records=6
            Spilled Records=24
            Shuffled Maps =1
            Failed Shuffles=0
            Merged Map outputs=1
            GC time elapsed (ms)=7
            CPU time spent (ms)=0
            Physical memory (bytes) snapshot=0
            Virtual memory (bytes) snapshot=0
            Total committed heap usage (bytes)=467664896
        Sensitive Word
            sensitiveword=1
        Shuffle Errors
            BAD_ID=0
            CONNECTION=0
            IO_ERROR=0
            WRONG_LENGTH=0
            WRONG_MAP=0
            WRONG_REDUCE=0
        File Input Format Counters
            Bytes Read=64
        File Output Format Counters
            Bytes Written=51
    fenlie 1
    he 2
    hello 6
    me 1
    she 1
    you 1
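As a cross-check of the output above, here is a minimal plain-Java sketch with no Hadoop dependency. It replays three things the job reports: the byte offset that arrives as the map key, the per-word totals written by the reducer, and the value of the custom counter. The file content in `CONTENT` is an assumption: tab-separated words with Windows CRLF line endings, chosen because the mapper splits on `"\t"` and because it reproduces the logged offsets and the 64 bytes read. The class name `WordCountReplay` and its methods are hypothetical, and the division of lines into two "tasks" is only for illustration (the real run used a single split).

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java replay of the run above; no Hadoop classes are involved.
class WordCountReplay {
    // ASSUMPTION: content of C:\word.txt (tab-separated words, CRLF line endings).
    static final String CONTENT =
        "hello\tyou\r\nhello\tme\r\nhello\tshe\r\n"
        + "hello\the\r\nhello\the\r\nfenlie\thello";

    // Byte offset at which each line starts (ASCII only, so one char is one byte).
    static List<Long> lineOffsets(String content) {
        List<Long> offsets = new ArrayList<>();
        long offset = 0;
        for (String line : content.split("\r\n")) {
            offsets.add(offset);
            offset += line.length() + 2; // +2 for the CRLF terminator
        }
        return offsets;
    }

    // Adds 1 per tab-separated token; TreeMap keeps keys sorted like the reducer output.
    static Map<String, Long> countWords(String content) {
        Map<String, Long> counts = new TreeMap<>();
        for (String line : content.split("\r\n")) {
            for (String word : line.split("\t")) {
                counts.merge(word, 1L, Long::sum);
            }
        }
        return counts;
    }

    // Each inner list models the lines seen by one map task. The per-task
    // counts are summed into a single job-level value, which is the kind of
    // aggregation the framework performs for counters.
    static long aggregateCounter(List<List<String>> tasks, String keyword) {
        long total = 0;
        for (List<String> taskLines : tasks) {
            long local = 0;
            for (String line : taskLines) {
                if (line.contains(keyword)) {
                    local++;
                }
            }
            total += local;
        }
        return total;
    }

    public static void main(String[] args) {
        System.out.println(lineOffsets(CONTENT)); // [0, 11, 21, 32, 42, 52]
        System.out.println(countWords(CONTENT));  // {fenlie=1, he=2, hello=6, me=1, she=1, you=1}

        List<String> lines = Arrays.asList(CONTENT.split("\r\n"));
        // Hypothetical division into two tasks, for illustration only.
        List<List<String>> tasks = Arrays.asList(lines.subList(0, 3), lines.subList(3, 6));
        System.out.println("sensitiveword=" + aggregateCounter(tasks, "fenlie")); // sensitiveword=1
    }
}
```

The offsets match the values printed by the mapper, and the counter total is the same however the lines are divided among tasks, which is what makes a counter usable as a job-wide statistic.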

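Notes:

1. Counters are global. In other words, the MapReduce framework aggregates them across all map and reduce tasks and produces one final value when the job finishes.

2. Configuration: the four configuration files core-site.xml, mapred-site.xml, yarn-site.xml, and hdfs-site.xml can be supplied in three ways:

First: run the program in Hadoop cluster mode, in which case the contents of these files are read from the Hadoop installation.

Second: add the xxx-site.xml files to the src folder of the Eclipse project.

Third: set the values in code with conf.set(name, value).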
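The effect of these three sources can be pictured as layers in which a later value replaces an earlier one for the same property name. The sketch below is a simplified plain-Java model of that idea, not Hadoop's `Configuration` class: the class `ConfigLayeringSketch` and its methods are hypothetical, the host names are made up, and details such as properties marked final are left out. Only the property name `fs.defaultFS` and its default `file:///` come from Hadoop itself.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Simplified model of layered configuration; this is NOT Hadoop's Configuration class.
class ConfigLayeringSketch {
    private final Map<String, String> props = new LinkedHashMap<>();

    // Models loading one resource file: entries replace values loaded earlier.
    void addResource(Map<String, String> resource) {
        props.putAll(resource);
    }

    // Models conf.set(name, value): an explicit value set in code.
    void set(String name, String value) {
        props.put(name, value);
    }

    String get(String name) {
        return props.get(name);
    }

    public static void main(String[] args) {
        ConfigLayeringSketch conf = new ConfigLayeringSketch();

        // Built-in defaults, as in core-default.xml.
        Map<String, String> coreDefault = new LinkedHashMap<>();
        coreDefault.put("fs.defaultFS", "file:///");
        conf.addResource(coreDefault);
        System.out.println(conf.get("fs.defaultFS")); // file:///

        // A site file found on the classpath (hypothetical host name).
        Map<String, String> coreSite = new LinkedHashMap<>();
        coreSite.put("fs.defaultFS", "hdfs://namenode.example:9000");
        conf.addResource(coreSite);
        System.out.println(conf.get("fs.defaultFS")); // hdfs://namenode.example:9000

        // An explicit value set in code (hypothetical host name).
        conf.set("fs.defaultFS", "hdfs://other.example:9000");
        System.out.println(conf.get("fs.defaultFS")); // hdfs://other.example:9000
    }
}
```

In the local run shown earlier no cluster site files were supplied, which fits the job reading and writing `file:/C:/...` paths on the local file system.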
