hadoop 2.6 HA搭建

2015-12-23 16:42

369 查看

准备条件

zookeeper集群已配置好(172.16.12.85,172.16.12.88,172.16.12.91),并且zookeeper集群已启动,zookeeper版本为zookeeper-3.4.5-cdh5.4.2

ssh都已配置好

hadoop版本为2.6.0-cdh5.5.1

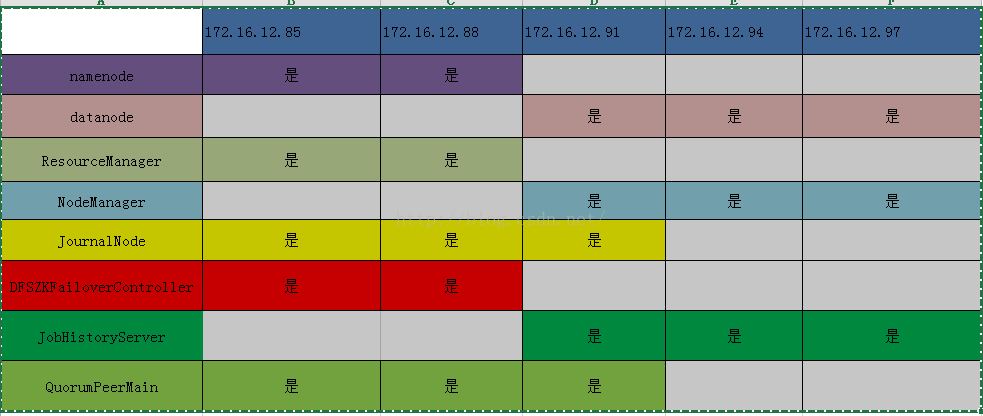

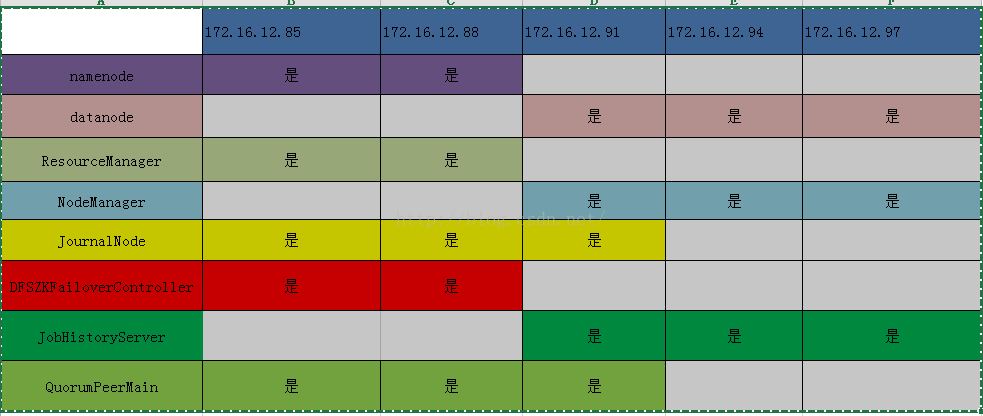

集群规划

第一步,配置core-site.xml文件。

<span style="font-size:18px;"><configuration>

<!-- 指定hdfs的nameservice为ns1,是NameNode的URI。hdfs://主机名:端口/ -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://gy-cluster</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>131072</value>

</property>

<!-- 指定hadoop临时目录 -->

<property>

<name>hadoop.tmp.dir</name>

<value>file:<span style="background-color: rgb(255, 102, 102);">/data01/hadoop/tmp</span></value>

<description>Abase for other temporary directories.</description>

</property>

<!--指定可以在任何IP访问-->

<property>

<name>hadoop.proxyuser.hduser.hosts</name>

<value>*</value>

</property>

<!--指定所有用户可以访问-->

<property>

<name>hadoop.proxyuser.hduser.groups</name>

<value>*</value>

</property>

<!-- 指定zookeeper地址 -->

<property>

<name>ha.zookeeper.quorum</name>

<value>172.16.12.85:2181,172.16.12.88:2181,172.16.12.91:2181</value>

</property>

</configuration></span>

第二步,修改hadoop-env.sh文件,增加下面一行

第三步,修改hdfs-site.xml文件

<span style="font-size:18px;"><configuration>

<!--指定hdfs的nameservice为ns1,需要和core-site.xml中的保持一致 -->

<property>

<name>dfs.nameservices</name>

<value>gy-cluster</value>

</property>

<!-- ns1下面有两个NameNode,分别是nn1,nn2 -->

<property>

<name>dfs.ha.namenodes.gy-cluster</name>

<value>nn1,nn2</value>

</property>

<!-- nn1的RPC通信地址 -->

<property>

<name>dfs.namenode.rpc-address.gy-cluster.nn1</name>

<value>172.16.12.85:9000</value>

</property>

<!-- nn2的RPC通信地址 -->

<property>

<name>dfs.namenode.rpc-address.gy-cluster.nn2</name>

<value>172.16.12.88:9000</value>

</property>

<!-- nn1的http通信地址 -->

<property>

<name>dfs.namenode.http-address.gy-cluster.nn1</name>

<value>172.16.12.85:50070</value>

</property>

<!-- nn2的http通信地址 -->

<property>

<name>dfs.namenode.http-address.gy-cluster.nn2</name>

<value>172.16.12.88:50070</value>

</property>

<property>

<name>dfs.namenode.servicerpc-address.gy-cluster.nn1</name>

<value>172.16.12.85:53310</value>

</property>

<property>

<name>dfs.namenode.servicerpc-address.gy-cluster.nn2</name>

<value>172.16.12.88:53310</value>

</property>

<!-- 指定NameNode的元数据在JournalNode上的存放位置 -->

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://172.16.12.85:8485;172.16.12.88:8485;172.16.12.91:8485/gy-cluster</value>

</property>

<!-- 配置失败自动切换实现方式 -->

<property>

<name>dfs.client.failover.proxy.provider.gy-cluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<!-- 配置隔离机制 -->

<property>

<name>dfs.ha.fencing.methods</name>

<value>shell(/bin/true)</value>

</property>

<!-- 使用隔离机制时需要ssh免密码登陆 -->

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/weihu/.ssh/id_rsa</value>

</property>

<!-- 指定NameNode的元数据在JournalNode上的存放位置 -->

<property>

<name>dfs.journalnode.edits.dir</name>

<value><span style="background-color: rgb(255, 102, 102);">/data01/hadoop/tmp/journal</span></value>

</property>

<!--指定支持高可用自动切换机制-->

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<!--指定namenode名称空间的存储地址-->

<property>

<name>dfs.namenode.name.dir</name>

<value>file:<span style="background-color: rgb(255, 102, 102);">/data01/hadoop/dfs/name</span></value>

</property>

<!--指定datanode数据存储地址-->

<property>

<name>dfs.datanode.data.dir</name>

<value>file:<span style="background-color: rgb(255, 102, 102);">/data01/hadoop/dfs/data</span></value>

</property>

<!--指定数据冗余份数-->

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<!--指定可以通过web访问hdfs目录-->

4000

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.permissions.enable</name>

<value>false</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

<!--保证数据恢复 -->

<!--

<property>

<name>dfs.journalnode.http-address</name>

<value>0.0.0.0:8480</value>

</property>

<property>

<name>dfs.journalnode.rpc-address</name>

<value>0.0.0.0:8485</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>172.16.12.85:2181,172.16.12.88:2181,172.16.12.91:2181</value>

</property>

-->

</configuration></span>第四步,配置mapred-site.xml文件

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>0.0.0.0:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>0.0.0.0:19888</value>

</property>

<property>

<name>yarn.app.mapreduce.am.staging-dir</name>

<value><span style="background-color: rgb(255, 102, 102);">/data01/hadoop/hadoop-yarn/staging</span></value>

</property>

<property>

<name>mapreduce.jobhistory.done-dir</name>

<value>${yarn.app.mapreduce.am.staging-dir}/history/done</value>

</property>

<property>

<name>mapreduce.jobhistory.intermediate-done-dir</name>

<value>${yarn.app.mapreduce.am.staging-dir}/history/done_intermediate</value>

</property>

<property>

<name>mapreduce.task.io.sort.factor</name>

<value>100</value>

</property>

<property>

<name>mapreduce.reduce.shuffle.parallelcopies</name>

<value>10</value>

</property>

</configuration>第五步,配置slaves文件

172.16.12.91

172.16.12.94

172.16.12.97

第六步,配置yarn-site.xml文件

<configuration>

<!-- Site specific YARN configuration properties -->

<!--rm失联后重新链接的时间-->

<property>

<name>yarn.resourcemanager.connect.retry-interval.ms</name>

<value>20000</value>

</property>

<!--开启resource manager HA,默认为false-->

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>rm-cluster</value>

</property>

<!--配置resource manager -->

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<!--开启故障自动切换-->

<property>

<name>yarn.resourcemanager.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>172.16.12.85</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>172.16.12.88</value>

</property>

<!--在172.16.12.85上配置rm1,在172.16.12.88上配置rm2,注意:一般都喜欢把配置好的文件远程复制到其它机器上,但这个在YARN的另一个机器上一定要修改-->

<property>

<name>yarn.resourcemanager.ha.id</name>

<value>rm1</value>

<description>If we want to launch more than one RM in single node, we need this configuration</description>

</property>

<!--开启自动恢复功能-->

<property>

<name>yarn.resourcemanager.recovery.enabled</name>

<value>true</value>

</property>

<!--配置与zookeeper的连接地址-->

<property>

<name>yarn.resourcemanager.zk-state-store.address</name>

<value>172.16.12.85:2181,172.16.12.88:2181,172.16.12.91:2181</value>

</property>

<property>

<name>yarn.resourcemanager.store.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>

</property>

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>172.16.12.85:2181,172.16.12.88:2181,172.16.12.91:2181</value>

</property>

<!--schelduler失联等待连接时间-->

<property>

<name>yarn.app.mapreduce.am.scheduler.connection.wait.interval-ms</name>

<value>5000</value>

</property>

<!--配置rm1-->

<property>

<name>yarn.resourcemanager.address.rm1</name>

<value>172.16.12.85:8132</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address.rm1</name>

<value>172.16.12.85:8130</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address.rm1</name>

<value>172.16.12.85:8188</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address.rm1</name>

<value>172.16.12.85:8131</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address.rm1</name>

<value>172.16.12.85:8033</value>

</property>

<property>

<name>yarn.resourcemanager.ha.admin.address.rm1</name>

<value>172.16.12.85:23142</value>

</property>

<!--配置rm2-->

<property>

<name>yarn.resourcemanager.address.rm2</name>

<value>172.16.12.88:8132</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address.rm2</name>

<value>172.16.12.88:8130</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address.rm2</name>

<value>172.16.12.88:8188</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address.rm2</name>

<value>172.16.12.88:8131</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address.rm2</name>

<value>172.16.12.88:8033</value>

</property>

<property>

<name>yarn.resourcemanager.ha.admin.address.rm2</name>

<value>172.16.12.88:23142</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value><span style="background-color: rgb(255, 102, 102);">/data01/hadoop/yarn/local</span></value>

</property>

<property>

<name>yarn.nodemanager.log-dirs</name>

<value><span style="background-color: rgb(255, 102, 102);">/data01/hadoop/yarn/log</span></value>

</property>

<property>

<name>mapreduce.shuffle.port</name>

<value>23080</value>

</property>

<!--故障处理类-->

<property>

<name>yarn.client.failover-proxy-provider</name>

<value>org.apache.hadoop.yarn.client.ConfiguredRMFailoverProxyProvider</value>

</property>

<property>

<name>yarn.resourcemanager.ha.automatic-failover.zk-base-path</name>

<value>/yarn-leader-election</value>

<description>Optional setting. The default value is /yarn-leader-election</description>

</property>

</configuration>

所有的配置文件改完后,ssh到你其它的节点上,以上所有的红色标注的目录全部要手工创建。

配置改完后,下面启动hadoop集群。

第一步,格式化zookeeper

在172.16.12.85上执行

bin/hdfs zkfc -formatZK

第二步,在172.16.12.85,172.16.12.88,172.16.12.91上启动所有journalnode

sbin/hadoop-daemon.sh start journalnode

验证:运行jps命令,会出现journalnode进程

第三步,在172.16.12.85上执行格式化HDFS命令

bin/hdfs namenode -format

第四步,在172.16.12.85上启动namenode

sbin/hadoop-daemon.sh start namenode验证:运行jps命令,会出现namenode进程

第五步,在172.16.12.88上执行(完成主备节点同步信息)

bin/hdfs namenode -bootstrapStandby

并启动namenode

<span style="font-size:18px;"></span><pre name="code" class="html">sbin/hadoop-daemon.sh start namenode

验证:运行jps命令,会出现namenode进程

第六步,在172.16.12.85上执行,启动datanode

sbin/hadoop-daemons.sh start datanode验证:在datanode节点运行jps命令,会出现datanode进程

第七步,在172.16.12.85上执行,启动yarn

sbin/start-yarn.sh在datanode、namenode上执行jsp看是否都有ResourceManager、NodeManager进程(除了备用namenode上不会有ResourceManager)

第八步,在172.16.12.88(备用节点)上执行,启动yarn

sbin/yarn-daemon.sh start resourcemanager在此节点上运行jps,看是否出现ResourceManager进程

第九步,在172.16.12.85和172.16.12.88上执行

sbin/hadoop-daemon.sh start zkfc在此节点运行jps,验证是否有DFSZKFailoverController进程

第十步,在所有datanode节点上运行sbin/mr-jobhistory-daemon.sh start historyserver在此节点运行jps,验证是否有JobHistoryServer进程

验证集群是否可用

1,验证hdfs是否好用

命令:hadoop fs -put etc/hadoop/core-site.xml /

验证:hadoop fs -ls /

2,验证yarn是否好用命令:hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.6.0-cdh5.5.1.jar wordcount /core-site.xml /out

验证:hadoop fs -ls /out

写测试(向HDFS文件系统中写入数据,10个文件,每个文件10MB,文件存放到/benchmarks/TestDFSIO/io_data中)

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.6.0-cdh5.5.1-tests.jar TestDFSIO -write -nrFiles 10 -size 10MB

读测试(在HDFS文件系统中读入10个文件,每个文件10M)

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.6.0-cdh5.5.1-tests.jar TestDFSIO -read -nrFiles 10 -fileSize 10MB

删除临时文件

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.6.0-cdh5.5.1-tests.jar TestDFSIO -clean

3,验证namenode HA的故障自动转移

4,验证yarn HA

命令:bin/yarn rmadmin -getServiceState rm1

result:active

命令:bin/yarn rmadmin -getServiceState rm2

result:standby

至此集群已搭建完成,以下是成功截图

主节点

备用节点

查看主节点8188端口

如果有什么疑问,可以加QQ群询问

zookeeper集群已配置好(172.16.12.85,172.16.12.88,172.16.12.91),并且zookeeper集群已启动,zookeeper版本为zookeeper-3.4.5-cdh5.4.2

ssh都已配置好

hadoop版本为2.6.0-cdh5.5.1

集群规划

第一步,配置core-site.xml文件。

<span style="font-size:18px;"><configuration>

<!-- 指定hdfs的nameservice为ns1,是NameNode的URI。hdfs://主机名:端口/ -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://gy-cluster</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>131072</value>

</property>

<!-- 指定hadoop临时目录 -->

<property>

<name>hadoop.tmp.dir</name>

<value>file:<span style="background-color: rgb(255, 102, 102);">/data01/hadoop/tmp</span></value>

<description>Abase for other temporary directories.</description>

</property>

<!--指定可以在任何IP访问-->

<property>

<name>hadoop.proxyuser.hduser.hosts</name>

<value>*</value>

</property>

<!--指定所有用户可以访问-->

<property>

<name>hadoop.proxyuser.hduser.groups</name>

<value>*</value>

</property>

<!-- 指定zookeeper地址 -->

<property>

<name>ha.zookeeper.quorum</name>

<value>172.16.12.85:2181,172.16.12.88:2181,172.16.12.91:2181</value>

</property>

</configuration></span>

第二步,修改hadoop-env.sh文件,增加下面一行

<span style="font-size:18px;">export JAVA_HOME=/data01/jdk1.7</span>

第三步,修改hdfs-site.xml文件

<span style="font-size:18px;"><configuration>

<!--指定hdfs的nameservice为ns1,需要和core-site.xml中的保持一致 -->

<property>

<name>dfs.nameservices</name>

<value>gy-cluster</value>

</property>

<!-- ns1下面有两个NameNode,分别是nn1,nn2 -->

<property>

<name>dfs.ha.namenodes.gy-cluster</name>

<value>nn1,nn2</value>

</property>

<!-- nn1的RPC通信地址 -->

<property>

<name>dfs.namenode.rpc-address.gy-cluster.nn1</name>

<value>172.16.12.85:9000</value>

</property>

<!-- nn2的RPC通信地址 -->

<property>

<name>dfs.namenode.rpc-address.gy-cluster.nn2</name>

<value>172.16.12.88:9000</value>

</property>

<!-- nn1的http通信地址 -->

<property>

<name>dfs.namenode.http-address.gy-cluster.nn1</name>

<value>172.16.12.85:50070</value>

</property>

<!-- nn2的http通信地址 -->

<property>

<name>dfs.namenode.http-address.gy-cluster.nn2</name>

<value>172.16.12.88:50070</value>

</property>

<property>

<name>dfs.namenode.servicerpc-address.gy-cluster.nn1</name>

<value>172.16.12.85:53310</value>

</property>

<property>

<name>dfs.namenode.servicerpc-address.gy-cluster.nn2</name>

<value>172.16.12.88:53310</value>

</property>

<!-- 指定NameNode的元数据在JournalNode上的存放位置 -->

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://172.16.12.85:8485;172.16.12.88:8485;172.16.12.91:8485/gy-cluster</value>

</property>

<!-- 配置失败自动切换实现方式 -->

<property>

<name>dfs.client.failover.proxy.provider.gy-cluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<!-- 配置隔离机制 -->

<property>

<name>dfs.ha.fencing.methods</name>

<value>shell(/bin/true)</value>

</property>

<!-- 使用隔离机制时需要ssh免密码登陆 -->

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/weihu/.ssh/id_rsa</value>

</property>

<!-- 指定NameNode的元数据在JournalNode上的存放位置 -->

<property>

<name>dfs.journalnode.edits.dir</name>

<value><span style="background-color: rgb(255, 102, 102);">/data01/hadoop/tmp/journal</span></value>

</property>

<!--指定支持高可用自动切换机制-->

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<!--指定namenode名称空间的存储地址-->

<property>

<name>dfs.namenode.name.dir</name>

<value>file:<span style="background-color: rgb(255, 102, 102);">/data01/hadoop/dfs/name</span></value>

</property>

<!--指定datanode数据存储地址-->

<property>

<name>dfs.datanode.data.dir</name>

<value>file:<span style="background-color: rgb(255, 102, 102);">/data01/hadoop/dfs/data</span></value>

</property>

<!--指定数据冗余份数-->

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<!--指定可以通过web访问hdfs目录-->

4000

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.permissions.enable</name>

<value>false</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

<!--保证数据恢复 -->

<!--

<property>

<name>dfs.journalnode.http-address</name>

<value>0.0.0.0:8480</value>

</property>

<property>

<name>dfs.journalnode.rpc-address</name>

<value>0.0.0.0:8485</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>172.16.12.85:2181,172.16.12.88:2181,172.16.12.91:2181</value>

</property>

-->

</configuration></span>第四步,配置mapred-site.xml文件

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>0.0.0.0:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>0.0.0.0:19888</value>

</property>

<property>

<name>yarn.app.mapreduce.am.staging-dir</name>

<value><span style="background-color: rgb(255, 102, 102);">/data01/hadoop/hadoop-yarn/staging</span></value>

</property>

<property>

<name>mapreduce.jobhistory.done-dir</name>

<value>${yarn.app.mapreduce.am.staging-dir}/history/done</value>

</property>

<property>

<name>mapreduce.jobhistory.intermediate-done-dir</name>

<value>${yarn.app.mapreduce.am.staging-dir}/history/done_intermediate</value>

</property>

<property>

<name>mapreduce.task.io.sort.factor</name>

<value>100</value>

</property>

<property>

<name>mapreduce.reduce.shuffle.parallelcopies</name>

<value>10</value>

</property>

</configuration>第五步,配置slaves文件

172.16.12.91

172.16.12.94

172.16.12.97

第六步,配置yarn-site.xml文件

<configuration>

<!-- Site specific YARN configuration properties -->

<!--rm失联后重新链接的时间-->

<property>

<name>yarn.resourcemanager.connect.retry-interval.ms</name>

<value>20000</value>

</property>

<!--开启resource manager HA,默认为false-->

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>rm-cluster</value>

</property>

<!--配置resource manager -->

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<!--开启故障自动切换-->

<property>

<name>yarn.resourcemanager.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>172.16.12.85</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>172.16.12.88</value>

</property>

<!--在172.16.12.85上配置rm1,在172.16.12.88上配置rm2,注意:一般都喜欢把配置好的文件远程复制到其它机器上,但这个在YARN的另一个机器上一定要修改-->

<property>

<name>yarn.resourcemanager.ha.id</name>

<value>rm1</value>

<description>If we want to launch more than one RM in single node, we need this configuration</description>

</property>

<!--开启自动恢复功能-->

<property>

<name>yarn.resourcemanager.recovery.enabled</name>

<value>true</value>

</property>

<!--配置与zookeeper的连接地址-->

<property>

<name>yarn.resourcemanager.zk-state-store.address</name>

<value>172.16.12.85:2181,172.16.12.88:2181,172.16.12.91:2181</value>

</property>

<property>

<name>yarn.resourcemanager.store.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>

</property>

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>172.16.12.85:2181,172.16.12.88:2181,172.16.12.91:2181</value>

</property>

<!--schelduler失联等待连接时间-->

<property>

<name>yarn.app.mapreduce.am.scheduler.connection.wait.interval-ms</name>

<value>5000</value>

</property>

<!--配置rm1-->

<property>

<name>yarn.resourcemanager.address.rm1</name>

<value>172.16.12.85:8132</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address.rm1</name>

<value>172.16.12.85:8130</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address.rm1</name>

<value>172.16.12.85:8188</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address.rm1</name>

<value>172.16.12.85:8131</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address.rm1</name>

<value>172.16.12.85:8033</value>

</property>

<property>

<name>yarn.resourcemanager.ha.admin.address.rm1</name>

<value>172.16.12.85:23142</value>

</property>

<!--配置rm2-->

<property>

<name>yarn.resourcemanager.address.rm2</name>

<value>172.16.12.88:8132</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address.rm2</name>

<value>172.16.12.88:8130</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address.rm2</name>

<value>172.16.12.88:8188</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address.rm2</name>

<value>172.16.12.88:8131</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address.rm2</name>

<value>172.16.12.88:8033</value>

</property>

<property>

<name>yarn.resourcemanager.ha.admin.address.rm2</name>

<value>172.16.12.88:23142</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value><span style="background-color: rgb(255, 102, 102);">/data01/hadoop/yarn/local</span></value>

</property>

<property>

<name>yarn.nodemanager.log-dirs</name>

<value><span style="background-color: rgb(255, 102, 102);">/data01/hadoop/yarn/log</span></value>

</property>

<property>

<name>mapreduce.shuffle.port</name>

<value>23080</value>

</property>

<!--故障处理类-->

<property>

<name>yarn.client.failover-proxy-provider</name>

<value>org.apache.hadoop.yarn.client.ConfiguredRMFailoverProxyProvider</value>

</property>

<property>

<name>yarn.resourcemanager.ha.automatic-failover.zk-base-path</name>

<value>/yarn-leader-election</value>

<description>Optional setting. The default value is /yarn-leader-election</description>

</property>

</configuration>

所有的配置文件改完后,ssh到你其它的节点上,以上所有的红色标注的目录全部要手工创建。

配置改完后,下面启动hadoop集群。

第一步,格式化zookeeper

在172.16.12.85上执行

bin/hdfs zkfc -formatZK

第二步,在172.16.12.85,172.16.12.88,172.16.12.91上启动所有journalnode

sbin/hadoop-daemon.sh start journalnode

验证:运行jps命令,会出现journalnode进程

第三步,在172.16.12.85上执行格式化HDFS命令

bin/hdfs namenode -format

第四步,在172.16.12.85上启动namenode

sbin/hadoop-daemon.sh start namenode验证:运行jps命令,会出现namenode进程

第五步,在172.16.12.88上执行(完成主备节点同步信息)

bin/hdfs namenode -bootstrapStandby

并启动namenode

<span style="font-size:18px;"></span><pre name="code" class="html">sbin/hadoop-daemon.sh start namenode

验证:运行jps命令,会出现namenode进程

第六步,在172.16.12.85上执行,启动datanode

sbin/hadoop-daemons.sh start datanode验证:在datanode节点运行jps命令,会出现datanode进程

第七步,在172.16.12.85上执行,启动yarn

sbin/start-yarn.sh在datanode、namenode上执行jsp看是否都有ResourceManager、NodeManager进程(除了备用namenode上不会有ResourceManager)

第八步,在172.16.12.88(备用节点)上执行,启动yarn

sbin/yarn-daemon.sh start resourcemanager在此节点上运行jps,看是否出现ResourceManager进程

第九步,在172.16.12.85和172.16.12.88上执行

sbin/hadoop-daemon.sh start zkfc在此节点运行jps,验证是否有DFSZKFailoverController进程

第十步,在所有datanode节点上运行sbin/mr-jobhistory-daemon.sh start historyserver在此节点运行jps,验证是否有JobHistoryServer进程

验证集群是否可用

1,验证hdfs是否好用

命令:hadoop fs -put etc/hadoop/core-site.xml /

验证:hadoop fs -ls /

2,验证yarn是否好用命令:hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.6.0-cdh5.5.1.jar wordcount /core-site.xml /out

验证:hadoop fs -ls /out

写测试(向HDFS文件系统中写入数据,10个文件,每个文件10MB,文件存放到/benchmarks/TestDFSIO/io_data中)

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.6.0-cdh5.5.1-tests.jar TestDFSIO -write -nrFiles 10 -size 10MB

读测试(在HDFS文件系统中读入10个文件,每个文件10M)

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.6.0-cdh5.5.1-tests.jar TestDFSIO -read -nrFiles 10 -fileSize 10MB

删除临时文件

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.6.0-cdh5.5.1-tests.jar TestDFSIO -clean

3,验证namenode HA的故障自动转移

1,杀死namenode上进程为active的 2,看namenode上状态为standby是否自动切换成active

4,验证yarn HA

命令:bin/yarn rmadmin -getServiceState rm1

result:active

命令:bin/yarn rmadmin -getServiceState rm2

result:standby

至此集群已搭建完成,以下是成功截图

主节点

备用节点

查看主节点8188端口

如果有什么疑问,可以加QQ群询问

相关文章推荐

- 详解HDFS Short Circuit Local Reads

- Hadoop_2.1.0 MapReduce序列图

- 使用Hadoop搭建现代电信企业架构

- 单机版搭建Hadoop环境图文教程详解

- hadoop常见错误以及处理方法详解

- hadoop 单机安装配置教程

- hadoop的hdfs文件操作实现上传文件到hdfs

- hadoop实现grep示例分享

- Apache Hadoop版本详解

- linux下搭建hadoop环境步骤分享

- hadoop client与datanode的通信协议分析

- hadoop中一些常用的命令介绍

- Hadoop单机版和全分布式(集群)安装

- 用PHP和Shell写Hadoop的MapReduce程序

- hadoop map-reduce中的文件并发操作

- Hadoop1.2中配置伪分布式的实例

- java结合HADOOP集群文件上传下载

- 用python + hadoop streaming 分布式编程(一) -- 原理介绍,样例程序与本地调试

- Hadoop安装感悟

- Scala代码实现列出Hadoop 文件夹下面的所有文件