Stanford UFLDL Tutorial Exercise:Independent Component Analysis

2015-12-07 11:23


Exercise:Independent Component Analysis


Independent Component Analysis

In this exercise, you will implement Independent Component Analysis on color images from the STL-10 dataset.

In the file independent_component_analysis_exercise.zip we have provided some starter code. You should write your code at the places marked "YOUR CODE HERE" in the files.

For this exercise, you will need to modify OrthonormalICACost.m and ICAExercise.m.

Dependencies

You will need:

- computeNumericalGradient.m from Exercise:Sparse Autoencoder
- displayColorNetwork.m from Exercise:Learning color features with Sparse Autoencoders

The following additional file is also required for this exercise:

Sampled 8x8 patches from the STL-10 dataset (stl10_patches_100k.zip)

If you have not completed the exercises listed above, we strongly suggest you complete them first.

Step 0: Initialization

In this step, we initialize some parameters used for the exercise.

Step 1: Sample patches

In this step, we load and use a portion of the 8x8 patches from the STL-10 dataset (which you first saw in the exercise on linear decoders).

Step 2: ZCA whiten patches
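ZCA whitening, which this step applies to the patches, can be sketched in NumPy as follows. This is not the exercise's MATLAB code. It assumes one patch per column (192 = 8x8x3 values for an 8x8 color patch) and subtracts the per-feature mean. The `epsilon` regularizer is a hypothetical parameter that defaults to 0, so the whitened covariance comes out exactly equal to the identity.

```python
import numpy as np

def zca_whiten(x, epsilon=0.0):
    """ZCA-whiten x, which holds one example per column.

    Returns the whitened data and the ZCA matrix. epsilon is an optional
    regularizer added to the eigenvalues; 0 gives exact whitening.
    """
    x = x - x.mean(axis=1, keepdims=True)      # zero-mean each feature (row)
    sigma = x @ x.T / x.shape[1]               # covariance, shape (n, n)
    u, s, _ = np.linalg.svd(sigma)             # sigma = u diag(s) u^T (symmetric PSD)
    zca = u @ np.diag(1.0 / np.sqrt(s + epsilon)) @ u.T
    return zca @ x, zca

# Usage: features with very different scales end up with identity covariance.
rng = np.random.default_rng(0)
patches = rng.normal(size=(192, 5000)) * rng.uniform(0.5, 3.0, size=(192, 1))
white, zca = zca_whiten(patches)
print(np.allclose(white @ white.T / white.shape[1], np.eye(192)))  # → True
```

The last step rotates back with `u.T`, and that is what separates ZCA from PCA whitening. It keeps the whitened patches as close as possible to the originals, so they still look like image patches.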

In this step, we ZCA whiten the patches as required by orthonormal ICA.

Step 3: Implement and check ICA cost functions

In this step, you should implement the ICA cost function orthonormalICACost in orthonormalICACost.m, which computes the cost and gradient for the orthonormal ICA objective. Note that the orthonormality constraint is not enforced in the cost function. It will be enforced by a projection in the gradient descent step, which you will complete in Step 4.

When you have implemented the cost function, you should check the gradients numerically.

Hint: if you are having difficulties deriving the gradients, you may wish to consult the page on deriving gradients using the backpropagation idea.
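Below is a minimal NumPy sketch of the cost, its gradient, and a numerical gradient check. It assumes the smoothed L1 objective $J(W) = \frac{1}{m}\sum_{i}\sum_{j}\sqrt{(Wx^{(i)})_j^2 + \epsilon}$, where $W$ is $k \times n$ and the whitened data $X$ is $n \times m$. Under that assumption the gradient is $\nabla_W J = \frac{1}{m}\left(\frac{WX}{\sqrt{(WX)^2 + \epsilon}}\right)X^T$, with the division and square root taken elementwise. The following are illustrative assumptions, not the starter code's definitions: the smoothing term, the $1/m$ scaling, the default `epsilon`, and the helper names `orthonormal_ica_cost` and `numerical_gradient`. As in the exercise, the sketch does not enforce the orthonormality constraint.

```python
import numpy as np

def orthonormal_ica_cost(w, x, epsilon=1e-2):
    """Smoothed-L1 ICA cost and its gradient with respect to w.

    Assumed shapes: w is (k, n); x is (n, m) with one whitened example
    per column. The orthonormality constraint is NOT enforced here.
    """
    m = x.shape[1]
    s = w @ x                                  # activations, shape (k, m)
    smooth = np.sqrt(s ** 2 + epsilon)         # smooth stand-in for |s|
    cost = smooth.sum() / m
    grad = (s / smooth) @ x.T / m              # dJ/dw, shape (k, n)
    return cost, grad

def numerical_gradient(f, w, h=1e-4):
    """Central-difference estimate of the gradient of scalar f at w."""
    w = w.copy()                               # do not modify the caller's array
    grad = np.zeros_like(w)
    for idx in np.ndindex(w.shape):
        original = w[idx]
        w[idx] = original + h
        f_plus = f(w)
        w[idx] = original - h
        f_minus = f(w)
        w[idx] = original                      # restore before the next entry
        grad[idx] = (f_plus - f_minus) / (2 * h)
    return grad

# Usage: compare analytic and numerical gradients on random data.
rng = np.random.default_rng(0)
x = rng.normal(size=(6, 40))
w = rng.normal(size=(4, 6))
cost, grad = orthonormal_ica_cost(w, x)
num_grad = numerical_gradient(lambda v: orthonormal_ica_cost(v, x)[0], w)
rel_diff = np.linalg.norm(num_grad - grad) / np.linalg.norm(num_grad + grad)
print(rel_diff)
```

If the analytic gradient is correct, `rel_diff` should be many orders of magnitude below 1. A value near 1 usually points to a missing factor or a transposed term.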

Step 4: Optimization

In Step 4, you will optimize the orthonormal ICA objective using gradient descent with backtracking line search. The code for the line search has already been provided for you; for more details on backtracking line search, you may wish to consult the appendix of this exercise. The orthonormality constraint should be enforced with a projection, which you should fill in.
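One way to fill in the projection is the symmetric orthonormalization $W \leftarrow (WW^T)^{-1/2}W$, which is assumed here rather than taken from the starter code. For a $W$ with full row rank, this returns the nearest matrix (in Frobenius norm) with orthonormal rows. The function name `project_orthonormal` is hypothetical, and this NumPy version only illustrates the idea. Whatever form your MATLAB projection takes, it should leave $WW^T = I$.

```python
import numpy as np

def project_orthonormal(w):
    """Return (w w^T)^(-1/2) w, whose rows are orthonormal.

    Assumes w is (k, n) with full row rank (k <= n), so w w^T is
    symmetric positive definite and its inverse square root exists.
    """
    d, u = np.linalg.eigh(w @ w.T)             # w w^T = u diag(d) u^T
    inv_sqrt = u @ np.diag(1.0 / np.sqrt(d)) @ u.T
    return inv_sqrt @ w

# Usage: after projection, w w^T should be the identity.
rng = np.random.default_rng(0)
w = project_orthonormal(rng.normal(size=(5, 8)))
print(np.allclose(w @ w.T, np.eye(5)))         # → True
```

Because the result equals $UV^T$ from the SVD $W = USV^T$, applying the projection a second time leaves the matrix unchanged.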

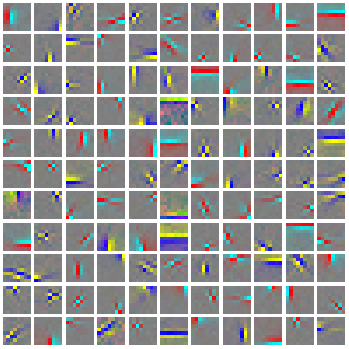

Once you have filled in the code for the projection, check that it is correct by using the verification code provided. Once you have verified that your projection is correct, comment out the verification code and run the optimization. 1000 iterations of

gradient descent should take less than 15 minutes, and produce a basis which looks like the following:

It is comparatively difficult to optimize the objective while enforcing the orthonormality constraint using gradient descent, and convergence can be slow. Hence, in situations where an orthonormal basis is not required, other, faster methods of learning bases (such as sparse coding) may be preferable.
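The pieces of Step 4 combine into a projected gradient descent loop, sketched below under stated assumptions. Each iteration takes a gradient step and projects back onto matrices with orthonormal rows. It then halves the step until the projected objective decreases. That is the simpler "backtrack until the objective decreases" rule, not the line search in the provided code. The smoothed L1 cost, the projection $W \leftarrow (WW^T)^{-1/2}W$, the initial step, the iteration count, and all function names are assumptions for illustration.

```python
import numpy as np

def ica_cost(w, x, epsilon=1e-2):
    """Assumed smoothed-L1 ICA cost and gradient; x is (n, m), w is (k, n)."""
    s = w @ x
    smooth = np.sqrt(s ** 2 + epsilon)
    m = x.shape[1]
    return smooth.sum() / m, (s / smooth) @ x.T / m

def project_orthonormal(w):
    """w <- (w w^T)^(-1/2) w, giving orthonormal rows."""
    d, u = np.linalg.eigh(w @ w.T)
    return u @ np.diag(1.0 / np.sqrt(d)) @ u.T @ w

def projected_gradient_descent(x, k, iters=200, step=1.0, seed=0):
    """Minimize the ICA cost over w with orthonormal rows.

    Each iteration: gradient step, projection, then step halving until
    the projected objective strictly decreases.
    """
    rng = np.random.default_rng(seed)
    w = project_orthonormal(rng.normal(size=(k, x.shape[0])))
    cost, grad = ica_cost(w, x)
    history = [cost]
    for _ in range(iters):
        t = step
        while t > 1e-10:
            candidate = project_orthonormal(w - t * grad)
            new_cost, new_grad = ica_cost(candidate, x)
            if new_cost < cost:
                break                          # accept this step size
            t *= 0.5
        else:
            break                              # no decrease found: stop early
        w, cost, grad = candidate, new_cost, new_grad
        step = 2 * t                           # let the step grow again next time
        history.append(cost)
    return w, history

# Usage on synthetic whitened data: Laplacian sources mixed by an orthogonal matrix.
rng = np.random.default_rng(1)
sources = rng.laplace(size=(4, 2000)) / np.sqrt(2)   # unit-variance sources
mixing, _ = np.linalg.qr(rng.normal(size=(4, 4)))
w, history = projected_gradient_descent(mixing @ sources, k=4, iters=100)
print(history[0], history[-1])
```

A step is accepted only if it lowers the cost, so `history` is strictly decreasing by construction. Because every iterate is projected, `w @ w.T` stays at the identity throughout.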

Appendix

Backtracking line search

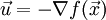

The backtracking line search used in this exercise is based on the one in Convex Optimization by Boyd and Vandenberghe. In the backtracking line search, given a descent direction $\vec{u}$ (in this exercise we use $\vec{u} = -\nabla f(\vec{x})$), we want to find a good step size $t$ that gives us a steep descent. The general idea is to use a linear approximation (the first-order Taylor approximation) to the function $f$ at the current point $\vec{x}$. We then search for a step size $t$ that decreases the function's value by more than $\alpha$ times the decrease predicted by the linear approximation, that is,

$$f(\vec{x} + t\vec{u}) \le f(\vec{x}) + \alpha t \nabla f(\vec{x})^T \vec{u}, \qquad \alpha \in (0, 0.5).$$

For more details, you may wish to consult the book.
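The sufficient-decrease rule above can be sketched as a generic NumPy function. The following are illustrative choices, not the values used by the exercise's provided code: the shrink factor `beta`, the defaults for `alpha` and the initial step `t0`, the `max_steps` cap, and the function name.

```python
import numpy as np

def backtracking_line_search(f, grad_f, x, u=None, alpha=0.25, beta=0.5,
                             t0=1.0, max_steps=50):
    """Return a step size t satisfying the sufficient-decrease condition
    f(x + t*u) <= f(x) + alpha * t * grad_f(x)^T u.

    u defaults to the steepest-descent direction -grad_f(x). The search
    starts at t0 and multiplies t by beta until the condition holds
    (after max_steps failed checks, the last shrunken t is returned).
    """
    g = grad_f(x)
    if u is None:
        u = -g
    fx = f(x)
    slope = np.sum(g * u)          # directional derivative; negative for a descent direction
    t = t0
    for _ in range(max_steps):
        if f(x + t * u) <= fx + alpha * t * slope:
            break
        t *= beta
    return t

# Usage: gradient descent on the quadratic f(x) = 0.5 * x^T A x.
A = np.diag([1.0, 10.0])

def f(x):
    return 0.5 * x @ A @ x

def grad_f(x):
    return A @ x

x = np.array([1.0, 1.0])
print(backtracking_line_search(f, grad_f, x))  # → 0.125
for _ in range(500):
    x = x - backtracking_line_search(f, grad_f, x) * grad_f(x)
```

At $\vec{x} = (1, 1)$, the steps $t = 1, 0.5, 0.25$ all overshoot along the steep second coordinate and are rejected. The first accepted step is $t = 0.125$.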

However, the backtracking line search is not strictly necessary here. Gradient descent with a small step size, or backtracking until the objective decreases, is sufficient for this exercise.

from: http://ufldl.stanford.edu/wiki/index.php/Exercise:Independent_Component_Analysis
