Hadoop configuration: DataNode cannot connect to the master

2014-03-02 01:29

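This was my first time configuring Hadoop on virtual machines. I started three VMs: one acts as the NameNode and JobTracker, and the other two act as DataNodes and TaskTrackers.

After finishing the configuration, I started the cluster.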

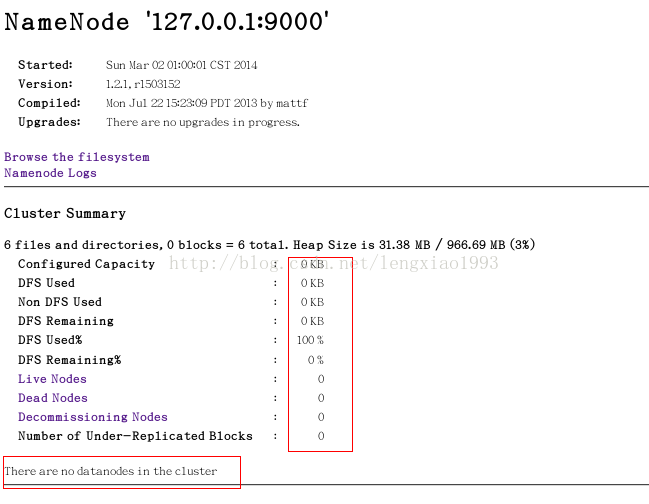

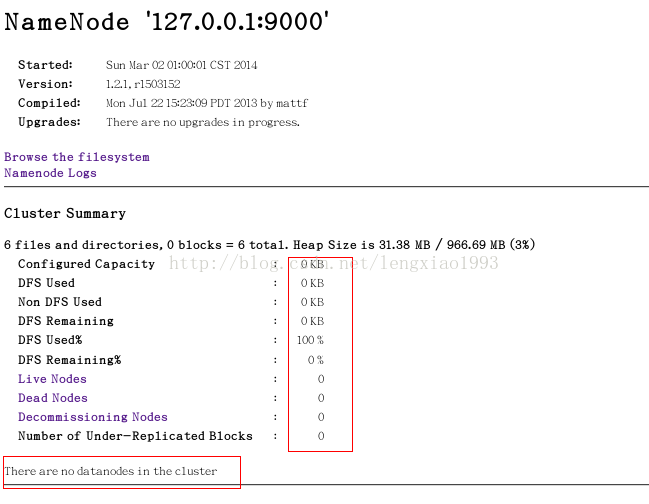

I checked the cluster status in the NameNode web UI at http://localhost:50070

and found that no DataNodes were listed.

Checking the nodes, I found that the DataNode process was running, so I looked at the log on the DataNode machine:

    2014-03-01 22:11:17,473 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: Master.hadoop/192.168.128.132:9000. Already tried 0 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)
    2014-03-01 22:11:18,477 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: Master.hadoop/192.168.128.132:9000. Already tried 1 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)
    2014-03-01 22:11:19,481 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: Master.hadoop/192.168.128.132:9000. Already tried 2 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)
    2014-03-01 22:11:20,485 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: Master.hadoop/192.168.128.132:9000. Already tried 3 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)
    2014-03-01 22:11:21,489 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: Master.hadoop/192.168.128.132:9000. Already tried 4 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)

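So the DataNode could not connect to the master. Yet the master answered ping from the DataNode, and on the master itself port 9000 was in the LISTEN state. I was baffled.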
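A successful ping only shows that the master is reachable at the IP layer; it does not show that a TCP connection to port 9000 will be accepted from another machine. A minimal sketch of that second check, meant to be run on a DataNode machine, could look like this. The helper name `can_connect` and the 3-second default timeout are illustrative assumptions, not part of Hadoop.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the name and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False
```

Calling `can_connect("Master.hadoop", 9000)` on a DataNode uses the same host and port that appear in the log. With the misconfiguration described in this post, it would be expected to return `False` there, even though the same port accepts connections on the master itself.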

Eventually I noticed this in core-site.xml:

    <property>
      <name>fs.default.name</name>
      <value>hdfs://localhost:9000</value>
    </property>

Only then did I realize that the NameNode was listening on 127.0.0.1, which other machines cannot reach.
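The reason is that `localhost` resolves to a loopback address, and a socket bound to a loopback address accepts connections only from the same machine. A small sketch for checking whether a host name resolves only to loopback addresses follows. The function name `is_loopback_host` is made up for illustration, and the result depends on the resolver configuration of the machine it runs on.

```python
import ipaddress
import socket


def is_loopback_host(host: str) -> bool:
    """Return True if every address that host resolves to is a loopback address."""
    infos = socket.getaddrinfo(host, None)
    # Each entry's sockaddr starts with the IP address as a string.
    addresses = {info[4][0] for info in infos}
    # Strip any IPv6 zone suffix ("%eth0") before parsing the address.
    return all(
        ipaddress.ip_address(addr.split("%")[0]).is_loopback
        for addr in addresses
    )
```

On the master, `is_loopback_host("localhost")` would normally be `True`, while the host name used by the cluster should give `False`. If that host name itself maps to 127.0.0.1 on the master (for example through an /etc/hosts entry), the same symptom would be expected.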

After I changed the value to the master's host name, everything worked:

    <property>
      <name>fs.default.name</name>
      <value>hdfs://Master.Hadoop:9000</value>
    </property>
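To catch this kind of misconfiguration before starting the cluster, the `fs.default.name` value can be read out of core-site.xml and inspected. The sketch below assumes the usual Hadoop layout of a `<configuration>` root element containing `<property>` entries; the function name `namenode_endpoint` is hypothetical.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse


def namenode_endpoint(core_site_xml: str, key: str = "fs.default.name"):
    """Return (host, port) for the given property in core-site.xml text, or None."""
    root = ET.fromstring(core_site_xml)
    for prop in root.iter("property"):
        if prop.findtext("name", default="").strip() == key:
            uri = urlparse(prop.findtext("value", default="").strip())
            # urlparse lowercases the host name; the port is returned as an int.
            return uri.hostname, uri.port
    return None
```

Because `urlparse` lowercases the host, `Master.Hadoop` comes back as `master.hadoop`; host names are case-insensitive, so this does not affect resolution. A returned host of `localhost` or `127.0.0.1` on a multi-node cluster is the warning sign described in this post.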
