随机森林 Random Trees(一)

2012-07-27 15:08

405 查看

转载自:http://lincccc.com/?p=45

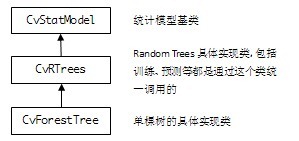

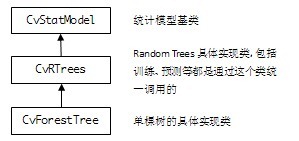

OpenCV2.3中Random Trees(R.T.)的继承结构:

API:

Example:

#include <cv.h>

#include <stdio.h>

#include <highgui.h>

#include <ml.h>

#include <map>

void print_result(float train_err, float test_err,

const CvMat* _var_imp)

{

printf( "train error %f\n", train_err );

printf( "test error %f\n\n", test_err );

if (_var_imp)

{

cv::Mat var_imp(_var_imp), sorted_idx;

cv::sortIdx(var_imp, sorted_idx, CV_SORT_EVERY_ROW +

CV_SORT_DESCENDING);

printf( "variable importance:\n" );

int i, n = (int)var_imp.total();

int type = var_imp.type();

CV_Assert(type == CV_32F || type == CV_64F);

for( i = 0; i < n; i++)

{

int k = sorted_idx.at<int>(i);

printf( "%d\t%f\n", k, type == CV_32F ?

var_imp.at<float>(k) :

var_imp.at<double>(k));

}

}

printf("\n");

}

int main()

{

const char* filename = "data.xml";

int response_idx = 0;

CvMLData data;

data.read_csv( filename ); // read data

data.set_response_idx( response_idx ); // set response index

data.change_var_type( response_idx,

CV_VAR_CATEGORICAL ); // set response type

// split train and test data

CvTrainTestSplit spl( 0.5f );

data.set_train_test_split( &spl );

data.set_miss_ch("?"); // set missing value

CvRTrees rtrees;

rtrees.train( &data, CvRTParams( 10, 2, 0, false,

16, 0, true, 0, 100, 0, CV_TERMCRIT_ITER ));

print_result( rtrees.calc_error( &data, CV_TRAIN_ERROR),

rtrees.calc_error( &data, CV_TEST_ERROR ),

rtrees.get_var_importance() );

return 0;

}

References:

[1] OpenCV 2.3 Online Documentation: http://opencv.itseez.com/modules/ml/doc/random_trees.html

[2] Random Forests, Leo Breiman and Adele Cutler: http://www.stat.berkeley.edu/users/breiman/RandomForests/cc_home.htm

[3] T. Hastie, R. Tibshirani, J. H. Friedman. The

Elements of Statistical Learning. ISBN-13 978-0387952840, 2003, Springer.

OpenCV2.3中Random Trees(R.T.)的继承结构:

API:

| CvRTParams | 定义R.T.训练用参数,CvDTreeParams的扩展子类,但并不用到CvDTreeParams(单一决策树)所需的所有参数。比如说,R.T.通常不需要剪枝,因此剪枝参数就不被用到。 max_depth 单棵树所可能达到的最大深度 min_sample_count 树节点持续分裂的最小样本数量,也就是说,小于这个数节点就不持续分裂,变成叶子了 regression_accuracy 回归树的终止条件,如果所有节点的精度都达到要求就停止 use_surrogates 是否使用代理分裂。通常都是false,在有缺损数据或计算变量重要性的场合为true,比如,变量是色彩,而图片中有一部分区域因为光照是全黑的 max_categories 将所有可能取值聚类到有限类,以保证计算速度。树会以次优分裂(suboptimal split)的形式生长。只对2种取值以上的树有意义 priors 优先级设置,设定某些你尤其关心的类或值,使训练过程更关注它们的分类或回归精度。通常不设置 calc_var_importance 设置是否需要获取变量的重要值,一般设置true nactive_vars 树的每个节点随机选择变量的数量,根据这些变量寻找最佳分裂。如果设置0值,则自动取变量总和的平方根 max_num_of_trees_in_the_forest R.T.中可能存在的树的最大数量 forest_accuracy 准确率(作为终止条件) termcrit_type 终止条件设置 — CV_TERMCRIT_ITER 以树的数目为终止条件,max_num_of_trees_in_the_forest生效 – CV_TERMCRIT_EPS 以准确率为终止条件,forest_accuracy生效 — CV_TERMCRIT_ITER | CV_TERMCRIT_EPS 两者同时作为终止条件 |

| CvRTrees::train | 训练R.T. return bool 训练是否成功 train_data 训练数据:样本(一个样本由固定数量的多个变量定义),以Mat的形式存储,以列或行排列,必须是CV_32FC1格式 tflag trainData的排列结构 — CV_ROW_SAMPLE 行排列 — CV_COL_SAMPLE 列排列 responses 训练数据:样本的值(输出),以一维Mat的形式存储,对应trainData,必须是CV_32FC1或CV_32SC1格式。对于分类问题,responses是类标签;对于回归问题,responses是需要逼近的函数取值 var_idx 定义感兴趣的变量,变量中的某些,传null表示全部 sample_idx 定义感兴趣的样本,样本中的某些,传null表示全部 var_type 定义responses的类型 — CV_VAR_CATEGORICAL 分类标签 — CV_VAR_ORDERED(CV_VAR_NUMERICAL)数值,用于回归问题 missing_mask 定义缺失数据,和train_data一样大的8位Mat params CvRTParams定义的训练参数 |

| CvRTrees::train | 训练R.T.(简短版的train函数) return bool 训练是否成功 data 训练数据:CvMLData格式,可从外部.csv格式的文件读入,内部以Mat形式存储,也是类似的value / responses / missing mask。 params CvRTParams定义的训练参数 |

| CvRTrees:predict | 对一组输入样本进行预测(分类或回归) return double 预测结果 sample 输入样本,格式同CvRTrees::train的train_data missing_mask 定义缺失数据 |

#include <cv.h>

#include <stdio.h>

#include <highgui.h>

#include <ml.h>

#include <map>

void print_result(float train_err, float test_err,

const CvMat* _var_imp)

{

printf( "train error %f\n", train_err );

printf( "test error %f\n\n", test_err );

if (_var_imp)

{

cv::Mat var_imp(_var_imp), sorted_idx;

cv::sortIdx(var_imp, sorted_idx, CV_SORT_EVERY_ROW +

CV_SORT_DESCENDING);

printf( "variable importance:\n" );

int i, n = (int)var_imp.total();

int type = var_imp.type();

CV_Assert(type == CV_32F || type == CV_64F);

for( i = 0; i < n; i++)

{

int k = sorted_idx.at<int>(i);

printf( "%d\t%f\n", k, type == CV_32F ?

var_imp.at<float>(k) :

var_imp.at<double>(k));

}

}

printf("\n");

}

int main()

{

const char* filename = "data.xml";

int response_idx = 0;

CvMLData data;

data.read_csv( filename ); // read data

data.set_response_idx( response_idx ); // set response index

data.change_var_type( response_idx,

CV_VAR_CATEGORICAL ); // set response type

// split train and test data

CvTrainTestSplit spl( 0.5f );

data.set_train_test_split( &spl );

data.set_miss_ch("?"); // set missing value

CvRTrees rtrees;

rtrees.train( &data, CvRTParams( 10, 2, 0, false,

16, 0, true, 0, 100, 0, CV_TERMCRIT_ITER ));

print_result( rtrees.calc_error( &data, CV_TRAIN_ERROR),

rtrees.calc_error( &data, CV_TEST_ERROR ),

rtrees.get_var_importance() );

return 0;

}

References:

[1] OpenCV 2.3 Online Documentation: http://opencv.itseez.com/modules/ml/doc/random_trees.html

[2] Random Forests, Leo Breiman and Adele Cutler: http://www.stat.berkeley.edu/users/breiman/RandomForests/cc_home.htm

[3] T. Hastie, R. Tibshirani, J. H. Friedman. The

Elements of Statistical Learning. ISBN-13 978-0387952840, 2003, Springer.

相关文章推荐

- Random forests, 随机森林,online random forests

- 随机森林(Random Foreast)

- Bagging(Bootstrap aggregating)、随机森林(random forests)、AdaBoost

- 装袋法(bagging)和随机森林(random forests)的区别

- Plotting trees from Random Forest models with ggraph

- Spark MLlib RandomForest(随机森林)建模与预测

- Rapidly-Exploring Random Trees(RRT)

- 2015-《HG-RRT∗: Human-Guided Optimal Random Trees for Motion Planning》

- Random forests, 随机森林,online random forests

- 随机森林分类(Random Forest Classification)

- Random Forests (随机森林)

- 【机器人学:运动规划】快速搜索随机树(RRT---Rapidly-exploring Random Trees)入门及在Matlab中演示

- 快速搜索随机树(RRT---Rapidly-exploring Random Trees)入门及在Matlab中演示

- 利用树的集成回归模型RandomForestRegressor/ExtraTreesRegressor/GradientBoostingRegressor进行回归预测(复习11)

- leetcode OJ Unique Binary Search Trees

- uva 10562 Undraw the Trees

- HDOJ 4010 Query on The Trees LCT

- Java中 Random随机用法与List集合配套使用实现随机点名

- hdu 1392 Surround the Trees( 凸包问题)

- Surround the Trees(凸包)