Lucene Study Notes, Part 7: The Lucene Search Process Explained (3)

2011-01-10 15:24


2.3 QueryParser parses the query string and builds a Query object

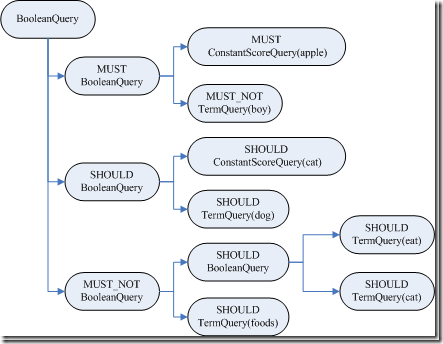

The code is:

    QueryParser parser = new QueryParser(Version.LUCENE_CURRENT, "contents",
            new StandardAnalyzer(Version.LUCENE_CURRENT));
    Query query = parser.parse("+(+apple* -boy) (cat* dog) -(eat~ foods)");

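The only point worth noting here is that the query string is parsed into a tree of Query objects. This tree is important: other trees are derived from it, and it runs through the entire search process.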

    query BooleanQuery (id=96)
      boost 1.0
      clauses ArrayList<E> (id=98)
        elementData Object[10] (id=100)
          [0] BooleanClause (id=102)
            occur BooleanClause$Occur$1 (id=106)
              name "MUST"  // AND
              ordinal 0
            query BooleanQuery (id=108)
              boost 1.0
              clauses ArrayList<E> (id=112)
                elementData Object[10] (id=113)
                  [0] BooleanClause (id=114)
                    occur BooleanClause$Occur$1 (id=106)
                      name "MUST"  // AND
                      ordinal 0
                    query PrefixQuery (id=116)
                      boost 1.0
                      numberOfTerms 0
                      prefix Term (id=117)
                        field "contents"
                        text "apple"
                      rewriteMethod MultiTermQuery$1 (id=119)
                        docCountPercent 0.1
                        termCountCutoff 350
                  [1] BooleanClause (id=115)
                    occur BooleanClause$Occur$3 (id=123)
                      name "MUST_NOT"  // NOT
                      ordinal 2
                    query TermQuery (id=125)
                      boost 1.0
                      term Term (id=127)
                        field "contents"
                        text "boy"
                size 2
              disableCoord false
              minNrShouldMatch 0
          [1] BooleanClause (id=104)
            occur BooleanClause$Occur$2 (id=129)
              name "SHOULD"  // OR
              ordinal 1
            query BooleanQuery (id=131)
              boost 1.0
              clauses ArrayList<E> (id=133)
                elementData Object[10] (id=134)
                  [0] BooleanClause (id=135)
                    occur BooleanClause$Occur$2 (id=129)
                      name "SHOULD"  // OR
                      ordinal 1
                    query PrefixQuery (id=137)
                      boost 1.0
                      numberOfTerms 0
                      prefix Term (id=138)
                        field "contents"
                        text "cat"
                      rewriteMethod MultiTermQuery$1 (id=119)
                        docCountPercent 0.1
                        termCountCutoff 350
                  [1] BooleanClause (id=136)
                    occur BooleanClause$Occur$2 (id=129)
                      name "SHOULD"  // OR
                      ordinal 1
                    query TermQuery (id=140)
                      boost 1.0
                      term Term (id=141)
                        field "contents"
                        text "dog"
                size 2
              disableCoord false
              minNrShouldMatch 0
          [2] BooleanClause (id=105)
            occur BooleanClause$Occur$3 (id=123)
              name "MUST_NOT"  // NOT
              ordinal 2
            query BooleanQuery (id=143)
              boost 1.0
              clauses ArrayList<E> (id=146)
                elementData Object[10] (id=147)
                  [0] BooleanClause (id=148)
                    occur BooleanClause$Occur$2 (id=129)
                      name "SHOULD"  // OR
                      ordinal 1
                    query FuzzyQuery (id=150)
                      boost 1.0
                      minimumSimilarity 0.5
                      numberOfTerms 0
                      prefixLength 0
                      rewriteMethod MultiTermQuery$ScoringBooleanQueryRewrite (id=152)
                      term Term (id=153)
                        field "contents"
                        text "eat"
                      termLongEnough true
                  [1] BooleanClause (id=149)
                    occur BooleanClause$Occur$2 (id=129)
                      name "SHOULD"  // OR
                      ordinal 1
                    query TermQuery (id=155)
                      boost 1.0
                      term Term (id=156)
                        field "contents"
                        text "foods"
                size 2
              disableCoord false
              minNrShouldMatch 0
        size 3
      disableCoord false
      minNrShouldMatch 0

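A few notes on the Query object:

A BooleanQuery combines all of its sub-clauses according to their Boolean relationships.

"+" corresponds to MUST: a clause that must be satisfied.

SHOULD marks a clause that may be satisfied. minNrShouldMatch is the minimum number of SHOULD clauses that have to match. It defaults to 0: since SHOULD expresses an OR relationship, it is acceptable for none of them to match (except when the query has no MUST clause).

"-" corresponds to MUST_NOT: a clause that must not be satisfied.

Among the leaf nodes of the tree:

The most basic one is TermQuery, which represents a single term.

A leaf can also be a PrefixQuery or a FuzzyQuery. Because of their special syntax, these queries may correspond to several terms rather than one, so each of them has a rewriteMethod object that points to an inner class of MultiTermQuery. This marks them as multi-term queries, which receive special treatment during the search.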
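The MUST / SHOULD / MUST_NOT rules above can be sketched as a small matching function over a document's term set. This is a toy model, not Lucene code: the class `BooleanClauseSketch` and its helpers `must`, `should`, `mustNot`, and `doc` are hypothetical names, and the sketch only decides whether a document matches, without any scoring.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Set;

/** Toy model of how BooleanQuery clauses decide a match; not Lucene code. */
final class BooleanClauseSketch {
    enum Occur { MUST, SHOULD, MUST_NOT }

    /** A leaf clause: one term plus how it has to occur. */
    static final class Clause {
        final Occur occur;
        final String term;
        Clause(Occur occur, String term) { this.occur = occur; this.term = term; }
    }

    static Clause must(String term) { return new Clause(Occur.MUST, term); }
    static Clause should(String term) { return new Clause(Occur.SHOULD, term); }
    static Clause mustNot(String term) { return new Clause(Occur.MUST_NOT, term); }

    /** Builds the term set of a (hypothetical) document. */
    static Set<String> doc(String... terms) { return new HashSet<>(Arrays.asList(terms)); }

    static boolean matches(Set<String> docTerms, int minNrShouldMatch, Clause... clauses) {
        boolean hasMust = false;
        int shouldHits = 0;
        for (Clause c : clauses) {
            boolean present = docTerms.contains(c.term);
            if (c.occur == Occur.MUST) {
                if (!present) return false;   // a required clause is missing
                hasMust = true;
            } else if (c.occur == Occur.MUST_NOT) {
                if (present) return false;    // a prohibited clause is present
            } else if (present) {
                shouldHits++;
            }
        }
        // With no MUST clause, at least one SHOULD clause has to match.
        int required = hasMust ? minNrShouldMatch : Math.max(1, minNrShouldMatch);
        return shouldHits >= required;
    }

    public static void main(String[] args) {
        // Query: +apple -boy cat
        Clause[] q = { must("apple"), mustNot("boy"), should("cat") };
        System.out.println(matches(doc("apple", "dog"), 0, q)); // MUST met, SHOULD is optional
        System.out.println(matches(doc("apple", "boy"), 0, q)); // MUST_NOT violated
        System.out.println(matches(doc("dog"), 0, should("cat"), should("eat"))); // no SHOULD hit
    }
}
```

Note that a query made only of MUST_NOT clauses matches nothing in this sketch, since it has neither a MUST clause nor a matching SHOULD clause.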

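2.4 Searching with the Query object

The code is: TopDocs docs = searcher.search(query, 50);

This eventually calls search(createWeight(query), filter, n);

The search process consists of the following sub-steps:

Create the Weight tree and compute the term weights

Create the Scorer and SumScorer trees in preparation for merging the posting lists

Merge the posting lists with the SumScorer

Collect the resulting documents and compute their scores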

2.4.1 Creating the Weight object tree and computing the term weights

IndexSearcher(Searcher).createWeight(Query) is implemented as follows:

    protected Weight createWeight(Query query) throws IOException {
        return query.weight(this);
    }

BooleanQuery(Query).weight(Searcher) is implemented as follows:

    public Weight weight(Searcher searcher) throws IOException {
        // Rewrite the Query object tree
        Query query = searcher.rewrite(this);
        // Create the Weight object tree
        Weight weight = query.createWeight(searcher);
        // Compute the term weight scores
        float sum = weight.sumOfSquaredWeights();
        float norm = getSimilarity(searcher).queryNorm(sum);
        weight.normalize(norm);
        return weight;
    }

This method performs three steps:

Rewrite the Query object tree

Create the Weight object tree

Compute the term weight scores
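The third step (sumOfSquaredWeights, queryNorm, normalize) can be illustrated with plain arithmetic. The sketch below is not Lucene code: `QueryNormSketch` is a hypothetical class, the query is flattened to a list of terms, each term's raw weight is assumed to be idf * boost, and queryNorm is assumed to be 1 / sqrt(sumOfSquaredWeights), which is how the default similarity of Lucene versions from this period is understood to define it.

```java
/** Sketch of the arithmetic behind sumOfSquaredWeights / queryNorm / normalize. */
final class QueryNormSketch {
    /** Sum over all terms of (idf * boost)^2. */
    static double sumOfSquaredWeights(double[] idf, double[] boost) {
        double sum = 0.0;
        for (int i = 0; i < idf.length; i++) {
            double w = idf[i] * boost[i];
            sum += w * w;
        }
        return sum;
    }

    /** Assumed default: 1 / sqrt(sumOfSquaredWeights). */
    static double queryNorm(double sumOfSquaredWeights) {
        return 1.0 / Math.sqrt(sumOfSquaredWeights);
    }

    /** Returns the normalized query weight of every term. */
    static double[] normalize(double[] idf, double[] boost) {
        double norm = queryNorm(sumOfSquaredWeights(idf, boost));
        double[] out = new double[idf.length];
        for (int i = 0; i < idf.length; i++) {
            out[i] = idf[i] * boost[i] * norm;
        }
        return out;
    }

    public static void main(String[] args) {
        // Two terms with made-up idf values 3 and 4 and boost 1.
        double[] normalized = normalize(new double[] {3, 4}, new double[] {1, 1});
        System.out.println(normalized[0] + " " + normalized[1]);
    }
}
```

After normalization the squared weights sum to 1, so the weights of different queries become comparable while the ranking within one query is unaffected.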

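2.4.1.1 Rewriting the Query object tree

As the rewrite function of BooleanQuery shows, rewriting is itself a recursive process that continues down to the leaf nodes of the Query object tree.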

The core of BooleanQuery.rewrite(IndexReader) is as follows:

    BooleanQuery clone = null;
    for (int i = 0; i < clauses.size(); i++) {
        BooleanClause c = clauses.get(i);
        // Rewrite the Query object of every sub-clause
        Query query = c.getQuery().rewrite(reader);
        if (query != c.getQuery()) {
            if (clone == null)
                clone = (BooleanQuery) this.clone();
            // Put the rewritten Query object into the cloned Query object tree
            clone.clauses.set(i, new BooleanClause(query, c.getOccur()));
        }
    }
    if (clone != null) {
        return clone; // A sub-clause was rewritten: return the cloned Query object tree
    } else
        return this;  // Otherwise return the original Query object tree
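The clone-only-when-changed pattern in this loop can be isolated in a small, self-contained sketch. The types `Node`, `Term`, `Prefix`, and `Bool` below are hypothetical stand-ins for Lucene's query classes, and `Prefix.rewrite()` simply returns a placeholder term instead of consulting an index.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Sketch of a recursive, copy-on-write rewrite of a query tree; not Lucene code. */
final class RewriteSketch {
    interface Node { Node rewrite(); }

    /** Leaf that needs no rewriting: returns itself. */
    static final class Term implements Node {
        final String text;
        Term(String text) { this.text = text; }
        public Node rewrite() { return this; }
    }

    /** Stand-in for a multi-term query: always replaced during rewrite. */
    static final class Prefix implements Node {
        final String prefix;
        Prefix(String prefix) { this.prefix = prefix; }
        public Node rewrite() { return new Term("expanded(" + prefix + "*)"); }
    }

    static final class Bool implements Node {
        final List<Node> children;
        Bool(Node... children) { this.children = new ArrayList<>(Arrays.asList(children)); }
        private Bool(List<Node> children) { this.children = children; }

        public Node rewrite() {
            List<Node> copy = null;
            for (int i = 0; i < children.size(); i++) {
                Node child = children.get(i);
                Node rewritten = child.rewrite();      // recurse into the sub-tree
                if (rewritten != child) {
                    if (copy == null) copy = new ArrayList<>(children); // copy lazily
                    copy.set(i, rewritten);
                }
            }
            // Unchanged trees are returned as-is; changed trees are returned as a copy.
            return copy == null ? this : new Bool(copy);
        }
    }

    public static void main(String[] args) {
        Bool plain = new Bool(new Term("boy"), new Term("dog"));
        System.out.println(plain.rewrite() == plain);   // same instance: nothing changed
        Bool mixed = new Bool(new Prefix("apple"), new Term("boy"));
        System.out.println(mixed.rewrite() == mixed);   // different instance: a child changed
    }
}
```

Because the original tree is never modified, it can be rewritten again later, for example against a different reader.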

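Lucene cannot search directly with a MultiTermQuery, that is, a query that stands for several terms, such as the PrefixQuery and FuzzyQuery in this example. Such a query has to be rewritten first:

First, all matching terms have to be looked up in the term dictionary of the index. For "appl*", for example, the dictionary may contain the terms "apple", "apples", and "apply". These terms take part in the search instead of the original "appl*", because "appl*" does not occur in the dictionary at all.

Then the terms that were found are reorganized into a new Query object to search with. There are essentially two approaches:

Approach 1: treat the terms as a single term. The numbers of the documents that contain any of them are collected into one set (a DocId set), which takes part in posting-list merging as a single posting list.

Approach 2: combine the terms into a BooleanQuery in which they are ORed together.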
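Both approaches start from the same dictionary lookup and differ only in what they build from the result. A minimal sketch, assuming a tiny in-memory inverted index (the class `MultiTermExpansionSketch` and its methods are hypothetical, not Lucene APIs): `expandPrefix` returns the term list that approach 2 would OR together, and `docIdSet` builds the merged document set of approach 1.

```java
import java.util.ArrayList;
import java.util.BitSet;
import java.util.List;
import java.util.TreeMap;

/** Sketch of prefix expansion over a sorted term dictionary; not Lucene code. */
final class MultiTermExpansionSketch {
    /** Sorted term dictionary mapping each term to its posting list (document numbers). */
    private final TreeMap<String, int[]> postings = new TreeMap<>();

    void add(String term, int... docIds) { postings.put(term, docIds); }

    /** Approach 2 input: every dictionary term that starts with the prefix. */
    List<String> expandPrefix(String prefix) {
        List<String> terms = new ArrayList<>();
        // Terms are sorted, so matches are contiguous starting at the first term >= prefix.
        for (String term : postings.tailMap(prefix, true).keySet()) {
            if (!term.startsWith(prefix)) break;
            terms.add(term);
        }
        return terms;
    }

    /** Approach 1: union the postings of all matching terms into one document set. */
    BitSet docIdSet(String prefix) {
        BitSet bits = new BitSet();
        for (String term : expandPrefix(prefix)) {
            for (int doc : postings.get(term)) bits.set(doc);
        }
        return bits;
    }

    public static void main(String[] args) {
        MultiTermExpansionSketch index = new MultiTermExpansionSketch();
        index.add("apple", 0, 1);
        index.add("apples", 1, 2);
        index.add("apply", 3);
        index.add("banana", 4);
        System.out.println(index.expandPrefix("appl")); // terms for an OR-ed BooleanQuery
        System.out.println(index.docIdSet("appl"));     // one merged document set
    }
}
```

The merged set no longer records which term matched which document, which is why approach 1 cannot use per-term statistics when scoring.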

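As the Query object tree above shows, every MultiTermQuery has a RewriteMethod member, which is what rewrites the Query object. There are several kinds:

ConstantScoreFilterRewrite takes approach 1. Its rewrite function is implemented as follows: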

    public Query rewrite(IndexReader reader, MultiTermQuery query) {
        Query result = new ConstantScoreQuery(
                new MultiTermQueryWrapperFilter<MultiTermQuery>(query));
        result.setBoost(query.getBoost());
        return result;
    }

getDocIdSet in MultiTermQueryWrapperFilter is implemented as follows:

    public DocIdSet getDocIdSet(IndexReader reader) throws IOException {
        // Get the term enumerator of the MultiTermQuery
        final TermEnum enumerator = query.getEnum(reader);
        try {
            if (enumerator.term() == null)
                return DocIdSet.EMPTY_DOCIDSET;
            // Create the set of document numbers that covers all matching terms
            final OpenBitSet bitSet = new OpenBitSet(reader.maxDoc());
            final int[] docs = new int[32];
            final int[] freqs = new int[32];
            TermDocs termDocs = reader.termDocs();
            try {
                int termCount = 0;
                // Loop over every term matched by the MultiTermQuery and add
                // its document numbers to the set
                do {
                    Term term = enumerator.term();
                    if (term == null)
                        break;
                    termCount++;
                    termDocs.seek(term);
                    while (true) {
                        final int count = termDocs.read(docs, freqs);
                        if (count != 0) {
                            for (int i = 0; i < count; i++) {
                                bitSet.set(docs[i]);
                            }
                        } else {
                            break;
                        }
                    }
                } while (enumerator.next());
                query.incTotalNumberOfTerms(termCount);
            } finally {
                termDocs.close();
            }
            return bitSet;
        } finally {
            enumerator.close();
        }
    }

A rewrite method that takes approach 2 (ScoringBooleanQueryRewrite, the rewriteMethod shown for the FuzzyQuery in the tree above) has the following rewrite function:

    public Query rewrite(IndexReader reader, MultiTermQuery query) throws IOException {
        // Get the term enumerator of the MultiTermQuery
        FilteredTermEnum enumerator = query.getEnum(reader);
        BooleanQuery result = new BooleanQuery(true);
        int count = 0;
        try {
            // Loop over every term matched by the MultiTermQuery and add it
            // to the BooleanQuery
            do {
                Term t = enumerator.term();
                if (t != null) {
                    TermQuery tq = new TermQuery(t);
                    tq.setBoost(query.getBoost() * enumerator.difference());
                    result.add(tq, BooleanClause.Occur.SHOULD);
                    count++;
                }
            } while (enumerator.next());
        } finally {
            enumerator.close();
        }
        query.incTotalNumberOfTerms(count);
        return result;
    }

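Approach 1 treats all terms matched by a MultiTermQuery as one term and builds a single DocId set from them, which takes part in posting-list merging as one posting list. However many such terms the index contains, only one posting list is merged, so no TooManyClauses exception is thrown and performance improves. The differences in tf, idf, and so on between the terms are ignored, however, which is why the RewriteMethods that take approach 1 are named ConstantScoreXXX: apart from the query boost specified by the user, all other score computation is skipped.

Approach 2 expands the whole Query object tree so that every leaf is a TermQuery, and the terms matched by the MultiTermQuery contribute to scoring according to their tf, idf, and so on in the index. We do not know in advance, however, how many terms in the index match the MultiTermQuery, so a TooManyClauses exception may be thrown, meaning that a single BooleanQuery has too many sub-queries. A large number of posting lists then has to be merged, which hurts performance.

Lucene considers it reasonable to skip scoring for a MultiTermQuery: a user who types "appl*" does not know which matching terms the index contains and has no preference among them, so computing the differences between those terms means little to the user. Lucene therefore also provides a ConstantScoreXXX variant for approach 2 to speed up searching. As the example further on shows, this does affect document scores, which remains a problem in real-world systems.

To get the benefits of both approaches, Lucene provides ConstantScoreAutoRewrite, which picks one or the other depending on the situation.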

ConstantScoreAutoRewrite.rewrite is implemented as follows:

    public Query rewrite(IndexReader reader, MultiTermQuery query) throws IOException {
        final Collection<Term> pendingTerms = new ArrayList<Term>();
        // Document count limit: docCountPercent defaults to 0.1, that is,
        // 0.1% of the total number of documents in the index
        final int docCountCutoff = (int) ((docCountPercent / 100.) * reader.maxDoc());
        // Term count limit, 350 by default
        final int termCountLimit = Math.min(BooleanQuery.getMaxClauseCount(), termCountCutoff);
        int docVisitCount = 0;
        FilteredTermEnum enumerator = query.getEnum(reader);
        try {
            // Loop over all terms that match the MultiTermQuery
            while (true) {
                Term t = enumerator.term();
                if (t != null) {
                    pendingTerms.add(t);
                    docVisitCount += reader.docFreq(t);
                }
                // Too many terms or too many documents could badly hurt
                // posting-list merging, so use approach 1, that is, the
                // ConstantScoreFilterRewrite way
                if (pendingTerms.size() >= termCountLimit || docVisitCount >= docCountCutoff) {
                    Query result = new ConstantScoreQuery(
                            new MultiTermQueryWrapperFilter<MultiTermQuery>(query));
                    result.setBoost(query.getBoost());
                    return result;
                } else if (!enumerator.next()) {
                    // Neither the terms nor the documents are too many, so merging
                    // is not at risk: use approach 2, that is, the
                    // ConstantScoreBooleanQueryRewrite way
                    BooleanQuery bq = new BooleanQuery(true);
                    for (final Term term : pendingTerms) {
                        TermQuery tq = new TermQuery(term);
                        bq.add(tq, BooleanClause.Occur.SHOULD);
                    }
                    Query result = new ConstantScoreQuery(new QueryWrapperFilter(bq));
                    result.setBoost(query.getBoost());
                    query.incTotalNumberOfTerms(pendingTerms.size());
                    return result;
                }
            }
        } finally {
            enumerator.close();
        }
    }
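The threshold logic of this method can be tried out in isolation. The sketch below is a simplified model, not Lucene code: `AutoRewriteSketch` and `choose` are hypothetical names, and the matching terms are represented only by an array of their document frequencies.

```java
/** Sketch of the filter-versus-BooleanQuery decision of an auto rewrite; not Lucene code. */
final class AutoRewriteSketch {
    enum Choice { FILTER, BOOLEAN_QUERY }

    /**
     * @param docFreqs document frequency of each matching term, in enumeration order
     * @param maxDoc   number of documents in the index
     */
    static Choice choose(int[] docFreqs, int maxDoc, double docCountPercent,
                         int termCountCutoff, int maxClauseCount) {
        int docCountCutoff = (int) ((docCountPercent / 100.0) * maxDoc);
        int termCountLimit = Math.min(maxClauseCount, termCountCutoff);
        int termCount = 0;
        int docVisitCount = 0;
        int i = 0;
        while (true) {
            if (i < docFreqs.length) {
                termCount++;
                docVisitCount += docFreqs[i];
            }
            // Too many terms or too many documents: fall back to the filter (approach 1).
            if (termCount >= termCountLimit || docVisitCount >= docCountCutoff) {
                return Choice.FILTER;
            }
            i++;
            // All terms seen without hitting a limit: a BooleanQuery is cheap enough.
            if (i >= docFreqs.length) {
                return Choice.BOOLEAN_QUERY;
            }
        }
    }

    public static void main(String[] args) {
        System.out.println(choose(new int[] {10, 20, 30}, 100_000, 0.1, 350, 1024));
        System.out.println(choose(new int[] {4}, 4, 0.1, 350, 1024));
    }
}
```

In the second call the index has only four documents, so the document cutoff truncates to 0 and the filter form is always chosen. This appears consistent with "apple*" being rewritten with approach 1 in this article's small test index.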

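getEnum of MultiTermQuery returns a FilteredTermEnum. It has two member variables: TermEnum actualEnum enumerates all terms in the index, and Term currentTerm points at the current term that satisfies the condition. The next() function of FilteredTermEnum is as follows: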

    public boolean next() throws IOException {
        if (actualEnum == null)
            return false;
        currentTerm = null;
        // Keep fetching the next term in the index
        while (currentTerm == null) {
            if (endEnum())
                return false;
            if (actualEnum.next()) {
                Term term = actualEnum.term();
                // If the index term satisfies the condition, make it the current term
                if (termCompare(term)) {
                    currentTerm = term;
                    return true;
                }
            } else
                return false;
        }
        currentTerm = null;
        return false;
    }
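The interplay between next(), termCompare, and the end-of-enumeration flag can be modeled over a sorted set of strings. This is a simplified sketch, not Lucene code: `FilteredEnumSketch`, `FilteredEnum`, `PrefixEnum`, `prefixEnum`, and `collect` are hypothetical names, and unlike the real class the sketch does not pre-position itself on the first term, so the first call to next() returns the first match.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.TreeSet;

/** Sketch of a filtered term enumeration with early termination; not Lucene code. */
final class FilteredEnumSketch {
    abstract static class FilteredEnum {
        private final Iterator<String> actual;  // walks the sorted dictionary
        protected boolean endEnum = false;      // set once no later term can match
        int visited = 0;                        // how many dictionary terms were examined
        String current;                         // the current matching term, or null

        FilteredEnum(Iterator<String> sortedTerms) { this.actual = sortedTerms; }

        protected abstract boolean termCompare(String term);

        boolean next() {
            current = null;
            while (!endEnum && actual.hasNext()) {
                String term = actual.next();
                visited++;
                if (termCompare(term)) {
                    current = term;
                    return true;
                }
            }
            return false;
        }
    }

    static final class PrefixEnum extends FilteredEnum {
        private final String prefix;
        PrefixEnum(Iterator<String> sortedTerms, String prefix) {
            super(sortedTerms);
            this.prefix = prefix;
        }
        @Override protected boolean termCompare(String term) {
            if (term.startsWith(prefix)) return true;
            endEnum = true;  // sorted order: once the prefix stops matching, stop for good
            return false;
        }
    }

    /** Starts the enumeration at the first dictionary term >= prefix. */
    static PrefixEnum prefixEnum(TreeSet<String> dictionary, String prefix) {
        return new PrefixEnum(dictionary.tailSet(prefix, true).iterator(), prefix);
    }

    static List<String> collect(FilteredEnum e) {
        List<String> out = new ArrayList<>();
        while (e.next()) out.add(e.current);
        return out;
    }

    public static void main(String[] args) {
        TreeSet<String> dict = new TreeSet<>(List.of("aardvark", "apple", "apply", "banana", "cherry"));
        PrefixEnum e = prefixEnum(dict, "app");
        System.out.println(collect(e) + " after examining " + e.visited + " terms");
    }
}
```

The enumeration stops at the first term that no longer has the prefix, so terms sorted after it are never examined.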

termCompare differs between MultiTermQuery subclasses.

For PrefixQuery, getEnum(IndexReader reader) returns a PrefixTermEnum, whose termCompare is implemented as follows:

    protected boolean termCompare(Term term) {
        // Any term with the same field and the same prefix satisfies the condition
        if (term.field() == prefix.field() && term.text().startsWith(prefix.text())) {
            return true;
        }
        endEnum = true;
        return false;
    }

For FuzzyQuery, getEnum returns a FuzzyTermEnum, whose termCompare is implemented as follows:

    protected final boolean termCompare(Term term) {
        // For FuzzyQuery the prefix is the empty string "", so the prefix test
        // always passes; what matters is the computed similarity
        if (field == term.field() && term.text().startsWith(prefix)) {
            final String target = term.text().substring(prefix.length());
            this.similarity = similarity(target);
            return (similarity > minimumSimilarity);
        }
        endEnum = true;
        return false;
    }

    // Compute the Levenshtein distance, also called the edit distance: the minimum
    // number of basic operations (insert, delete, substitute) needed to turn one
    // string into the other.
    private synchronized final float similarity(final String target) {
        final int m = target.length();
        final int n = text.length();
        // init matrix d
        for (int i = 0; i <= n; ++i) {
            p[i] = i;
        }
        // start computing edit distance
        for (int j = 1; j <= m; ++j) { // iterates through target
            int bestPossibleEditDistance = m;
            final char t_j = target.charAt(j - 1); // jth character of t
            d[0] = j;
            for (int i = 1; i <= n; ++i) { // iterates through text
                // minimum of cell to the left+1, to the top+1, diagonally left and up +(0|1)
                if (t_j != text.charAt(i - 1)) {
                    d[i] = Math.min(Math.min(d[i - 1], p[i]), p[i - 1]) + 1;
                } else {
                    d[i] = Math.min(Math.min(d[i - 1] + 1, p[i] + 1), p[i - 1]);
                }
                bestPossibleEditDistance = Math.min(bestPossibleEditDistance, d[i]);
            }
            // copy current distance counts to 'previous row' distance counts: swap p and d
            int _d[] = p;
            p = d;
            d = _d;
        }
        // after the final swap p holds the most recent row, so the distance is p[n]
        return 1.0f - ((float) p[n] / (float) (Math.min(n, m)));
    }

For details of the edit distance algorithm, see http://www.merriampark.com/ld.htm

The edit distance between two strings s and t is computed as follows:

Step 1: Set n to be the length of s. Set m to be the length of t. If n = 0, return m and exit. If m = 0, return n and exit. Construct a matrix containing 0..m rows and 0..n columns.

Step 2: Initialize the first row to 0..n. Initialize the first column to 0..m.

Step 3: Examine each character of s (i from 1 to n).

Step 4: Examine each character of t (j from 1 to m).

Step 5: If s[i] equals t[j], the cost is 0. If s[i] doesn't equal t[j], the cost is 1.

Step 6: Set cell d[i,j] of the matrix equal to the minimum of:
a. The cell immediately above plus 1: d[i-1,j] + 1.
b. The cell immediately to the left plus 1: d[i,j-1] + 1.
c. The cell diagonally above and to the left plus the cost: d[i-1,j-1] + cost.

Step 7: After the iteration steps (3, 4, 5, 6) are complete, the distance is found in cell d[n,m].

As an example, take the two strings "GUMBO" and "GAMBOL".
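The seven steps above translate almost directly into code. The following is a minimal sketch rather than Lucene's implementation: `EditDistanceSketch` is a hypothetical class, it keeps only two rows of the matrix (as the FuzzyTermEnum code does with p and d), and `similarity` assumes both strings are non-empty and mirrors the formula 1 - distance / min(n, m) used in this article.

```java
/** Two-row Levenshtein distance following Steps 1 to 7; not Lucene code. */
final class EditDistanceSketch {
    static int distance(String s, String t) {
        int n = s.length();
        int m = t.length();
        if (n == 0) return m;                       // Step 1
        if (m == 0) return n;
        int[] prev = new int[n + 1];
        int[] curr = new int[n + 1];
        for (int i = 0; i <= n; i++) prev[i] = i;   // Step 2: first row is 0..n
        for (int j = 1; j <= m; j++) {              // Step 4: each character of t
            curr[0] = j;                            // Step 2: first column is 0..m
            for (int i = 1; i <= n; i++) {          // Step 3: each character of s
                int cost = s.charAt(i - 1) == t.charAt(j - 1) ? 0 : 1;  // Step 5
                curr[i] = Math.min(Math.min(curr[i - 1] + 1, prev[i] + 1),
                                   prev[i - 1] + cost);                 // Step 6
            }
            int[] tmp = prev;                       // the finished row becomes the previous row
            prev = curr;
            curr = tmp;
        }
        return prev[n];                             // Step 7
    }

    /** Similarity as used in this article: 1 - distance / min(length). Inputs must be non-empty. */
    static float similarity(String text, String target) {
        return 1.0f - (float) distance(text, target) / Math.min(text.length(), target.length());
    }

    public static void main(String[] args) {
        System.out.println(distance("GUMBO", "GAMBOL"));  // one substitution (U to A) plus one insertion (L)
        System.out.println(similarity("eat", "cat"));     // distance 1 over a minimum length of 3
    }
}
```

For "GUMBO" and "GAMBOL" the distance is 2. For "eat" and "cat" the distance is 1, giving a similarity of about 0.667, which is above the minimumSimilarity of 0.5 shown for the FuzzyQuery in the tree above, so "cat" qualifies as a match for "eat~".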

Consider an index to which the following four documents were added:

    file01.txt : apple other other other other
    file02.txt : apple apple other other other
    file03.txt : apple apple apple other other
    file04.txt : apple apple apple apple other

Searching for "apple" returns:

    docid : 3 score : 0.67974937
    docid : 2 score : 0.58868027
    docid : 1 score : 0.4806554
    docid : 0 score : 0.33987468

The documents are ranked by how many times they contain "apple".

Searching for "apple*", however, returns:

    docid : 0 score : 1.0
    docid : 1 score : 1.0
    docid : 2 score : 1.0
    docid : 3 score : 1.0

In other words, Lucene gives up computing the score.

After rewriting, the Query object tree of the example query looks like this:

    query BooleanQuery (id=89)
      boost 1.0
      clauses ArrayList<E> (id=90)
        elementData Object[3] (id=97)
          [0] BooleanClause (id=99)
            occur BooleanClause$Occur$1 (id=103)
              name "MUST"
              ordinal 0
            query BooleanQuery (id=105)
              boost 1.0
              clauses ArrayList<E> (id=115)
                elementData Object[2] (id=120)
                  // "apple*" is rewritten with approach 1 into a ConstantScoreQuery
                  [0] BooleanClause (id=121)
                    occur BooleanClause$Occur$1 (id=103)
                      name "MUST"
                      ordinal 0
                    query ConstantScoreQuery (id=123)
                      boost 1.0
                      filter MultiTermQueryWrapperFilter<Q> (id=125)
                        query PrefixQuery (id=48)
                          boost 1.0
                          numberOfTerms 0
                          prefix Term (id=127)
                            field "contents"
                            text "apple"
                          rewriteMethod MultiTermQuery$1 (id=50)
                  [1] BooleanClause (id=122)
                    occur BooleanClause$Occur$3 (id=111)
                      name "MUST_NOT"
                      ordinal 2
                    query TermQuery (id=124)
                      boost 1.0
                      term Term (id=130)
                        field "contents"
                        text "boy"
                modCount 0
                size 2
              disableCoord false
              minNrShouldMatch 0
          [1] BooleanClause (id=101)
            occur BooleanClause$Occur$2 (id=108)
              name "SHOULD"
              ordinal 1
            query BooleanQuery (id=110)
              boost 1.0
              clauses ArrayList<E> (id=117)
                elementData Object[2] (id=132)
                  // "cat*" is rewritten with approach 1 into a ConstantScoreQuery
                  [0] BooleanClause (id=133)
                    occur BooleanClause$Occur$2 (id=108)
                      name "SHOULD"
                      ordinal 1
                    query ConstantScoreQuery (id=135)
                      boost 1.0
                      filter MultiTermQueryWrapperFilter<Q> (id=137)
                        query PrefixQuery (id=63)
                          boost 1.0
                          numberOfTerms 0
                          prefix Term (id=138)
                            field "contents"
                            text "cat"
                          rewriteMethod MultiTermQuery$1 (id=50)
                  [1] BooleanClause (id=134)
                    occur BooleanClause$Occur$2 (id=108)
                      name "SHOULD"
                      ordinal 1
                    query TermQuery (id=136)
                      boost 1.0
                      term Term (id=140)
                        field "contents"
                        text "dog"
                modCount 0
                size 2
              disableCoord false
              minNrShouldMatch 0
          [2] BooleanClause (id=102)
            occur BooleanClause$Occur$3 (id=111)
              name "MUST_NOT"
              ordinal 2
            query BooleanQuery (id=113)
              boost 1.0
              clauses ArrayList<E> (id=119)
                elementData Object[2] (id=142)
                  [0] BooleanClause (id=143)
                    occur BooleanClause$Occur$2 (id=108)
                      name "SHOULD"
                      ordinal 1
                    // "eat~" is a FuzzyQuery and is rewritten into a BooleanQuery.
                    // The terms in the index that satisfy it are "eat" and "cat".
                    // FuzzyQuery uses none of the RewriteMethods above; it implements
                    // its own rewrite function along the lines of approach 2, building
                    // a BooleanQuery from at most maxClauseCount (1024 by default)
                    // terms with the smallest edit distance to "eat".
                    query BooleanQuery (id=145)
                      boost 1.0
                      clauses ArrayList<E> (id=146)
                        elementData Object[10] (id=147)
                          [0] BooleanClause (id=148)
                            occur BooleanClause$Occur$2 (id=108)
                              name "SHOULD"
                              ordinal 1
                            query TermQuery (id=150)
                              boost 1.0
                              term Term (id=152)
                                field "contents"
                                text "eat"
                          [1] BooleanClause (id=149)
                            occur BooleanClause$Occur$2 (id=108)
                              name "SHOULD"
                              ordinal 1
                            query TermQuery (id=151)
                              boost 0.33333325
                              term Term (id=153)
                                field "contents"
                                text "cat"
                        modCount 2
                        size 2
                      disableCoord true
                      minNrShouldMatch 0
                  [1] BooleanClause (id=144)
                    occur BooleanClause$Occur$2 (id=108)
                      name "SHOULD"
                      ordinal 1
                    query TermQuery (id=154)
                      boost 1.0
                      term Term (id=155)
                        field "contents"
                        text "foods"
                modCount 0
                size 2
              disableCoord false
              minNrShouldMatch 0
        modCount 0
        size 3
      disableCoord false
      minNrShouldMatch 0
